X-TUNE: Difference between revisions

From Modelado Foundation

imported>Mhall No edit summary |

imported>Mhall |

||

| Line 208: | Line 208: | ||

* Software Releases | * Software Releases | ||

** CHiLL and CUDA-CHiLL provide the autotuning compiler technology, and are available from | ** CHiLL and CUDA-CHiLL provide the autotuning compiler technology, and are available from http://github.com/CtopCsUtahEdu/chill-dev | ||

** Orio manages navigation of the autotuning search space and is available from | ** Orio manages navigation of the autotuning search space and is available from http://brnorris03.github.io/Orio | ||

** Orio-CHiLL: new module in Orio provides integration with CHiLL and CUDA-CHiLL, and is available from http://github.com/brnorris03/Orio/tree/master/orio/module/chill | |||

** SURF: new search algorithm module in Orio available from http://github.com/brnorris03/Orio/tree/master/orio/main/tuner/search/mlsearch | |||

** TCR: domain-specific tensor contraction code generation and decision algorithm for GPUs available from http://github.com/axelyamel/tcg-autotuning | |||

* Other Software Products | * Other Software Products | ||

** miniGMG application proxy: extended to include high‐order stencil implementations | ** miniGMG application proxy: extended to include high‐order stencil implementations | ||

** CHiLL and CUDA-CHiLL: new domain-specific transformations incorporated | ** CHiLL and CUDA-CHiLL: new domain-specific transformations incorporated | ||

** Orio: new search algorithm incorporated | ** Orio: new search algorithm incorporated and integration with CHiLL and CUDA-CHiLL | ||

** NWCHEM kernels: representative tensor computations released | ** NWCHEM kernels: representative tensor computations released | ||

** PBound: new decision algorithm and integration with CHiLL | ** PBound: new decision algorithm and integration with CHiLL | ||

** OCTOPI: tensor contraction domain-specific framework | ** OCTOPI: tensor contraction domain-specific framework | ||

Revision as of 20:44, June 5, 2015

| X-TUNE | |

|---|---|

| |

| Team Members | U. of Utah, ANL, LBNL, USC/ISI |

| PI | Mary Hall (U. of Utah) |

| Co-PIs | Paul Hovland (ANL), Samuel Williams (LBNL), Jacqueline Chame (USC/ISI) |

| Website | http://ctop.cs.utah.edu/x-tune/ |

| Download | {{{download}}} |

Autotuning for Exascale or X-TUNE - Self-Tuning Software to manage Heterogeneity

Team Members

- University of Utah: Mary Hall (Lead PI)

- Argonne National Laboratory (ANL): Paul Hovland, Stefan Wild, Krishna Narayanan, Jeff Hammond

- Lawrence Berkeley National Laboratory (LBNL): Sam Williams, Lenny Oliker, Brian van Straalen

- University of Southern California: Information Science Institute (USC/ISI): Jacqueline Chame

What is Autotuning?

Definition:

- Automatically generate a “search space” of possible implementations of a computation

- A code variant represents a unique implementation of a computation, among many

- A parameter represents a discrete set of values that govern code generation or execution of a variant

- Measure execution time and compare

- Select the best-performing implementation (for exascale, tradeoff between performance/energy/reliability)

Key Issues:

- Identifying the search space

- Pruning the search space to manage costs

- Off-line vs. on-line search

Three Types of Autotuning Systems

- Autotuning libraries

- Library that encapsulates knowledge of its performance under different execution environments

- Dense linear algebra: ATLAS, PhiPAC

- Sparse linear algebra: OSKI

- Signal processing: SPIRAL, FFTW

- Application-specific autotuning

- Active Harmony provides parallel rank order search for tunable parameters and variants

- Sequoia and PetaBricks provide language mechanism for expressing tunable parameters and variants

- Compiler-based autotuning

- Other examples: Saday et al., Swany et al., Eignenmann et al.

- Related concepts: iterative compilation, learning-based compilation

X-TUNE Goals

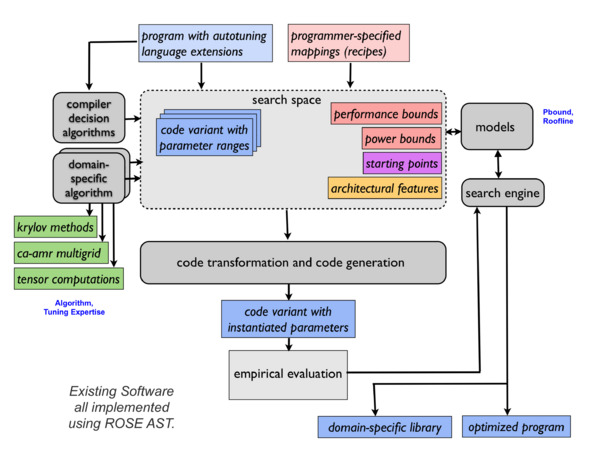

A unified autotuning framework that seamlessly integrates programmer-directed and compiler-directed autotuning

- Expert programmer and compiler work collaboratively to tune a code

- Unlike previous systems that place the burden on either programmer or compiler

- Provides access to compiler optimizations, offering expert programmers the control over optimization they so often desire

- Design autotuning to be encapsulated in domain-specific tools

- Enables less-sophisticated users of the software to reap the benefit of the expert programmers’ efforts

- Focus on Adaptive Mesh Refinement Multigrid (Combustion Co-Design Center, BoxLib, Chombo) and tensor contractions (TCE)

X-TUNE Structure

Autotuning Language Extensions

- Tunable Variables

- An annotation on the type of a variable (as in Sequoia)

- Additionally, specify range, constraints and a default value

- Computation Variants

- An annotation on the type of a function (as in PetaBricks)

- Additionally, specify (partial) selection criteria

- Multiple variants may be composed in the same execution

Separate mapping description captures architecture-specific aspects of autotuning.

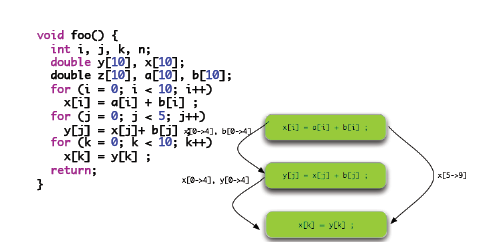

Compiler-Based Autotuning

- Foundational Concepts

- Identify search space through a high-level description that captures a large space of possible implementations

- Prune space through compiler domain knowledge and architecture features

- Provide access to programmers with transformation recipes, or recipes generated automatically by compiler decision algorithm

- Uses source-to-source transformation for portability, and to leverage vendor code generation

- Requires restructuring of the compiler

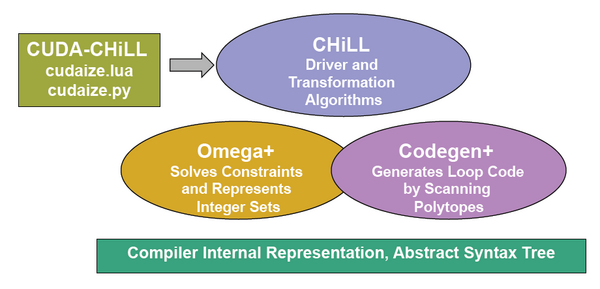

CHiLL Implementation

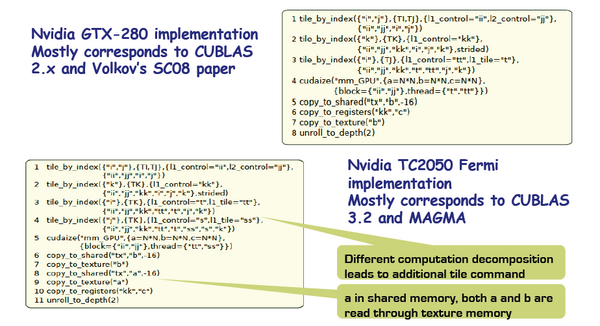

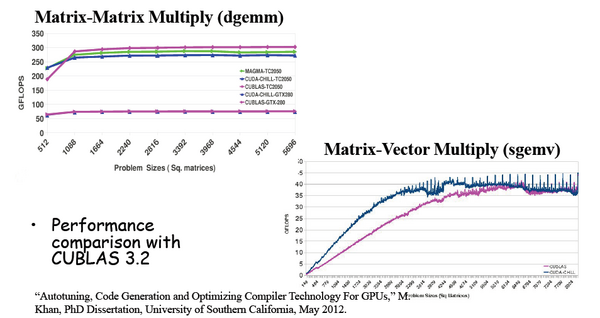

Transformation Recipes for Autotuning

Incorporate the Best Ideas from Manual Tuning

Compiler + Autotuning can yield comparable and even better performance than manually-tuned libraries

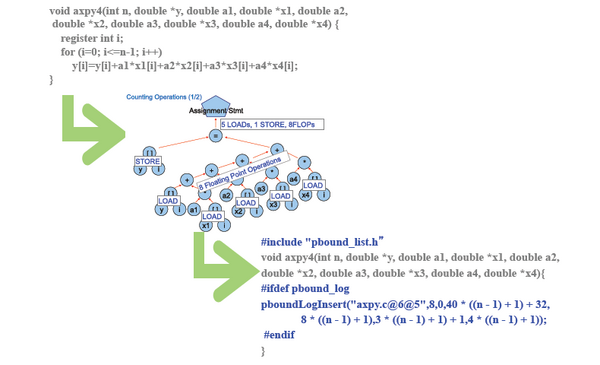

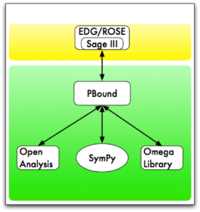

Pbound: Performance Modeling for Autotuning

- Performance modeling increases the automation in autotuning

- Manual transformation recipe generation is tedious and error-prone

- Implicit models are not portable across platforms

- Models can unify programmer guidance and compiler analysis

- Programmer can invoke integrated models to guide autotuning from application code

- Compiler can invoke models during decision algorithms

- Models optimize autotuning search

- Identify starting points

- Prune search space to focus on most promising solutions

- Provide feedback from updates in response to code modifications

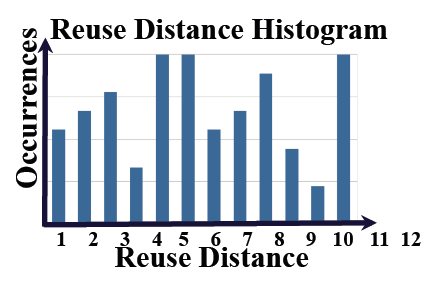

Reuse Distance and Cache Miss Prediction

Reuse distance

- For regular (affine) array references

- Compute reuse distance, to predict data footprints in memory hierarchy

- Guides transformation and data placement decisions

Cache miss prediction

- Use to predict misses

- Assuming fully associative cache with n lines (optimistic case), a reference will hit if the reuse distance d<n

Application Signatures + Architecture

How will modeling be used?

- Single-core and multicore models for application performance will combine architectural information, user-guidance, and application analysis

- Models will be coupled with decision algorithms to automatically generate CHiLL transformation recipes

- Input: Reuse Information, Loop Information etc.

- Output: Set of transformation scripts to be used by empirical search

- Feedback to be used to refine model parameters and behavior

- Small and large application execution times will be considered

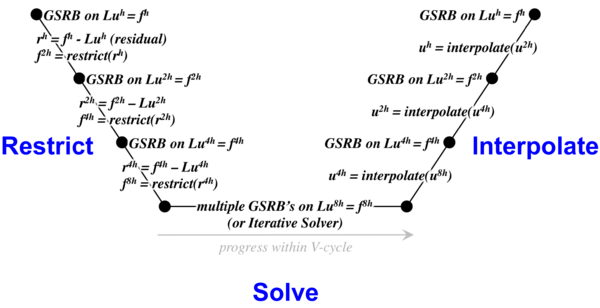

Example: Stencils and Multigrid

- Stencil performance bound, when bandwidth limited:

Performance (gflops) <= stencil flops * STREAM bandwidth / grid size

- Multigrid solves Au=f by calculating a number of corrections to an initial solution at varying grid coarsenings (“V-cycle”)

- Each level in the v-cycle: perform 1-4 relaxes (~stencil sweeps)

- Repeat multiple v-cycles reducing the norm of the residual by an order of magnitude each cycle

Multigrid and Adaptive Mesh Refinement

- Some regions of the domain may require finer fidelity than others

- In Adaptive Mesh Refinement, we refine those regions to a higher resolution in time and space

- Typically, one performs a multigrid “level solve” for one level (green, blue, red) at a time

- Coarse-fine boundaries (neighboring points can be at different resolutions) complicate the calculation of the RHS and ghost zones for the level

- Each level is a collection of small (323 or 643) boxes to minimize unnecessary work

- These boxes will be distributed across the machine for load balancing (neighbors are not obvious/implicit)

Autotuning for AMR Multigrid

- Focus is addressing data movement, multifaceted:

- Automate fusion of stencils within an operator. Doing so may entail aggregation of communication (deeper ghost zones)

- Extend and automate the communication-avoiding techniques developed in CACHE

- Automate application of data movement-friendly coarse-fine boundary conditions

- Automate hierarchical parallelism within a node to AMR MG codes

- Explore alternate data structures

- Explore alternate stencil algorithms (higher order, …)

- Proxy architectures: MIC, BG/Q, GPUs

- Encapsulate into an embedded DSL approach

Summary and Leverage

- Build integrated end-to-end autotuning, focused on AMR multigrid and tensor contractions

- Language and compiler guidance of autotuning

- Programmer and compiler collaborate to tune a code

- Modeling assists programmer, compiler writer, and search space pruning

- Leverage and integrate with other X-Stack teams

- Our compiler technology all based on ROSE so can leverage from and provide capability to ROSE

- Domain-specific technology to facilitate encapsulating our autotuning strategies

- Collaborate with MIT on autotuning interface

- Common run-time for a variety of platforms (e.g., GPUs and MIC), and supports a large number of potentially hierarchical threads

Products

- Publications

- T. Nelson, A. Rivera, M. Hall, P.D. Hovland, E. Jessup and B. Norris, "Generating Efficient Tensor Contractions for GPUs, International Conference on Parallel Processing, Sept., 2015.

- P. Basu, S. Williams, B. V. Straalen, M. Hall, L. Oliker, P. Colella, "Compiler-Directed Transformation for Higher-Order Stencils, International Parallel and Distributed Processing Symposium (IPDPS), 2015.

- A. Rivera. "Using Autotuning for Accelerating Tensor-Contraction on GPUs", Masters thesis, University of Utah, December 2014.

- P. Basu, S. Williams, B. V. Straalen, L. Oliker, M. Hall, ``Converting Stencils to Accumulations for Communication-Avoiding Optimization in Geometric Multigrid, Workshop on Stencil Computations (WOSC), 2014.

- P. Basu, A. Venkat, M. Hall, S. Williams, B. V. Straalen, L. Oliker, "Compiler generation and autotuning of communication-avoiding operators for geometric multigrid, 20th International Conference on High Performance Computing (HiPC), 2013.

- P. Basu, M. Hall, M. Khan, S. Maindola, S. Muralidharan, S. Ramalingam, A. Rivera, M. Shantharam, A. Venkat. "Towards Making Autotuning Mainstream. International Journal of High Performance Computing Applications, 27(4), November 2013.

- P. Basu, S. Williams, A. Venkat, B. Van Straalen, M. Hall, and L. Oliker. "Compiler generation and autotuning of communication-avoiding operators for geometric multigrid, In Workshop on Optimizing Stencil Computations (WOSC), 2013.

- Software Releases

- CHiLL and CUDA-CHiLL provide the autotuning compiler technology, and are available from http://github.com/CtopCsUtahEdu/chill-dev

- Orio manages navigation of the autotuning search space and is available from http://brnorris03.github.io/Orio

- Orio-CHiLL: new module in Orio provides integration with CHiLL and CUDA-CHiLL, and is available from http://github.com/brnorris03/Orio/tree/master/orio/module/chill

- SURF: new search algorithm module in Orio available from http://github.com/brnorris03/Orio/tree/master/orio/main/tuner/search/mlsearch

- TCR: domain-specific tensor contraction code generation and decision algorithm for GPUs available from http://github.com/axelyamel/tcg-autotuning

- Other Software Products

- miniGMG application proxy: extended to include high‐order stencil implementations

- CHiLL and CUDA-CHiLL: new domain-specific transformations incorporated

- Orio: new search algorithm incorporated and integration with CHiLL and CUDA-CHiLL

- NWCHEM kernels: representative tensor computations released

- PBound: new decision algorithm and integration with CHiLL

- OCTOPI: tensor contraction domain-specific framework