CORVETTE: Difference between revisions

From Modelado Foundation

imported>Ksen |

imported>Ksen |

||

| Line 57: | Line 57: | ||

== Publications == | == Publications == | ||

* ''Fast Reproducible Floating-Point Summation''. ARITH'13. | * ''Fast Reproducible Floating-Point Summation''. J. Demmel and H.D. Nguyen. In Proceedings of the 21st IEEE Symposium on Computer Arithmetic (ARITH'13), Austin, TX, April 2013. | ||

* | * ''Numerical Accuracy and Reproducibility at ExaScale''. J. Demmel and H.D. Nguyen. In Proceedings of the 21st IEEE Symposium on Computer Arithmetic (ARITH'13), Austin, TX, April 2013. | ||

* "Scaling Data Race Detection for Partitioned Global Address Space Programs". ICS'13. | * "Scaling Data Race Detection for Partitioned Global Address Space Programs". ICS'13. | ||

* "Precimonious: Tuning Assistant for Floating-Point Precision". SC'13. | * "Precimonious: Tuning Assistant for Floating-Point Precision". SC'13. | ||

Revision as of 15:36, April 25, 2014

| CORVETTE | |

|---|---|

| |

| Team Members | UC Berkeley, LBNL |

| PI | Koushik Sen (UC Berkeley) |

| Co-PIs | James W. Demmel (UC Berkeley), Costin Iancu (LBNL) |

| Website | CORVETTE |

| Download | {{{download}}} |

Program Correctness, Verification, and Testing for Exascale or CORVETTE

Team Members

Researchers

Postdoctoral Researchers

Graduate Student Researcher

Motivation

- High performance scientific computing

- Exascale: O(106) nodes, O(103) cores per node

- Requires asynchrony and “relaxed” memory consistency

- Shared memory with dynamic task parallelism

- Languages allow remote memory modification

- Correctness challenges

- Non-deterministic causes hard to diagnose correctness and performance bugs

- Data races, atomicity violations, deadlocks …

- Bugs in DSL

- Scientific applications use floating-points: non-determinism leads to non-reproducible results

- Numerical exceptions can cause rare but critical bugs that are hard for non-experts to detect and fix

- Non-deterministic causes hard to diagnose correctness and performance bugs

Goals

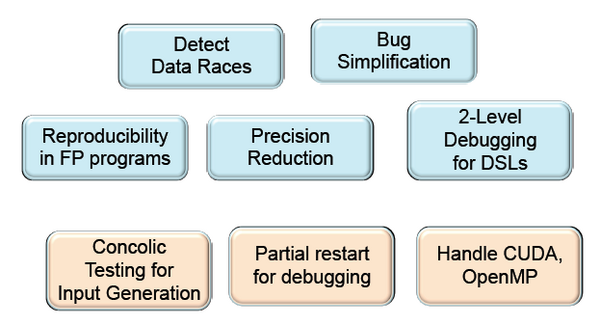

Develop correctness tools for different programming models: PGAS, MPI, dynamic parallelism

I. Testing and Verification

- Identify sources of non-determinism in executions

- Data races, atomicity violations, non‐reproducible floating point results

- Explore state-of-the-art techniques that use dynamic analysis

- Develop precise and scalable tools: < 2X overhead

II. Debugging

- Use minimal amount of concurrency to reproduce bug

- Support two-level debugging of high-level abstractions

- Detect causes of floating-point anomalies and determine the minimum precision needed to fix them

Publications

- Fast Reproducible Floating-Point Summation. J. Demmel and H.D. Nguyen. In Proceedings of the 21st IEEE Symposium on Computer Arithmetic (ARITH'13), Austin, TX, April 2013.

- Numerical Accuracy and Reproducibility at ExaScale. J. Demmel and H.D. Nguyen. In Proceedings of the 21st IEEE Symposium on Computer Arithmetic (ARITH'13), Austin, TX, April 2013.

- "Scaling Data Race Detection for Partitioned Global Address Space Programs". ICS'13.

- "Precimonious: Tuning Assistant for Floating-Point Precision". SC'13.

- "Parallel Reproducible Summation". To appear in IEEE Transaction on Computer, Special Section on Computer Arithmetic 2014.

Technical Presentations

Software Releases

Testing and Verification Tools

Scalable Testing of Parallel Programs

- Concurrent Programming is hard

- Bugs happen non‐deterministically

- Data races, deadlocks, atomicity violations, etc.

- Goals: build a tool to test and debug concurrent and parallel programs

- Efficient: reduce overhead from 10x‐100x to 2x

- Precise

- Reproducible

- Scalable

- Active random testing

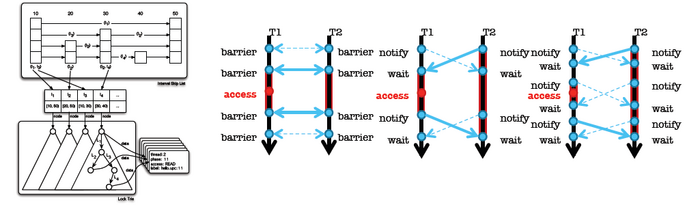

Active Testing

- Phase 1: Static or dynamic analysis to find potential concurrency bug patterns, such as data races, deadlocks, atomicity violations

- Data races: Eraser or lockset based [PLDI’08]

- Atomicity violations: cycle in transactions and happens‐before relation [FSE’08]

- Deadlocks: cycle in resource acquisition graph [PLDI’09]

- Publicly available tool for Java/Pthreads/UPC [CAV’09]

- Memory model bugs: cycle in happens‐before graph [ISSTA’11]

- For UPC programs running on thousands of cores [SC’11]

- Phase 2: “Direct” testing (or model checking) based on the bug patterns obtained from phase 1

- Confirm bugs

Goals

- Goal 1. Nice to have a trace exhibiting the data race

- Goal 2. Nice to have a trace exhibiting the assertion failure

- Goal 3. Nice to have a trace with fewer threads

- Goal 4. Nice to have a trace with fewer context switches

Challenges for Exascale

- Java and pthreads programs

- Synchronization with locks and condition variables

- Single node

- Exascale has different programming models

- Large scale

- Bulk communication

- Collective operations with data movement

- Memory consistency

- Distributed shared memory

- Cannot use centralized dynamic analyses

- Cannot instrument and track every statement

Further Challenges

- Targeted a simple programming paradigm

- Threads and shared memory

- Similar techniques are available for MPI and CUDA

- ISP, DAMPI, MARMOT, Umpire, MessageChecker

- TASS uses symbolic execu7on

- PUG for CUDA

- Analyze programs that mix different paradigms

- OpenMP, MPI, Shared Distributed Memory

- Need to correlate non‐determinism across paradigms

How Well Does it Scale?

- Maximum 8% slowdown at 8K cores

- Franklin Cray XT4 Supercomputer at NERSC

- Quad‐core 2.x3GHz CPU and 8GB RAM per node

- Portals interconnect

- Optimizations for scalability

- Efficient Data Structures

- Minimize Communication

- Sampling with Exponential Backoff

Debugging Tools

Debugging Project I

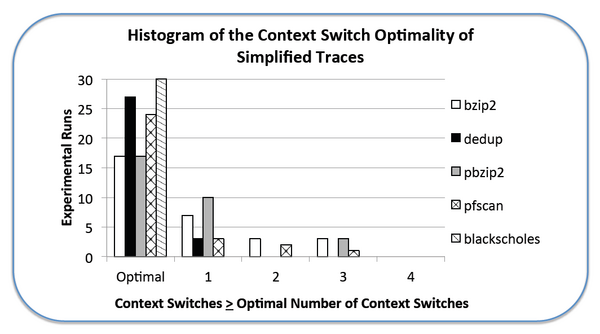

- Detect bug with fewer threads and fewer context switches

Experience with C/PThreads: Over 90% of simplified traces were within 2 context switches of optimal

Small model hypothesis for parallel programs

- Most bugs can be found with few threads

- 2‐3 threads

- No need to run on thousands of nodes

- Most bugs can be found with fewer context switches [Musuvathi and Qadeer, PLDI 07]

- Helps in sequential debugging

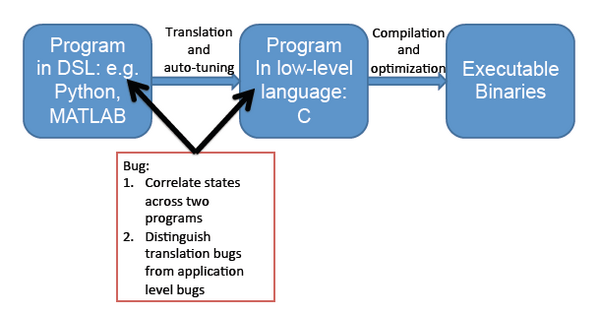

Debugging Project II

- Two‐level debugging of DSLs

- Correlate program state across program versions

Debugging Project III

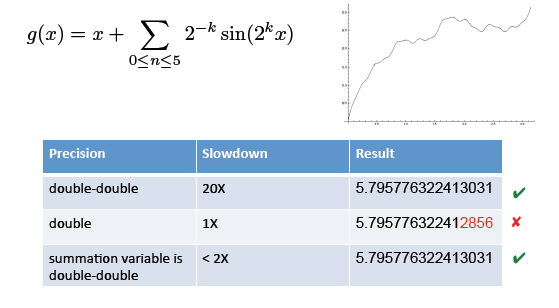

- Find floating point anomalies

- Recommend safe reduction of precision

Floating Point Debugging

Why do we care?

- Usage of floating point programs has been growing rapidly

- HPC

- Cloud, games, graphics, finance, speech, signal processing

- Most programmers are not expert in floating‐point!

- Why not use highest precision everywhere

- High precision wastes

- Energy

- Time

- Storage

Floating Point Debugging Problem 1: Reduce unnecessary precision

- Consider the problem of finding the arc length of the function

How can we find a minimal set of code fragments whose precision must be high?

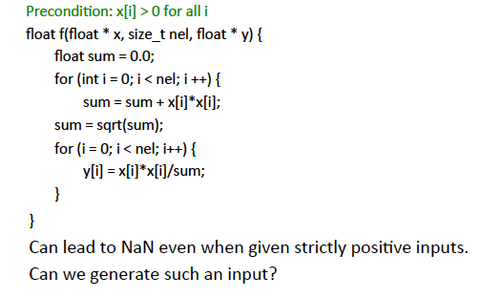

Floating Point Debugging Problem 2: Detect Inaccuracy and Anomaly

What can we do?

- We can reduce precision “safely”

- reduce power, improve performance, get better answer

- Automated testing and debugging techniques

- To recommend “precision reduction”

- Formal proof of “safety” can be replaced by Concolic testing

- Approach: automate previously hand-made debugging

- Concolic testing

- Delta debugging [Zeller et al.]

Implementation

- Prototype implementation for C programs

- Uses CIL compiler framework

- http://perso.univ-perp.fr/guillaume.revy/index.php?page=debugging

- Future plans

- Build on top of LLVM compiler framework

Potential Collaboration

- Dynamic analyses to find bugs ‐ dynamic parallelism, unstructured parallelism, shared memory

- DEGAS, XPRESS, Traleika Glacier

- Floating point debugging

- Co‐design centers

- 2‐level debugging

- D-TEC

Summary

- Build testing tools

- Close to what programmers use

- Hide formal methods and program analysis under testing

- If you are not obsessed with formal correctness

- Testing and debugging can help you solve these problems with high confidence