HPX-5

From Modelado Foundation


Latest revision as of 19:42, November 28, 2016

Announcement

Indiana University announces HPX-5 version 4.0 runtime system software!

The Center for Research in Extreme Scale Technologies (CREST) at Indiana University is pleased to announce the release of version 4.0 of HPX-5, a state-of-the-art runtime system for extreme-scale computing. Version 4.0 marks a significant step in the maturation of HPX-5 as a runtime for efficient, scalable, general-purpose high-performance computing. It incorporates new performance optimizations, additional features of the ParalleX execution model, and programmer services including C++ bindings and collectives.

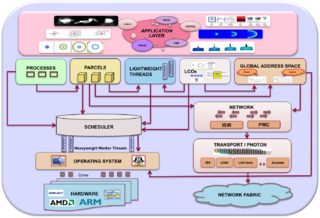

HPX-5 is a realization of the ParalleX execution model, which establishes the runtime's roles and responsibilities with respect to other interoperating system layers and explicitly includes a performance model that provides an analytic framework for performance and optimization. As an Asynchronous Multi-Tasking (AMT) software system, HPX-5 is event-driven: where appropriate, it migrates continuations and moves work to data, relying on sophisticated local control objects for synchronization (e.g., futures, dataflow) and on active messages. ParalleX compute complexes, embodied as lightweight, first-class threads, can block, perform global mutable side-effects, employ non-strict firing rules, and serve as continuations. HPX-5 employs an active global address space in which virtually addressed objects can migrate across the physical system without changing their addresses. First-class named processes can span and share nodes.
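Conceptually, an active global address space can be pictured as a translation table from stable global addresses to the locality that currently holds each object. The C sketch below is a hypothetical single-process model: `gas_table`, `gas_alloc`, `gas_owner` and `gas_migrate` are illustrative names, not the HPX-5 API, and a real AGAS is distributed across localities and would also need to handle messages that are in flight while an object migrates.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical, single-process model of an active global address space.
 * A global address is an opaque 64-bit handle that never changes; the table
 * maps it to the locality (node rank) that currently owns the object. */
#define GAS_MAX_OBJECTS 1024

typedef uint64_t gas_addr_t;

typedef struct {
  int owner[GAS_MAX_OBJECTS];  /* owning locality for each object slot */
  size_t count;                /* number of allocated objects */
} gas_table;

/* Allocate a new global object on locality `rank`. Returns its global
 * address, or 0 if the table is full (0 is reserved as the null address). */
static gas_addr_t gas_alloc(gas_table *t, int rank) {
  if (t->count >= GAS_MAX_OBJECTS) return 0;
  t->owner[t->count] = rank;
  t->count += 1;
  return (gas_addr_t)t->count;  /* valid addresses start at 1 */
}

/* Translate a global address to its current owner, or -1 if invalid. */
static int gas_owner(const gas_table *t, gas_addr_t addr) {
  if (addr == 0 || addr > t->count) return -1;
  return t->owner[addr - 1];
}

/* Migrate an object: only the binding changes, the address stays valid. */
static int gas_migrate(gas_table *t, gas_addr_t addr, int new_rank) {
  if (addr == 0 || addr > t->count) return -1;
  t->owner[addr - 1] = new_rank;
  return 0;
}
```

Because callers keep only `gas_addr_t` values, migrating an object leaves every stored reference valid; only the table entry that binds the address to a locality changes.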

HPX-5 is an evolving runtime system used both to enable dynamic adaptive parallel applications and to conduct path-finding experiments that quantify the effects of latency, overhead, contention, and parallelism of its integral mechanisms. These performance parameters define a trade-off space within which dynamic control is exercised to achieve the best performance. This is an area of active research, driven by complex applications and advances in HPC architecture. HPX-5 employs dynamic and adaptive resource management and task scheduling to achieve the significant improvements in efficiency and scalability needed to deploy many classes of parallel applications on the largest current and future supercomputers in the nation and the world. Although still under development, HPX-5 is portable to a diverse set of systems, is reliable and programmable, scales across multi-core and multi-node systems, and delivers efficiency improvements for irregular, time-varying problems.
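One way to make the overhead side of this trade-off concrete is a deliberately simplified granularity model (an assumption for illustration, not the ParalleX performance model itself): if each task performs `w` seconds of useful work and incurs a fixed runtime overhead of `o` seconds, efficiency is w / (w + o), and keeping efficiency at or above a target `e` requires a grain of at least o * e / (1 - e). The function names below are hypothetical.

```c
/* Simplified granularity model (illustrative only): each task does `work`
 * seconds of useful computation and costs `overhead` seconds of runtime
 * bookkeeping. Efficiency is the fraction of time spent on useful work. */
static double task_efficiency(double work, double overhead) {
  return work / (work + overhead);
}

/* Smallest task grain that keeps efficiency at or above `target`
 * (0 < target < 1). Solving w / (w + o) >= target for w gives
 * w >= o * target / (1 - target). */
static double min_grain(double overhead, double target) {
  return overhead * target / (1.0 - target);
}
```

For example, with a per-task overhead of 1 microsecond, a 90% efficiency target needs tasks of at least about 9 microseconds of work. Latency, contention and starvation add further terms that this one-parameter model ignores, which is why the runtime must trade them off dynamically.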

HPX-5 is written primarily in portable C99 and is released under an open source BSD license. Future major releases will be delivered semi-annually, and correctness and performance bug fixes will be made available as required. To support active engagement with the larger developer community, active development branches are available. HPX-5 will also be disseminated through the OpenHPC consortium led by the Linux Foundation.

| HPX-5 Architecture | |
|---|---|
| Team Members | Thomas Sterling, Andrew Lumsdaine, Kelsey Shephard, Jayashree Candadai, Matt Anderson, Luke Dalessandro, Daniel Kogler, Abhishek Kulkarni |
| PI | Ron Brightwell, Sandia |
| Co-PI | Andrew Lumsdaine |
| Website | http://hpx.crest.iu.edu |
| Download | http://hpx.crest.iu.edu/download |

Audience

HPX-5 is used for a broad range of scientific applications, helping scientists and developers write code that performs better on irregular applications and at scale than code written with more conventional programming models such as MPI. For the application developer, it provides dynamic adaptive resource management and task scheduling to reach otherwise unachievable efficiency in time and energy, as well as scalability. HPX-5 supports such applications by implementing features such as the Active Global Address Space (AGAS), ParalleX processes, complexes (ParalleX threads and thread management), parcel transport and parcel management, Local Control Objects (LCOs), and localities.

Fine-grained computation is expressed using actions. Computation is logically grouped into processes, which provide quiescence and termination detection. LCOs are synchronization objects that manage local and distributed control flow and have a global address. The heart of HPX-5 is a lightweight thread scheduler that schedules lightweight actions directly by multiplexing them onto a set of heavyweight scheduler threads.
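A scheduler that multiplexes lightweight actions onto a fixed set of heavyweight OS threads can be sketched with POSIX threads. This is a hypothetical, simplified model rather than HPX-5's scheduler: a single mutex-protected ring buffer stands in for the runtime's queues, actions run to completion (they cannot block the way HPX-5 threads can), and all names (`scheduler`, `sched_spawn`, `sched_run`, `tree_action`) are illustrative. The `outstanding` counter gives a crude form of quiescence detection: workers exit only when no task is queued or running.

```c
#include <pthread.h>
#include <stdatomic.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical sketch: lightweight actions (a function plus an argument) are
 * queued and executed by a small pool of heavyweight OS threads. */
#define QUEUE_CAP 256
#define MAX_WORKERS 16

typedef void (*action_fn)(void *arg);
typedef struct { action_fn fn; void *arg; } task;

typedef struct {
  task items[QUEUE_CAP];   /* ring buffer of pending tasks */
  size_t head, tail;       /* pop at head, push at tail */
  size_t outstanding;      /* tasks queued or currently running */
  pthread_mutex_t lock;
  pthread_cond_t changed;
} scheduler;

static void sched_init(scheduler *s) {
  s->head = s->tail = s->outstanding = 0;
  pthread_mutex_init(&s->lock, NULL);
  pthread_cond_init(&s->changed, NULL);
}

/* Enqueue an action; returns 0 on success or -1 if the queue is full. */
static int sched_spawn(scheduler *s, action_fn fn, void *arg) {
  pthread_mutex_lock(&s->lock);
  if (s->tail - s->head == QUEUE_CAP) {
    pthread_mutex_unlock(&s->lock);
    return -1;
  }
  s->items[s->tail % QUEUE_CAP] = (task){fn, arg};
  s->tail++;
  s->outstanding++;
  pthread_cond_signal(&s->changed);   /* wake one idle worker */
  pthread_mutex_unlock(&s->lock);
  return 0;
}

/* Worker loop: run tasks until no task is queued or running (quiescence). */
static void *sched_worker(void *p) {
  scheduler *s = p;
  pthread_mutex_lock(&s->lock);
  for (;;) {
    while (s->head == s->tail && s->outstanding > 0)
      pthread_cond_wait(&s->changed, &s->lock);
    if (s->outstanding == 0) break;
    task t = s->items[s->head % QUEUE_CAP];
    s->head++;
    pthread_mutex_unlock(&s->lock);
    t.fn(t.arg);                        /* the action may spawn more work */
    pthread_mutex_lock(&s->lock);
    if (--s->outstanding == 0)
      pthread_cond_broadcast(&s->changed);  /* let idle workers exit */
  }
  pthread_mutex_unlock(&s->lock);
  return NULL;
}

/* Multiplex all queued actions onto `nworkers` OS threads. */
static void sched_run(scheduler *s, int nworkers) {
  pthread_t tids[MAX_WORKERS];
  if (nworkers > MAX_WORKERS) nworkers = MAX_WORKERS;
  for (int i = 0; i < nworkers; i++)
    pthread_create(&tids[i], NULL, sched_worker, s);
  for (int i = 0; i < nworkers; i++)
    pthread_join(tids[i], NULL);
}

/* Example action: count a visit and spawn two children until depth 0,
 * giving a binary tree of 2^(depth+1) - 1 tasks in total. */
static scheduler g_sched;
static atomic_long g_visits;

static void tree_action(void *arg) {
  intptr_t depth = (intptr_t)arg;
  atomic_fetch_add(&g_visits, 1);
  if (depth > 0) {
    sched_spawn(&g_sched, tree_action, (void *)(depth - 1));
    sched_spawn(&g_sched, tree_action, (void *)(depth - 1));
  }
}
```

Seeding the queue with `tree_action` at depth 6 and calling `sched_run(&g_sched, 4)` executes 127 tasks (2^7 - 1) on four OS threads. A production runtime would typically replace the single locked queue with per-worker queues and work stealing to reduce contention.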

Features in HPX-5

- Fine-grained execution through blockable lightweight threads and unified access to a global address space.

- A high-performance PGAS implementation that supports low-level one-sided operations and two-sided active messages with continuations, plus an experimental AGAS option with active load balancing that allows the binding of global addresses to physical addresses to change dynamically.

- Makes concurrency manageable through synchronization based on globally allocated lightweight control objects (LCOs), such as futures, gates, reductions, and dataflow, which allow thread execution or parcel instantiation to wait for events without consuming execution resources.

- Higher-level abstractions, including asynchronous remote procedure call options, data-parallel loop constructs, and system abstractions such as timers.

- An implementation of ParalleX processes that provides programmers with termination detection and per-process collectives.

- Photon networking library synthesizing RDMA-with-remote-completion directly on top of uGNI, IB verbs, or libfabric. For portability and legacy support, HPX-5 emulates RDMA-with-remote-completion using MPI point-to-point messaging.

- Programmer services including C++ bindings and collectives (prototype non-blocking network collectives for hierarchical process collective operation).

- Leverages distributed GPUs and co-processors (Intel Xeon Phi) through experimental OpenCL support.

- PAPI support for profiling.

- Integration with the APEX policy engine (Autonomic Performance Environment for eXascale) for runtime adaptation, as well as with RCR and the LXK OS.

- A migration path for legacy applications through easy interoperability and coexistence with traditional runtimes such as MPI. HPX-5 4.0 is also released with several applications: LULESH, Wavelet AMR, HPCG, CoMD, and the ParalleX Graph Library.
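The way an LCO lets a waiter depend on an event without holding an execution resource can be illustrated with a future that stores continuations instead of parking a thread. This single-threaded C sketch is hypothetical: `future`, `future_then`, `future_set` and `add_to` are illustrative names rather than the HPX-5 LCO API, and a real LCO would also need synchronization between threads and a global address.

```c
#include <stddef.h>

/* Hypothetical single-threaded sketch of an LCO-style future: a waiter does
 * not occupy a worker; it registers a continuation that runs when the value
 * arrives. */
#define FUTURE_MAX_WAITERS 8

typedef void (*continuation_fn)(long value, void *env);

typedef struct {
  int is_set;
  long value;
  size_t nwaiters;
  continuation_fn fns[FUTURE_MAX_WAITERS];
  void *envs[FUTURE_MAX_WAITERS];
} future;

static void future_init(future *f) {
  f->is_set = 0;
  f->value = 0;
  f->nwaiters = 0;
}

/* Attach a continuation; runs it immediately if the value is already set.
 * Returns -1 if the waiter list is full. */
static int future_then(future *f, continuation_fn fn, void *env) {
  if (f->is_set) {
    fn(f->value, env);
    return 0;
  }
  if (f->nwaiters == FUTURE_MAX_WAITERS) return -1;
  f->fns[f->nwaiters] = fn;
  f->envs[f->nwaiters] = env;
  f->nwaiters++;
  return 0;
}

/* Set the value once and fire every registered continuation.
 * Returns -1 if the future was already set (single assignment). */
static int future_set(future *f, long value) {
  if (f->is_set) return -1;
  f->is_set = 1;
  f->value = value;
  for (size_t i = 0; i < f->nwaiters; i++) f->fns[i](value, f->envs[i]);
  f->nwaiters = 0;
  return 0;
}

/* Example continuation: accumulate the delivered value into a long. */
static void add_to(long value, void *env) { *(long *)env += value; }
```

Registering `add_to` twice before calling `future_set(&f, 21)` leaves 42 in the accumulator, and a later `future_then` on the already-set future runs immediately. Gates and reductions could follow the same pattern, firing their continuations when a counter reaches zero or when all contributions have arrived.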

Timeline

The HPX-5 source code is distributed with a liberal open-source license.

- v1.0.0 released on 3rd May 2015.

- v2.0.0 released on 17th November 2015.

- v3.0.0 released on 5th May 2016.

- v4.0.0 released on 11th November 2016.

- v5.0.0 is scheduled to be released in May 2017.

HPX-5 is developed with an agile process that includes continuous integration, regular point releases, and frequent regression tests for correctness and performance. Users can submit issue reports to the development team through the HPX-5 issue tracker (https://gitlab.crest.iu.edu/extreme/hpx/issues). Future major releases of HPX-5 will be delivered semi-annually, and bug fixes will be made available between major releases.

The HPX-5 source code is released under the BSD open-source license and is distributed with a complete set of tests along with selected sample applications. HPX-5 is funded and supported by the DoD, DOE, and NSF, and is actively used in projects such as PSAAP, XPRESS, and XSEDE. Further information and downloads for HPX-5 can be found at http://hpx.crest.iu.edu.

Quick Start Instructions

If you plan to use HPX-5, we suggest starting with the latest released version (currently HPX-5 v4.0.0), which can be downloaded from https://hpx.crest.iu.edu/download. Follow the installation directions in the hpx/INSTALL file.

Links

- Webpage (http://hpx.crest.iu.edu).

- HPX-5 documentation (http://hpx.crest.iu.edu/documentation): the user guide and developer guide are online, along with the HPX-5 developer policy.

- HPX-5 tutorial (http://hpx.crest.iu.edu/tutorials).

- HPX-5 source code (http://hpx.crest.iu.edu/git_repository): the source code is available as a git repository that can be cloned.

- Applications (http://hpx.crest.iu.edu/applications).

- Frequently asked questions (http://hpx.crest.iu.edu/faqs_and_tutorials).

- Mailing lists (http://hpx.crest.iu.edu/contact).