DEGAS: Difference between revisions

From Modelado Foundation

imported>Cdenny No edit summary |

imported>Cdenny No edit summary |

||

| Line 23: | Line 23: | ||

== Mission == | == Mission == | ||

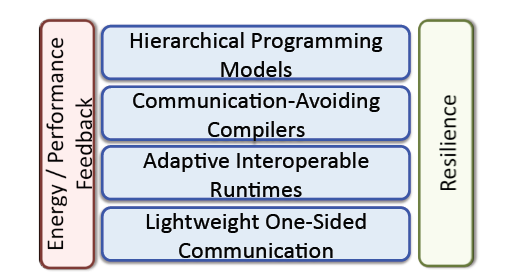

'''Mission Statement:''' To ensure the broad success of Exascale systems through a unified programming model that is productive, scalable, portable, and interoperable, and meets the unique Exascale demands of energy efficiency and resilience. | '''Mission Statement:''' To ensure the broad success of Exascale systems through a unified programming model that is productive, scalable, portable, and interoperable, and meets the unique Exascale demands of energy efficiency and resilience. | ||

[[File:DEGAS-Mission.png]] | |||

== Goals & Objectives == | == Goals & Objectives == | ||

| Line 31: | Line 33: | ||

* '''Energy Efficiency:''' Avoid communication, which will dominate energy costs, and adapt to performance heterogeneity due to system-level energy management | * '''Energy Efficiency:''' Avoid communication, which will dominate energy costs, and adapt to performance heterogeneity due to system-level energy management | ||

* '''Interoperability:''' Encourage use of languages and features through incremental adoption | * '''Interoperability:''' Encourage use of languages and features through incremental adoption | ||

| Line 43: | Line 38: | ||

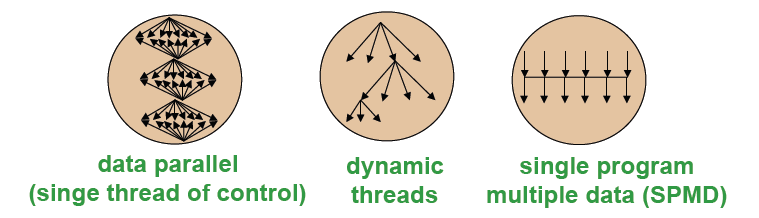

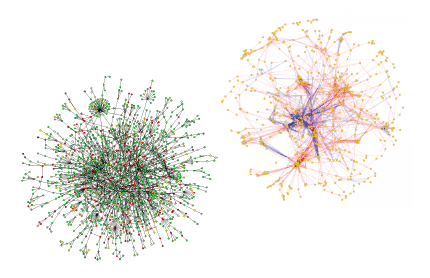

=== Two Distinct Parallel Programming Questions === | === Two Distinct Parallel Programming Questions === | ||

* What is the parallel control model? | * What is the parallel control model? | ||

[[File: | [[File:DEGAS-Parallel-Control-Model.png]] | ||

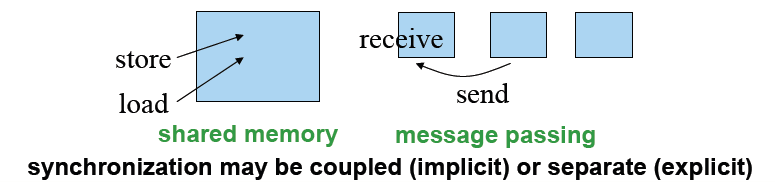

* What is the model for sharing/communication? | * What is the model for sharing/communication? | ||

[[File: | [[File:DEGAS-Sharing-Model.png]] | ||

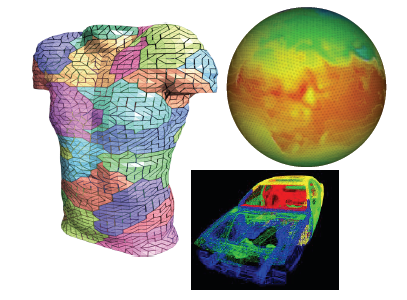

=== Applications Drive New Programming Models | === Applications Drive New Programming Models === | ||

* Message Passing Programming | * Message Passing Programming | ||

** Divide up domain in pieces | ** Divide up domain in pieces | ||

** Compute one piece and exchange | ** Compute one piece and exchange | ||

** '''MPI and many libraries''' | ** '''MPI and many libraries''' | ||

[[File:DEGAS-Message-Passing.png]] | |||

* Global Address Space Programming | * Global Address Space Programming | ||

| Line 58: | Line 54: | ||

** Grab whatever/whenever | ** Grab whatever/whenever | ||

** '''UPC, CAF, X10, Chapel, Fortress, Titanium, GlobalArrays''' | ** '''UPC, CAF, X10, Chapel, Fortress, Titanium, GlobalArrays''' | ||

[[File:DEGAS-Global-Address-Space.png]] | |||

=== Hierarchical Programming Model === | === Hierarchical Programming Model === | ||

[[File:DEGAS- | [[File:DEGAS-Hierarchical-PM.png|right]] | ||

* Goal: Programmability of exascale applications while providing scalability, locality, energy efficiency, resilience, and portability | * '''Goal:''' Programmability of exascale applications while providing scalability, locality, energy efficiency, resilience, and portability | ||

** ''Implicit constructs:'' parallel multidimensional loops, global distributed data structures, adaptation for performance heterogeneity | ** ''Implicit constructs:'' parallel multidimensional loops, global distributed data structures, adaptation for performance heterogeneity | ||

** ''Explicit constructs:'' asynchronous tasks, phaser synchronization, locality | ** ''Explicit constructs:'' asynchronous tasks, phaser synchronization, locality | ||

| Line 83: | Line 80: | ||

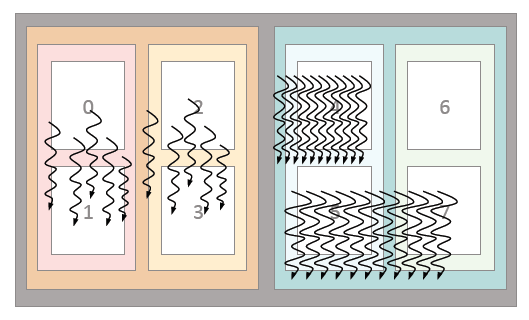

=== Communication-Avoiding Compilers === | === Communication-Avoiding Compilers === | ||

[[File:DEGAS-Communication-Node.png|right]] | |||

* '''Goal:''' massive parallelism, deep memory and network hierarchies, plus functional and performance heterogeneity | |||

** '''Fine‐grained task and data parallelism:''' enable performance portability | |||

** '''Heterogeneity:''' guided by functional, energy and performance characteristics | |||

** '''Energy efficiency:''' minimize data movement and hooks to runtime adaptation | |||

** '''Programmability:''' manage details of memory, heterogeneity, and containment | |||

** '''Scalability:''' communication and synchronization hiding through asynchrony | |||

* H-PGAS into the Node | |||

** Communication is all data movement | |||

* Build on code‐generation infrastructure | |||

** ROSE for H‐CAF and Communication‐Avoidance optimizations | |||

** BUPC and Habanero‐C; Zoltan | |||

** Additional theory of CA code generation | |||

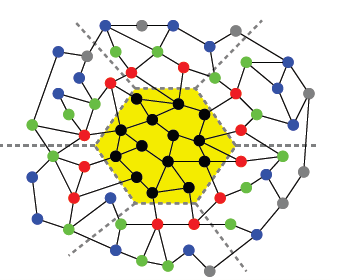

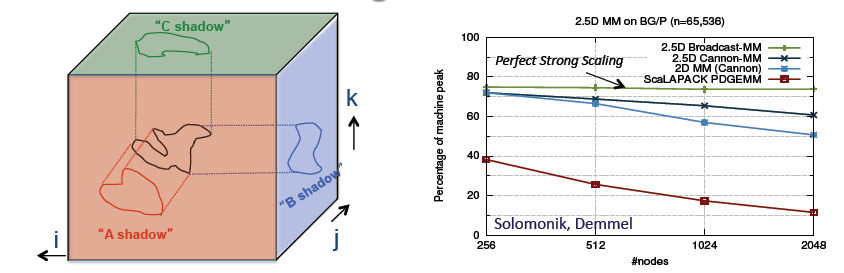

=== Exascale Programming: Support for Future Algorithms === | |||

[[File:DEGAS-Algorithm.png]] | |||

* '''Approach:''' “Rethink” algorithms to optimize for data movement | |||

** New class of communication‐optimal algorithms | |||

** Most codes are not bandwidth limited, but many should be | |||

* '''Challenges:''' How general are these algorithms? | |||

** Can they be automated and for what types of loops? | |||

** How much benefit is there in practice? | |||

=== Adaptive Runtime Systems (ARTS) === | |||

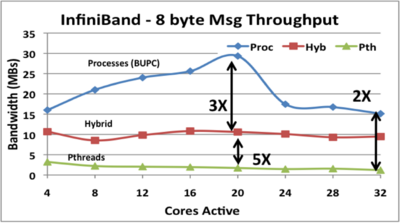

[[File:DEGAS-Infiniband-Throughput.png|right|400px]] | |||

* '''Goal:''' Adaptive runtime for manycore systems that are hierarchical, heterogeneous and provide asymmetric performance | |||

** '''Reactive and proactive control:''' for utilization and energy efficiency | |||

** '''Integrated tasking and communication:''' for hybrid programming | |||

** '''Sharing of hardware threads:''' required for library interoperability | |||

* '''Novelty:''' Scalable control; integrated tasking with communication | |||

** '''Adaptation:''' Runtime annotated with performance history/intentions | |||

** '''Performance models:''' Guide runtime optimizations, specialization | |||

** '''Hierarchical:''' Resource/energy | |||

** '''Tunable control:''' Locality/load balance | |||

* '''Leverages:''' Existing runtimes | |||

** '''Lithe''' scheduler composition; '''Juggle''' | |||

** '''BUPC and Habanero‐C''' runtimes | |||

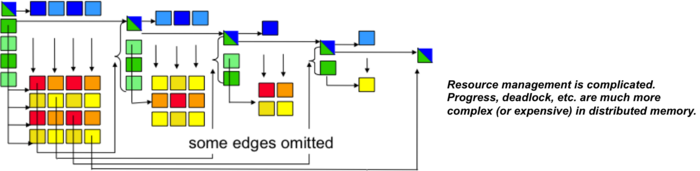

=== Synchronization Avoidance vs Resource Management === | |||

[[File:DEGAS-Resource-Mgmt.png|700px]] | |||

* Management of critical resources will be more important: | |||

** ''Memory and network bandwidth limited'' by cost and energy | |||

** ''Capacity limited at many levels:'' network buffers at interfaces, internal network congestion are real and growing problems | |||

* Can runtimes manage these or do users need to help? | |||

** Adaptation based on history and (user‐supplied) intent? | |||

** Where will bottlenecks be for a given architecture and application? | |||

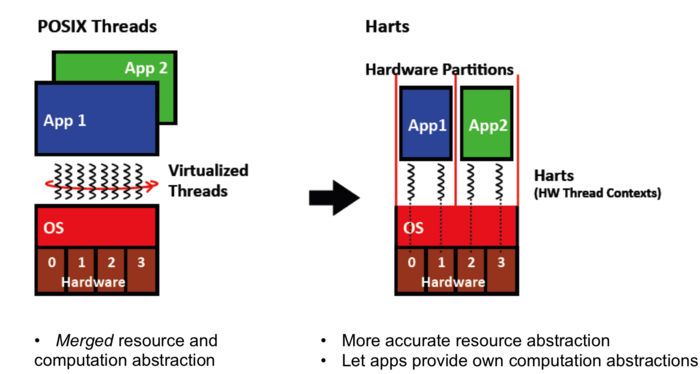

=== Lithe Scheduling Abstraction: "Harts" (Hardware Threads) === | |||

[[File:DEGAS-Harts.png|700px]] | |||

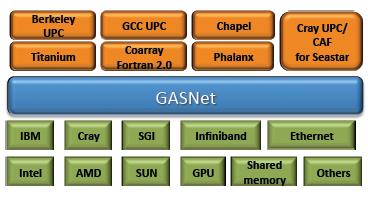

=== Lightweight Communication (GASNet-EX) === | |||

[[File:DEGAS-GASNet.png|right]] | |||

* '''Goal:''' Maximize bandwidth use with lightweight communication | |||

** '''One‐sided communication:''' to avoid over‐synchronization | |||

** '''Active‐Messages:''' for productivity and portability | |||

** '''Interoperability:''' with MPI and threading layers | |||

* '''Novelty:''' | |||

** Congestion management: for 1‐sided communication with ARTS | |||

** Hierarchical: communication management for H‐PGAS | |||

** Resilience: globally consistent states and fine‐grained fault recovery | |||

** Progress: new models for scalability and interoperability | |||

* '''Leverage GASNet''' (redesigned): | |||

** Major changes for on‐chip interconnects | |||

** Each network has unique opportunities | |||

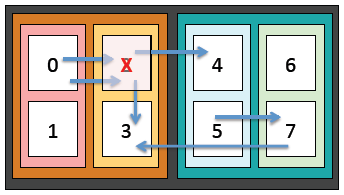

=== Resilience through Containment Domains === | |||

[[File:DEGAS-Resilience.png|right]] | |||

* '''Goal:''' Provide a resilient runtime for PGAS applications | |||

** Applications should be able to customize resilience to their needs | |||

** Resilient runtime that provides easy‐to‐use mechanisms | |||

* '''Novelty:''' Single analyzable abstraction for resilience | |||

** PGAS Resilience consistency model | |||

** Directed and hierarchical preservation | |||

** Global or localized recovery | |||

** Algorithm and system‐specific detection, elision, and recovery | |||

* '''Leverage:''' Combined superset of prior approaches | |||

** Fast checkpoints for large bulk updates | |||

** Journal for small frequent updates | |||

** Hierarchical checkpoint‐restart | |||

** OS‐level save and restore | |||

** Distributed recovery | |||

'''Resilience: Research Questions''' | |||

1. How to define consistent (i.e. allowable) states in the PGAS model? | |||

* Theory well understood for fail‐stop message‐passing, but not PGAS. | |||

2. How do we discover consistent states once we've defined them? | |||

* Containment domains offer a new approach, beyond conventional sync‐and‐stop algorithms. | |||

3. How do we reconstruct consistent states after a failure? | |||

* Explore low overhead techniques that minimize effort required by applications programmers. | |||

* Leverage BLCR, GASNet, and Berkeley UPC for development, and use Containment Domains as a prototype API for requirements discovery | |||

[[File:DEGAS-Resilience-Research-Area.png|300px]] | |||

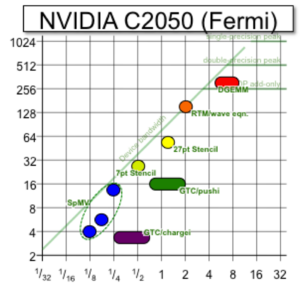

=== Energy and Performance Feedback === | |||

[[File:DEGAS-Nvidia-graph.png|right|300px]] | |||

* '''Goal:''' Monitoring and feedback of performance and energy for online and offline optimization | |||

** Collect and distill: performance/energy/timing data | |||

** Identify and report bottlenecks: through summarization/visualization | |||

** Provide mechanisms: for autonomous runtime adaptation | |||

* '''Novelty:''' Automated runtime introspection | |||

** Provide monitoring: power/network utilization | |||

** Machine Learning: identify common characteristics | |||

** Resource management: including dark silicon | |||

* '''Leverage:''' Performance/energy counters | |||

** Integrated Performance Monitoring (IPM) | |||

** Roofline formalism | |||

** Performance/energy counters | |||

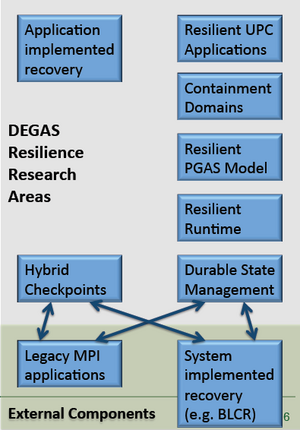

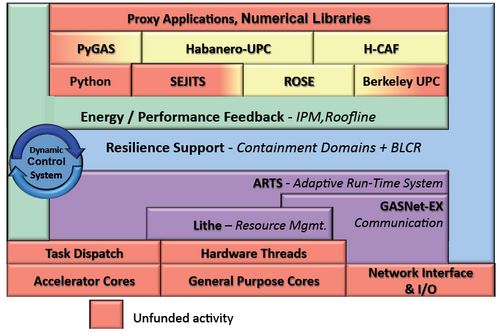

== Software Stack == | == Software Stack == | ||

[[File:DEGAS-Software-Stack.png|500px]] | |||

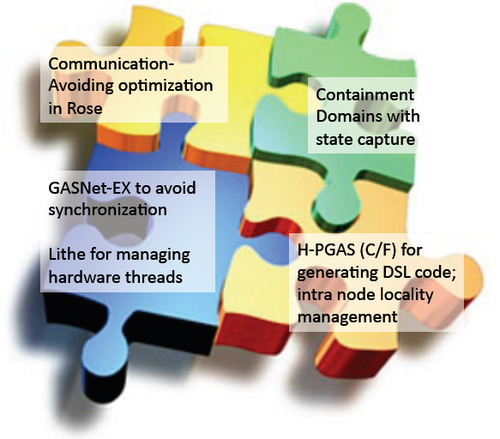

== DEGAS Pieces of the Puzzle == | |||

[[File:DEGAS-Puzzle.png|500px]] | |||

Revision as of 21:56, February 6, 2013

| DEGAS | |

|---|---|

| Team Members | LBNL, Rice U., UC Berkeley, UT Austin, LLNL, NCSU |

| PI | Katherine Yelick (LBNL) |

| Co-PIs | Vivek Sarkar (Rice U.), James Demmel (UC Berkeley), Mattan Erez (UT Austin), Dan Quinlan (LLNL) |

| Website | team website |


Team Members

- Lawrence Berkeley National Laboratory (LBNL)

- Rice University

- University of California, Berkeley

- University of Texas at Austin

- Lawrence Livermore National Laboratory (LLNL)

- North Carolina State University (NCSU)

Mission

Mission Statement: To ensure the broad success of Exascale systems through a unified programming model that is productive, scalable, portable, and interoperable, and meets the unique Exascale demands of energy efficiency and resilience.

Goals & Objectives

- Scalability: Billion‐way concurrency, thousand‐way on chip with new architectures

- Programmability: Convenient programming through a global address space and high‐level abstractions for parallelism, data movement and resilience

- Performance Portability: Ensure applications can be moved across diverse machines using implicit (automatic) compiler optimizations and runtime adaptation

- Resilience: Integrated language support for capturing state and recovering from faults

- Energy Efficiency: Avoid communication, which will dominate energy costs, and adapt to performance heterogeneity due to system-level energy management

- Interoperability: Encourage use of languages and features through incremental adoption

Programming Models

Two Distinct Parallel Programming Questions

- What is the parallel control model?

- What is the model for sharing/communication?

Applications Drive New Programming Models

- Message Passing Programming

  - Divide up domain in pieces

  - Compute one piece and exchange

  - MPI and many libraries

- Global Address Space Programming

  - Each process starts computing

  - Grab whatever/whenever

  - UPC, CAF, X10, Chapel, Fortress, Titanium, GlobalArrays
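The contrast between the two models above can be sketched in a few lines. This is a toy illustration only: plain Python lists stand in for ranks, the `GlobalArray` class and both function names are hypothetical, and no real MPI or UPC calls are involved. Each "rank" sums its own piece plus the edge values of its neighbours, first by explicit exchange and then by reading directly from a shared index space.

```python
# Toy contrast of the two models; plain Python objects stand in for ranks
# and for the global address space (no real MPI or UPC calls are used).

def message_passing_halo(pieces):
    """Each rank owns one piece; neighbours explicitly exchange edge values."""
    n = len(pieces)
    # "Send" phase: every rank posts its edge values to its neighbours' inboxes.
    inbox = [{} for _ in range(n)]
    for rank, piece in enumerate(pieces):
        if rank > 0:
            inbox[rank - 1]["right"] = piece[0]
        if rank < n - 1:
            inbox[rank + 1]["left"] = piece[-1]
    # "Receive" phase: each rank computes using only its piece plus its inbox.
    return [sum(piece) + sum(inbox[rank].values())
            for rank, piece in enumerate(pieces)]


class GlobalArray:
    """Hypothetical global address space: any rank may read any element."""

    def __init__(self, pieces):
        self.pieces = pieces
        self.offsets = [0]
        for piece in pieces:
            self.offsets.append(self.offsets[-1] + len(piece))

    def get(self, index):
        # One-sided read: the owner of the element takes no explicit action.
        for rank, piece in enumerate(self.pieces):
            if index < self.offsets[rank + 1]:
                return piece[index - self.offsets[rank]]
        raise IndexError(index)


def global_address_space_halo(pieces):
    """Each rank starts computing and grabs remote values when it needs them."""
    ga = GlobalArray(pieces)
    results = []
    for rank, piece in enumerate(pieces):
        lo, hi = ga.offsets[rank], ga.offsets[rank + 1]
        total = sum(piece)
        if lo > 0:
            total += ga.get(lo - 1)   # grab the left neighbour's edge
        if hi < ga.offsets[-1]:
            total += ga.get(hi)       # grab the right neighbour's edge
        results.append(total)
    return results


pieces = [[1, 2], [3, 4], [5, 6]]
print(message_passing_halo(pieces))
print(global_address_space_halo(pieces))
```

Both functions produce the same values; the difference is who initiates the data movement. In the first, the owner must take part in every transfer. In the second, the reader fetches what it needs without the owner's involvement.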

Hierarchical Programming Model

- Goal: Programmability of exascale applications while providing scalability, locality, energy efficiency, resilience, and portability

- Implicit constructs: parallel multidimensional loops, global distributed data structures, adaptation for performance heterogeneity

- Explicit constructs: asynchronous tasks, phaser synchronization, locality

- Built on scalability, performance, and asynchrony of PGAS models

- Language experience from UPC, Habanero‐C, Co‐Array Fortran, Titanium

- Both intra and inter‐node; focus is on node model

- Languages demonstrate DEGAS programming model

- Habanero‐UPC: Habanero’s intra‐node model with UPC’s inter‐node model

- Hierarchical Co‐Array Fortran (CAF): CAF for on‐chip scaling and more

- Exploration of high level languages: E.g., Python extended with H‐PGAS

- Language‐independent H‐PGAS Features:

- Hierarchical distributed arrays, asynchronous tasks, and compiler specialization for hybrid (task/loop) parallelism and heterogeneity

- Semantic guarantees for deadlock avoidance, determinism, etc.

- Asynchronous collectives, function shipping, and hierarchical places

- End‐to‐end support for asynchrony (messaging, tasking, bandwidth utilization through concurrency)

- Early concept exploration for applications and benchmarks
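As a rough illustration of the "asynchronous tasks" construct listed above, the sketch below emulates an async/finish-style scope with Python threads. The `Finish` class and its `async_` method are hypothetical names chosen for this example; they are not the Habanero‐C or UPC API, and phaser synchronization and locality control are not modelled.

```python
from concurrent.futures import ThreadPoolExecutor


class Finish:
    """Hypothetical finish scope: leaving the block waits for all spawned tasks."""

    def __init__(self, max_workers=4):
        self._pool = ThreadPoolExecutor(max_workers=max_workers)
        self._futures = []

    def __enter__(self):
        return self

    def async_(self, fn, *args):
        # Spawn a task that runs asynchronously with respect to the caller.
        self._futures.append(self._pool.submit(fn, *args))

    def __exit__(self, exc_type, exc, tb):
        try:
            for future in self._futures:
                future.result()          # re-raises any exception from the task
        finally:
            self._pool.shutdown(wait=True)
        return False


def partial_sum(data, out, slot):
    # Each task writes only its own slot, so no lock is needed.
    out[slot] = sum(data)


chunks = [[1, 2, 3], [4, 5], [6]]
out = [0] * len(chunks)
with Finish() as scope:
    for slot, chunk in enumerate(chunks):
        scope.async_(partial_sum, chunk, out, slot)
print(sum(out))
```

The point of the scope is that the caller states where results are needed, not when each task must run, which leaves the runtime free to schedule the tasks.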

Communication-Avoiding Compilers

- Goal: massive parallelism, deep memory and network hierarchies, plus functional and performance heterogeneity

  - Fine‐grained task and data parallelism: enable performance portability

  - Heterogeneity: guided by functional, energy and performance characteristics

  - Energy efficiency: minimize data movement and provide hooks to runtime adaptation

  - Programmability: manage details of memory, heterogeneity, and containment

  - Scalability: communication and synchronization hiding through asynchrony

- H-PGAS into the Node

  - Communication is all data movement

- Build on code‐generation infrastructure

  - ROSE for H‐CAF and Communication‐Avoidance optimizations

  - BUPC and Habanero‐C; Zoltan

  - Additional theory of CA code generation

Exascale Programming: Support for Future Algorithms

- Approach: “Rethink” algorithms to optimize for data movement

  - New class of communication‐optimal algorithms

  - Most codes are not bandwidth limited, but many should be

- Challenges: How general are these algorithms?

  - Can they be automated and for what types of loops?

  - How much benefit is there in practice?
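To make "optimize for data movement" concrete, the sketch below counts words moved between slow and fast memory for an n-by-n matrix multiply under a deliberately simple two-level memory model. The model, the function names, and the assumption that three b-by-b blocks fit in fast memory are all illustrative choices for this example, not results from the project.

```python
def words_moved_naive(n):
    """Count words moved when every C[i][j] re-reads a full row and column."""
    moved = 0
    for _ in range(n * n):       # one pass per output element
        moved += 2 * n           # row of A and column of B
        moved += 1               # write C[i][j]
    return moved


def words_moved_blocked(n, b):
    """Count words moved when b-by-b blocks are reused from fast memory."""
    assert n % b == 0, "sketch assumes the block size divides n"
    blocks = n // b
    moved = 0
    for _ in range(blocks * blocks):   # one pass per output block
        moved += b * b                 # load the C block
        moved += blocks * 2 * b * b    # stream the matching A and B blocks
        moved += b * b                 # store the C block
    return moved


n = 64
for b in (4, 16):
    ratio = words_moved_naive(n) / words_moved_blocked(n, b)
    print(f"block size {b}: naive moves {ratio:.1f}x more words")
```

In this model the blocked traffic is 2n² + 2n³/b words, so larger blocks (up to what fast memory can hold) reduce data movement roughly in proportion to b while the arithmetic stays the same.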

Adaptive Runtime Systems (ARTS)

- Goal: Adaptive runtime for manycore systems that are hierarchical, heterogeneous and provide asymmetric performance

  - Reactive and proactive control: for utilization and energy efficiency

  - Integrated tasking and communication: for hybrid programming

  - Sharing of hardware threads: required for library interoperability

- Novelty: Scalable control; integrated tasking with communication

  - Adaptation: Runtime annotated with performance history/intentions

  - Performance models: Guide runtime optimizations, specialization

  - Hierarchical: Resource/energy

  - Tunable control: Locality/load balance

- Leverages: Existing runtimes

  - Lithe scheduler composition; Juggle

  - BUPC and Habanero‐C runtimes

Synchronization Avoidance vs Resource Management

- Management of critical resources will be more important:

  - Memory and network bandwidth limited by cost and energy

  - Capacity limited at many levels: network buffers at interfaces and internal network congestion are real and growing problems

- Can runtimes manage these or do users need to help?

  - Adaptation based on history and (user‐supplied) intent?

  - Where will bottlenecks be for a given architecture and application?

Lithe Scheduling Abstraction: "Harts" (Hardware Threads)

Lightweight Communication (GASNet-EX)

- Goal: Maximize bandwidth use with lightweight communication

  - One‐sided communication: to avoid over‐synchronization

  - Active‐Messages: for productivity and portability

  - Interoperability: with MPI and threading layers

- Novelty:

  - Congestion management: for 1‐sided communication with ARTS

  - Hierarchical: communication management for H‐PGAS

  - Resilience: globally consistent states and fine‐grained fault recovery

  - Progress: new models for scalability and interoperability

- Leverage GASNet (redesigned):

  - Major changes for on‐chip interconnects

  - Each network has unique opportunities

Resilience through Containment Domains

- Goal: Provide a resilient runtime for PGAS applications

  - Applications should be able to customize resilience to their needs

  - Resilient runtime that provides easy‐to‐use mechanisms

- Novelty: Single analyzable abstraction for resilience

  - PGAS Resilience consistency model

  - Directed and hierarchical preservation

  - Global or localized recovery

  - Algorithm and system‐specific detection, elision, and recovery

- Leverage: Combined superset of prior approaches

  - Fast checkpoints for large bulk updates

  - Journal for small frequent updates

  - Hierarchical checkpoint‐restart

  - OS‐level save and restore

  - Distributed recovery
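The pairing of "fast checkpoints for large bulk updates" with a "journal for small frequent updates" can be sketched as follows. This is a minimal single-process illustration under assumed semantics; the `ResilientStore` class and its methods are hypothetical and do not reflect the Containment Domains API or any DEGAS runtime interface.

```python
import copy


class ResilientStore:
    """Hypothetical key-value state protected by a checkpoint plus a journal."""

    def __init__(self):
        self.state = {}
        self._checkpoint = {}
        self._journal = []          # updates applied since the last checkpoint

    def update(self, key, value):
        # Small frequent update: log it so it can be replayed after a fault.
        self._journal.append((key, value))
        self.state[key] = value

    def bulk_update(self, values):
        # Large bulk update: apply it, then take a fresh checkpoint and
        # discard the journal entries that the checkpoint supersedes.
        self.state.update(values)
        self.checkpoint()

    def checkpoint(self):
        self._checkpoint = copy.deepcopy(self.state)
        self._journal.clear()

    def recover(self):
        # Restore the checkpoint, then replay journalled updates in order.
        self.state = copy.deepcopy(self._checkpoint)
        for key, value in self._journal:
            self.state[key] = value
        return self.state


store = ResilientStore()
store.bulk_update({"a": 1, "b": 2})
store.update("a", 10)
store.state = {}                    # simulate losing the live state to a fault
print(store.recover())
```

The design choice illustrated here is cost matching: copying the whole state is only worthwhile when much of it has changed, while a journal keeps the cost of protecting a small update proportional to the update itself.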

Resilience: Research Questions

1. How to define consistent (i.e. allowable) states in the PGAS model?

- Theory well understood for fail‐stop message‐passing, but not PGAS.

2. How do we discover consistent states once we've defined them?

- Containment domains offer a new approach, beyond conventional sync‐and‐stop algorithms.

3. How do we reconstruct consistent states after a failure?

- Explore low overhead techniques that minimize effort required by applications programmers.

- Leverage BLCR, GASNet, and Berkeley UPC for development, and use Containment Domains as a prototype API for requirements discovery

Energy and Performance Feedback

- Goal: Monitoring and feedback of performance and energy for online and offline optimization

  - Collect and distill: performance/energy/timing data

  - Identify and report bottlenecks: through summarization/visualization

  - Provide mechanisms: for autonomous runtime adaptation

- Novelty: Automated runtime introspection

  - Provide monitoring: power/network utilization

  - Machine Learning: identify common characteristics

  - Resource management: including dark silicon

- Leverage: Performance/energy counters

  - Integrated Performance Monitoring (IPM)

  - Roofline formalism

  - Performance/energy counters
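The roofline formalism mentioned above bounds attainable performance by the lesser of peak compute rate and memory bandwidth times arithmetic intensity. A minimal sketch follows; the function names and the machine numbers (1000 GFLOP/s peak, 100 GB/s bandwidth) are made up for illustration and do not describe any system in this project.

```python
def roofline_gflops(peak_gflops, bandwidth_gb_s, flops_per_byte):
    """Attainable GFLOP/s: limited by compute or by memory traffic."""
    return min(peak_gflops, bandwidth_gb_s * flops_per_byte)


def ridge_point(peak_gflops, bandwidth_gb_s):
    """Arithmetic intensity (flops/byte) above which a kernel is compute bound."""
    return peak_gflops / bandwidth_gb_s


# Hypothetical machine parameters, chosen only to make the arithmetic easy.
PEAK, BANDWIDTH = 1000.0, 100.0

for intensity in (0.25, 2.0, 20.0):
    bound = roofline_gflops(PEAK, BANDWIDTH, intensity)
    kind = "memory" if intensity < ridge_point(PEAK, BANDWIDTH) else "compute"
    print(f"{intensity:5.2f} flops/byte -> {bound:7.1f} GFLOP/s ({kind} bound)")
```

Counters for flops and bytes moved supply the measured intensity, and the model then indicates whether a bottleneck report should point at data movement or at computation.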

Software Stack