D-TEC

From Modelado Foundation

| D-TEC | |
|---|---|
| Team Members | List of team members |
| PI | Daniel J. Quinlan (LLNL) |
| Co-PIs | Saman Amarasinghe (MIT), Armando Solar‐Lezama (MIT), Adam Chlipala (MIT), Srinivas Devadas (MIT), Una‐May O’Reilly (MIT), Nir Shavit (MIT), Youssef Marzouk (MIT), John Mellor‐Crummey (Rice U.), Vivek Sarkar (Rice U.), Vijay Saraswat (IBM), David Grove (IBM), P. Sadayappan (OSU), Atanas Rountev (OSU), Ras Bodik (UC Berkeley), Craig Rasmussen (U. of Oregon), Phil Colella (LBNL), Scott Baden (UC San Diego) |
| Website | team website |

DSL Technology for Exascale Computing or D-TEC

Domain Specific Languages (DSLs) are a transformational technology that captures expert knowledge about application domains. For the domain scientist, the DSL provides a view of the high‐level programming model. The DSL compiler captures expert knowledge about how to map high‐level abstractions to different architectures. The DSL compiler’s analysis and transformations are complemented by the general compiler analysis and transformations shared by general purpose languages.

- There are different types of DSLs:

  - Embedded DSLs: Have custom compiler support for high level abstractions defined in a host language (abstractions defined via a library, for example)

  - General DSLs (syntax extended): Have their own syntax and grammar; can be full languages, but defined to address a narrowly defined domain

- DSL design is a responsibility shared between application domain and algorithm scientists

- Extraction of abstractions requires significant application and algorithm expertise

- We have an application team to:

  - provide expertise that will ground our DSL research

  - ensure its relevance to DOE and enable impact by the end of three years

Team Members

- Lawrence Livermore National Laboratory (LLNL)

- Massachusetts Institute of Technology (MIT)

- Rice University

- IBM

- Ohio State University (OSU)

- University of California, Berkeley

- University of Oregon

- Lawrence Berkeley National Laboratory

- University of California, San Diego

Goals and Objectives

D‐TEC Goal: Making DSLs Effective for Exascale

- We address all parts of the Exascale Stack:

  - Languages (DSLs): define and build several DSLs economically

  - Compilers: define and demonstrate the analysis and optimizations required to build DSLs

  - Parameterized Abstract Machine: define how the hardware is evaluated to provide inputs to the compiler and runtime

  - Runtime System: define a runtime system and resource management support for DSLs

  - Tools: design and use tools to communicate to specific levels of abstraction in the DSLs

- We will provide effective performance by addressing exascale challenges:

  - Scalability: deeply integrated with the state‐of‐the‐art X10 scaling framework

  - Programmability: build DSLs around high levels of abstraction for specific domains

  - Performance Portability: DSL compilers give greater flexibility to the code generation for diverse architectures

  - Resilience: define compiler and runtime technology to make code resilient

  - Energy Efficiency: machine learning and autotuning will drive energy efficiency

  - Correctness: formal methods technologies required to verify DSL transformations

  - Heterogeneity: demonstrate how to automatically generate lower level multi‐ISA code

- Our approach includes interoperability and a migration strategy:

  - Interoperability with MPI + X: demonstrate embedding of DSLs into MPI + X applications

  - Migration for Existing Code: demonstrate source‐to‐source technology to migrate existing code

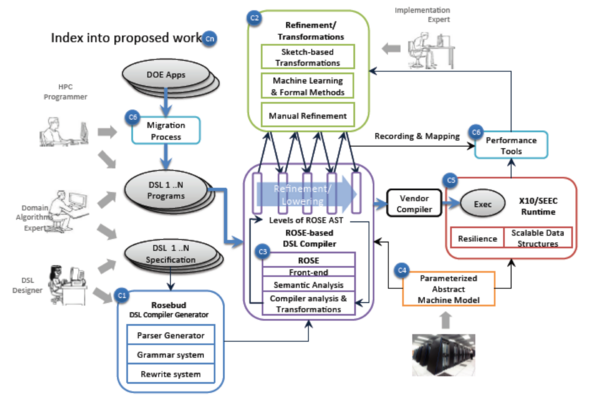

The D‐TEC approach addresses the full Exascale workflow

- Discovery of domain specific abstractions from proxy‐apps by application and algorithm experts

- (C1 & C2) Defining Domain Specific Languages (DSLs)

  - The role of the DSL is to encapsulate expert knowledge about the problem domain

  - The DSL compiler encapsulates how to optimize code for that domain on new architectures

  - Rosebud is used to define DSLs (a novel framework for joint optimization of mixed DSLs)

    - DSL specification is used to generate a "DSL plug‐in" for Rosebud's DSL compiler

    - Supports both embedded and general DSLs and multiple DSLs in one host‐language source file

    - DSL optimization is done via cost‐based search over the space of possible rewritings

    - Costs are domain‐specific, based on shared abstract machine model + ROSE analysis results

    - Cross‐DSL optimization occurs naturally via search of combined rewriting space

  - Sketching is used to define DSLs (cutting‐edge aspect of our proposal)

    - A series of manual refinement steps (code rewrites) defines the transformations

    - Equivalence checking between steps to verify correctness

    - The series of transformations defines the DSL compiler using ROSE

    - Machine learning is used to drive optimizations

  - Both approaches will leverage the common ROSE infrastructure

  - Both approaches will leverage the SEEC enhanced X10 runtime system


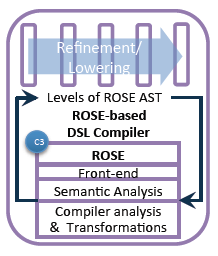

- (C3) DSL Compiler

  - Leverages ROSE compiler throughout

- (C4) Parameterized Abstract Machine

  - Extraction of machine characteristics

- (C5) Runtime System

  - Leverages X10 and extends it with SEEC support

- (C6) Tools

  - We will define source‐to‐source migration tools

  - We will define the mappings between DSL layers to support future tools
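The refinement workflow listed under Sketching (a hand-written rewrite followed by an equivalence check against the previous step) can be illustrated with a small sketch. This is not the project's tooling: it substitutes exhaustive comparison on a bounded input space for solver-based equivalence checking, and the functions `spec_sum_of_squares`, `refined_sum_of_squares`, and `equivalent_on_bounded_inputs` are hypothetical names chosen for the example.

```python
import itertools


def spec_sum_of_squares(xs):
    """Step 0: the straightforward specification."""
    total = 0
    for x in xs:
        total += x * x
    return total


def refined_sum_of_squares(xs):
    """Step 1: a manual rewrite of step 0 (loop unrolled by two)."""
    total = 0
    n = len(xs)
    i = 0
    while i + 1 < n:
        total += xs[i] * xs[i] + xs[i + 1] * xs[i + 1]
        i += 2
    if i < n:  # leftover element when the length is odd
        total += xs[i] * xs[i]
    return total


def equivalent_on_bounded_inputs(f, g, values=(-2, -1, 0, 1, 2), max_len=4):
    """Compare f and g on every list of length <= max_len drawn from values.

    Returns (True, None) if they agree everywhere in that bounded space,
    otherwise (False, counterexample). Agreement here is evidence, not proof.
    """
    for n in range(max_len + 1):
        for xs in itertools.product(values, repeat=n):
            if f(list(xs)) != g(list(xs)):
                return False, list(xs)
    return True, None
```

A refinement step would be accepted only when the check returns `(True, None)`; a returned counterexample points at the input on which the rewrite diverges from the previous step.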

Software Stack

Rosebud

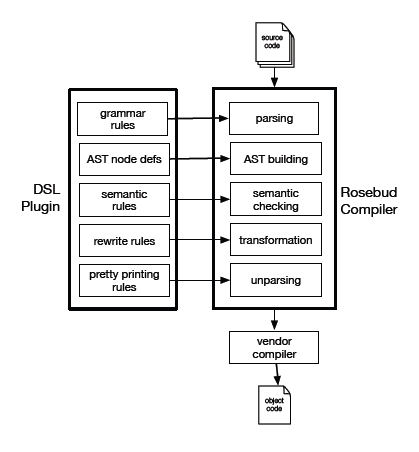

Rosebud Overview

- Unified framework for DSL implementation

  - all aspects: parsing, analysis, optimization, code generation

  - all types: embedded, custom‐syntax, standalone

- Modular development and use of DSLs

  - textual DSL description => plug‐in to ROSE DSL Compiler

  - plug‐ins developed separately from ROSE and each other

- Knowledge‐based optimization of DSL programs

  - plug‐in encapsulates expert optimization knowledge

  - ROSE supplies conventional compiler optimizations

- Flexible code generation

  - DSL lowered to any ROSE host language

  - DSL compiled directly to (portable) machine code via LLVM

Rosebud Implementation

- DSL front end

  - SGLR parser + predefined host‐language grammars

  - attribute grammar + ROSE extensible AST and analysis

- DSL optimizer

  - declarative rewriting system + procedural hooks to ROSE

  - cost‐based heuristic search of implementation space

  - domain‐specific costs based on abstract machine model

  - cross‐DSL optimization arises naturally from joint search space

- DSL code generator

  - ROSE host language unparsers

  - ROSE AST => LLVM SSA code
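The optimizer bullets above (declarative rewrites, cost-based search, costs taken from an abstract machine model) can be sketched in a few lines. Everything here is an assumption made for illustration: expressions are plain tuples such as `("mul", "x", 8)`, the three rewrite rules are invented, the "machine model" is just a dictionary of per-operation costs, and the search is an exhaustive breadth-first enumeration rather than the heuristic search Rosebud describes.

```python
def cost(expr, machine):
    """Sum the per-operation costs of an expression tree."""
    if not isinstance(expr, tuple):
        return 0  # variables and constants are treated as free
    op, a, b = expr
    return machine[op] + cost(a, machine) + cost(b, machine)


def rewrite_root(expr):
    """Yield every expression obtained by applying one rule at the root."""
    if not isinstance(expr, tuple):
        return
    op, a, b = expr
    if op == "mul" and b == 2:
        yield ("add", a, a)                      # x * 2  ->  x + x
    if op == "mul" and isinstance(b, int) and b > 0 and b & (b - 1) == 0:
        yield ("shl", a, b.bit_length() - 1)     # x * 2^k  ->  x << k
    if (op == "add" and isinstance(a, tuple) and isinstance(b, tuple)
            and a[0] == b[0] == "mul" and a[1] == b[1]):
        yield ("mul", a[1], ("add", a[2], b[2]))  # a*b + a*c  ->  a*(b+c)


def rewrites(expr):
    """Yield every expression one rule application away, at any position."""
    yield from rewrite_root(expr)
    if isinstance(expr, tuple):
        op, a, b = expr
        for a2 in rewrites(a):
            yield (op, a2, b)
        for b2 in rewrites(b):
            yield (op, a, b2)


def best_rewriting(expr, machine, max_states=1000):
    """Enumerate the rewriting space breadth-first and return the cheapest form."""
    seen = {expr}
    frontier = [expr]
    while frontier and len(seen) < max_states:
        next_frontier = []
        for current in frontier:
            for candidate in rewrites(current):
                if candidate not in seen:
                    seen.add(candidate)
                    next_frontier.append(candidate)
        frontier = next_frontier
    return min(seen, key=lambda e: cost(e, machine))
```

With a cost table such as `{"mul": 3, "add": 1, "shl": 1}` the search turns `("mul", "x", 8)` into `("shl", "x", 3)`, while a table that makes shifts expensive leaves the multiplication in place. The same rules produce different results once the machine parameters change, which is the point of basing costs on a shared machine model.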

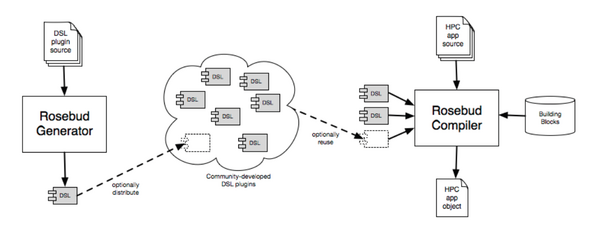

Rosebud Plug-ins

- Plug‐ins developed separately from ROSE and each other

- Plug‐ins distributed in source or object form

- Selected plug‐ins supplied to Rosebud DSL Compiler to compile mixed DSLs in a host language source file

Rosebud DSL Compiler

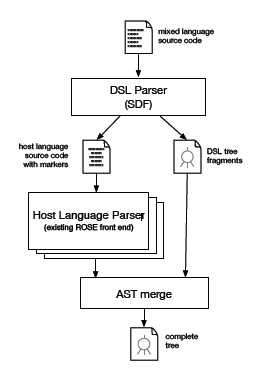

Two‐phase parsing for DSL language support

- Host language + multiple DSLs in the same source file

  - expressive custom notations

  - familiar general‐purpose language

- Phase 1: extract and parse DSLs

  - via Stratego SDF parsing system

- Phase 2: parse host language

  - via existing ROSE front ends

- Merge DSL tree fragments into host language AST

  - DSL plug‐ins provide custom tree nodes and semantic analysis
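The two phases and the merge step can be mimicked with standard-library pieces. This is a toy under stated assumptions, not the Rosebud pipeline: a regular expression stands in for the Stratego SDF parser, Python's `ast` module stands in for a ROSE front end, the `<<name| ... >>` delimiters and the `stencil` mini-language are invented for the example, and `DslNode`, `DSL_PARSERS`, and `compile_mixed` are hypothetical names.

```python
import ast
import re

# Hypothetical embedding syntax: <<dsl_name| fragment text >>
FRAGMENT = re.compile(r"<<(\w+)\|(.*?)>>")


def parse_stencil(text):
    """Toy DSL parser: 'u[-1] + u[1]' -> ('stencil', 'u', [-1, 1])."""
    terms = re.findall(r"(\w+)\[\s*([+-]?\d+)\s*\]", text)
    names = {name for name, _ in terms}
    if len(names) != 1:
        raise SyntaxError("a stencil must reference exactly one array")
    return ("stencil", names.pop(), [int(offset) for _, offset in terms])


# One entry per (hypothetical) DSL plug-in.
DSL_PARSERS = {"stencil": parse_stencil}


class DslNode(ast.expr):
    """Custom tree node that carries a parsed DSL fragment."""
    _fields = ("dsl", "tree")


def phase1_extract(source):
    """Parse each DSL fragment and replace it with a placeholder identifier."""
    fragments = {}

    def stash(match):
        key = f"__dsl_{len(fragments)}__"
        dsl, body = match.group(1), match.group(2)
        fragments[key] = DslNode(dsl=dsl, tree=DSL_PARSERS[dsl](body))
        return key

    return FRAGMENT.sub(stash, source), fragments


class Merge(ast.NodeTransformer):
    """Swap placeholder names in the host tree for the stored DSL nodes."""

    def __init__(self, fragments):
        self.fragments = fragments

    def visit_Name(self, node):
        return self.fragments.get(node.id, node)


def compile_mixed(source):
    host_text, fragments = phase1_extract(source)   # phase 1: DSL fragments
    host_tree = ast.parse(host_text)                # phase 2: host language
    return Merge(fragments).visit(host_tree)        # merge into one tree
```

For the input `y = 0.5 * <<stencil| u[-1] + u[1] >>`, the host parser only ever sees `y = 0.5 * __dsl_0__`, and the merged tree has a `DslNode` as the right operand of the multiplication. Replacing fragments with placeholders is what lets an unmodified host front end be reused.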

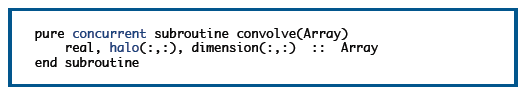

LOPe Programming Model

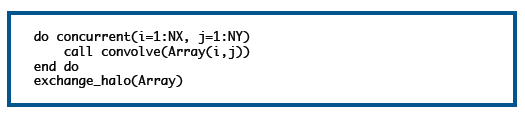

The LOPe programming model is easily expressed in Fortran because of its array syntax

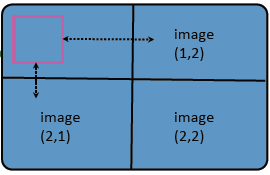

- Halo attribute added to arrays

  - `HALO(1:*:1, 1:*:1)`

  - specifies one border cell on each side of a two‐dimensional array

  - `*` implies "stuff" in the middle

- Halos are logical cells not necessarily physically part of the array

- Halos can be communicated with coarrays

  - `DIMENSION(:,:)[:,:]`

  - halo region in pink

  - logically extends to neighbor processors

  - `exchange_halo(Array)`

The LOPe programming model is easily expressed in Fortran because of its syntax for concurrency

- Concurrent attribute added to procedures

  - restricted semantics for array element access to avoid race conditions

  - copy halo in, write single element out (visible after all threads exit)

- Called from within a DO CONCURRENT loop
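The access rules above (read a copy of the element plus its halo, write one element, results visible only after every invocation has finished) can be shown with a small single-process sketch. It is written in Python rather than LOPe Fortran, fills the halo with a constant instead of exchanging it with neighbors through coarrays, and uses the hypothetical helper names `with_halo`, `average_kernel`, and `apply_concurrent`.

```python
def with_halo(interior, fill=0.0):
    """Return a copy of a 2-D list padded with one halo cell on every side."""
    width = len(interior[0])
    padded = [[fill] * (width + 2)]
    for row in interior:
        padded.append([fill] + list(row) + [fill])
    padded.append([fill] * (width + 2))
    return padded


def average_kernel(window):
    """Per-element kernel: reads a 3x3 window copy, returns a single value."""
    north, south = window[0][1], window[2][1]
    west, east = window[1][0], window[1][2]
    return (north + south + west + east) / 4.0


def apply_concurrent(interior, kernel, fill=0.0):
    """Apply kernel to every element; iterations do not depend on each other.

    Inputs are read from a padded copy and outputs go to a separate buffer,
    so no invocation can observe another invocation's write.
    """
    padded = with_halo(interior, fill)
    rows, cols = len(interior), len(interior[0])
    out = [[0.0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            # Interior cell (i, j) sits at padded[i + 1][j + 1].
            window = [padded[i + di][j:j + 3] for di in range(3)]
            out[i][j] = kernel(window)
    return out
```

Because each invocation reads only its own window copy and writes only its own output cell, the loop nest could run in any order or in parallel, which is the property that makes a mapping to GPU work-items possible.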

Transformation (via ROSE) of a LOPe program to OpenCL allows execution on a GPU

Compiler Research is essential for DSLs (C3)

The DSL compiler captures expert knowledge about how to optimize high‐level abstractions for different architectures. It is complemented by the general analyses and transformations shared with general‐purpose language compilers. Architecture‐specific features are handled through machine learning and/or a parameterized abstract machine model that can be tailored to different machines.

- We will leverage existing technologies:

  - Source‐to‐source technology in ROSE (LLNL and Rice)

  - X10 front‐end for connection to ROSE (IBM)

  - LLVM as low level IR in ROSE (LLNL and Rice)

  - Polyhedral analysis to support optimizations (OSU)

  - Machine learning to drive optimizations (MIT)

  - Correctness checking (MIT and UCB)

- We will develop new technologies:

  - Rosebud DSL specification

  - DSL specific analysis and optimizations

  - Automated DSL compiler generation

  - X10 support in ROSE

  - Define mappings between DSL layers to compiler analysis

  - Refinement using equivalence checking

  - Verification for transformations

- We will advance the state‐of‐the‐art:

  - Use of formal methods for HPC

  - Generation of DSLs for productivity and performance portability

  - Extending/Using polyhedral analysis to drive code generation for heterogeneous architectures

- Exascale challenges:

  - Scalability: code generation for X10/SEEC and Scalable Data Structures, program synthesis

  - Programmability: two approaches to DSL construction, automated equivalence checking

  - Performance Portability: Using parameterized abstract machines, machine learning, auto‐tuning of refinement search spaces

  - Resilience: Compiler‐based software TMR (triple modular redundancy)

  - Energy Efficiency: using machine learning

- Interoperability and Migration Plans:

  - Interoperability: A single compiler IR supports reusing analysis and transformations

  - Migration Plan: Using source‐to‐source technology permits leveraging the vendor’s compiler
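The resilience item in the list above mentions compiler-based software TMR. The underlying idea, running a computation three times and taking a majority vote, can be sketched as follows. A compiler would insert the replication into generated code; this example applies it by hand at function granularity, and `tmr` and `make_flaky` are hypothetical names (the latter exists only to inject a fault for demonstration).

```python
from collections import Counter


def tmr(compute, *args):
    """Run compute three times and return the value at least two runs agree on.

    Results must be hashable. Raises RuntimeError when all three runs differ.
    """
    results = [compute(*args) for _ in range(3)]
    value, votes = Counter(results).most_common(1)[0]
    if votes < 2:
        raise RuntimeError("no two replicas agree")
    return value


def make_flaky(fn, faulty_call):
    """Demo helper: corrupt the result of the call numbered faulty_call."""
    calls = {"n": 0}

    def wrapped(*args):
        calls["n"] += 1
        result = fn(*args)
        return result + 1 if calls["n"] == faulty_call else result

    return wrapped
```

With a single corrupted replica, for example `tmr(make_flaky(lambda a, b: a * b, 2), 6, 7)`, the two correct runs outvote the faulty one. The cost is roughly three times the computation, which is why the choice of what to replicate matters.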