D-TEC


| D-TEC | |
|---|---|
| Team Members | LLNL, MIT, Rice U., IBM, OSU, UC Berkeley, U. of Oregon, LBNL, UC San Diego |
| PI | Dan Quinlan |
| Co-PIs | ??? |
| Website | http://dtec-xstack.org/ |
| Download | http://dtec-xstack.org/?page_id=4 |

DSL Technology for Exascale Computing or D-TEC

Domain Specific Languages (DSLs) are a transformational technology that captures expert knowledge about application domains. For the domain scientist, the DSL provides a view of the high‐level programming model. The DSL compiler captures expert knowledge about how to map high‐level abstractions to different architectures. The DSL compiler’s analysis and transformations are complemented by the general compiler analysis and transformations shared by general purpose languages.

- There are different types of DSLs:

- Embedded DSLs: Have custom compiler support for high level abstractions defined in a host language (abstractions defined via a library, for example)

- General DSLs (syntax extended): Have their own syntax and grammar; can be full languages, but defined to address a narrowly defined domain

- DSL design is a responsibility shared between application domain and algorithm scientists

- Extraction of abstractions requires significant application and algorithm expertise

- We have an application team to:

- provide expertise that will ground our DSL research

- ensure its relevance to DOE and enable impact by the end of three years

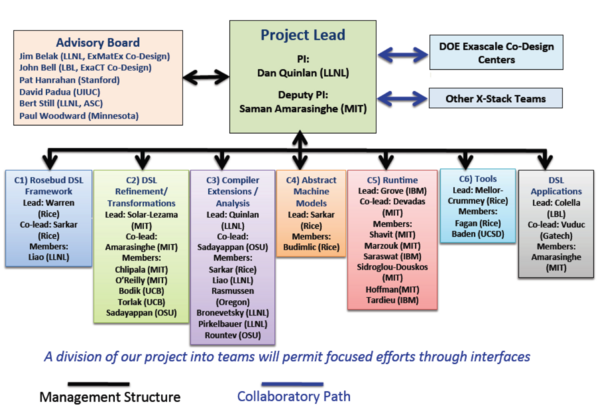

Team Members

- Lawrence Livermore National Laboratory (LLNL): Daniel J. Quinlan (Lead PI)

- Massachusetts Institute of Technology (MIT): Saman Amarasinghe, Armando Solar‐Lezama, Adam Chlipala, Srinivas Devadas, Una‐May O’Reilly, Nir Shavit, Youssef Marzouk

- Rice University: John Mellor‐Crummey, Vivek Sarkar

- IBM: Vijay Saraswat, David Grove

- Ohio State University (OSU): P. Sadayappan, Atanas Rountev

- University of California, Berkeley: Ras Bodik

- University of Oregon: Craig Rasmussen

- Lawrence Berkeley National Laboratory (LBNL): Phil Colella

- University of California, San Diego: Scott Baden

See Media:dtec-contacts.xlsx for team member contact information.

Project Impact

Overview

Goals and Objectives

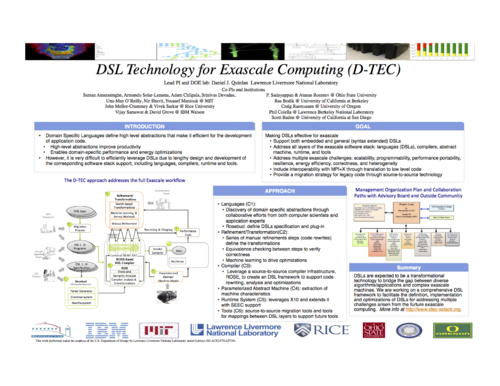

D‐TEC Goal: Making DSLs Effective for Exascale

- We address all parts of the Exascale Stack:

- Languages (DSLs): define and build several DSLs economically

- Compilers: define and demonstrate the analysis and optimizations required to build DSLs

- Parameterized Abstract Machine: define how the hardware is evaluated to provide inputs to the compiler and runtime

- Runtime System: define a runtime system and resource management support for DSLs

- Tools: design and use tools to communicate to specific levels of abstraction in the DSLs

- We will provide effective performance by addressing exascale challenges:

- Scalability: deeply integrated with state‐of‐art X10 scaling framework

- Programmability: build DSLs around high levels of abstraction for specific domains

- Performance Portability: DSL compilers give greater flexibility to the code generation for diverse architectures

- Resilience: define compiler and runtime technology to make code resilient

- Energy Efficiency: machine learning and autotuning will drive energy efficiency

- Correctness: formal methods technologies required to verify DSL transformations

- Heterogeneity: demonstrate how to automatically generate lower level multi‐ISA code

- Our approach includes interoperability and a migration strategy:

- Interoperability with MPI + X: demonstrate embedding of DSLs into MPI + X applications

- Migration for Existing Code: demonstrate source‐to-source technology to migrate existing code

Poster

Quad Chart

Download Media:DTEC-Quad Chart_and_Highlight_v6.pdf.

Two Pager

Download Media:D-TEC_2013_TwoPager_v1.pdf.

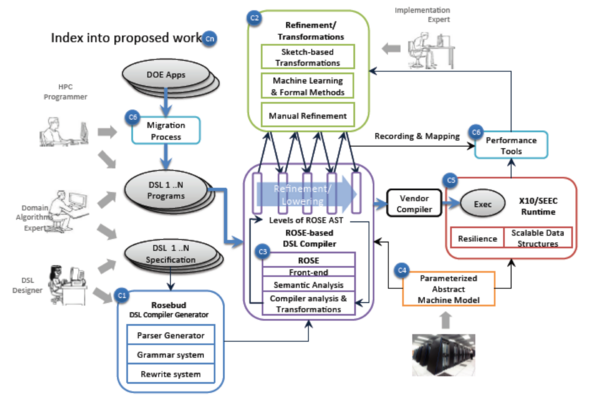

The D‐TEC approach addresses the full Exascale workflow

- Discovery of domain specific abstractions from proxy‐apps by application and algorithm experts

- (C1 & C2) Defining Domain Specific Languages (DSLs)

- The role of the DSL is to encapsulate expert knowledge

- About the problem domain

- The DSL compiler encapsulates how to optimize code for that domain on new architectures

- Rosebud used to define DSLs (a novel framework for joint optimization of mixed DSLs)

- DSL specification is used to generate a “DSL plug‐in” for Rosebud's DSL compiler

- Supports both embedded and general DSLs and multiple DSLs in one host‐language source file

- DSL optimization is done via cost‐based search over the space of possible rewritings

- Costs are domain‐specific, based on shared abstract machine model + ROSE analysis results

- Cross‐DSL optimization occurs naturally via search of combined rewriting space

- Sketching used to define DSLs (cutting‐edge aspect of our proposal)

- A series of manual refinement steps (code rewrites) defines the transformations

- Equivalence checking between steps to verify correctness

- The series of transformations define the DSL compiler using ROSE

- Machine learning is used to drive optimizations

- Both approaches will leverage the common ROSE infrastructure

- Both approaches will leverage the SEEC enhanced X10 runtime system


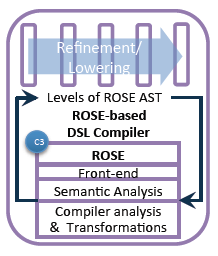

- (C3) DSL Compiler

- Leverages ROSE compiler throughout

- (C4) Parameterized Abstract Machine

- Extraction of machine characteristics

- (C5) Runtime System

- Leverages X10 and extends it with SEEC support

- (C6) Tools

- We will define source-to‐source migration tools

- We will define the mappings between DSL layers to support future tools

Software Stack

Rosebud

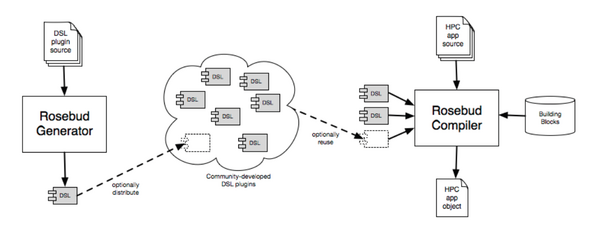

Rosebud Overview

- Unified framework for DSL implementation

- all aspects: parsing, analysis, optimization, code generation

- all types: embedded, custom‐syntax, standalone

- Modular development and use of DSLs

- textual DSL description => plug‐in to ROSE DSL Compiler

- plug‐ins developed separately from ROSE and each other

- Knowledge‐based optimization of DSL programs

- plug‐in encapsulates expert optimization knowledge

- ROSE supplies conventional compiler optimizations

- Flexible code generation

- DSL lowered to any ROSE host language

- DSL compiled directly to (portable) machine code via LLVM

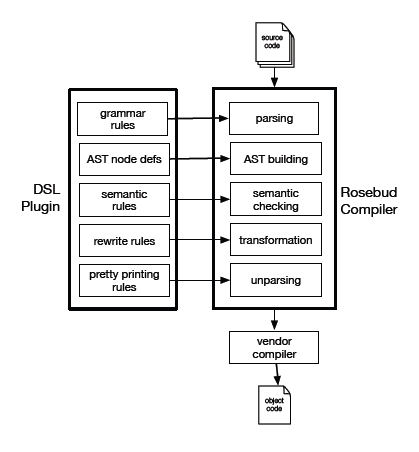

Rosebud Implementation

- DSL front end

- SGLR parser + predefined host‐language grammars

- attribute grammar + ROSE extensible AST and analysis

- DSL optimizer

- declarative rewriting system + procedural hooks to ROSE

- cost‐based heuristic search of implementation space

- domain‐specific costs based on abstract machine model

- cross‐DSL optimization arises naturally from joint search space

- DSL code generator

- ROSE host language unparsers

- ROSE AST => LLVM SSA code
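
The cost‐based search in the DSL optimizer can be pictured as a best-first exploration of rewritten forms of an expression. The sketch below is only an illustration under stated assumptions, not Rosebud's implementation: the tuple expression format, the three rewrite rules, and the `OP_COST` table (standing in for an abstract machine model) are all hypothetical.

```python
import heapq
from itertools import count

# Hypothetical expression form: nested tuples such as ("mul", "x", 2).
# Hypothetical per-operator costs standing in for an abstract machine model.
OP_COST = {"mul": 4, "add": 1, "shl": 1}

def cost(expr):
    """Sum operator costs over the expression tree; leaves are free."""
    if not isinstance(expr, tuple):
        return 0
    return OP_COST[expr[0]] + sum(cost(arg) for arg in expr[1:])

def rewrites(expr):
    """Yield every expression reachable by applying one rule at one position."""
    if not isinstance(expr, tuple):
        return
    op, a, b = expr
    if op == "mul" and b == 2:
        yield ("add", a, a)      # x * 2  ->  x + x
        yield ("shl", a, 1)      # x * 2  ->  x << 1
    if op == "add" and a == b:
        yield ("shl", a, 1)      # x + x  ->  x << 1
    # Apply the rules inside the sub-expressions as well.
    for new_a in rewrites(a):
        yield (op, new_a, b)
    for new_b in rewrites(b):
        yield (op, a, new_b)

def optimize(expr, max_steps=1000):
    """Best-first search over the rewriting space; returns the cheapest form seen."""
    tie = count()  # tie-breaker so the heap never has to compare expressions
    frontier = [(cost(expr), next(tie), expr)]
    seen = {expr}
    best = expr
    while frontier and max_steps > 0:
        max_steps -= 1
        c, _, current = heapq.heappop(frontier)
        if c < cost(best):
            best = current
        for nxt in rewrites(current):
            if nxt not in seen:
                seen.add(nxt)
                heapq.heappush(frontier, (cost(nxt), next(tie), nxt))
    return best
```

If rules from several DSLs were added to the same `rewrites` function, they would be explored in one joint search space, which is the sense in which cross‐DSL optimization can arise without special handling.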

Rosebud Plug-ins

- Plug‐ins developed separately from ROSE and each other

- Plug‐ins distributed in source or object form

- Selected plug‐ins supplied to Rosebud DSL Compiler to compile mixed DSLs in a host language source file

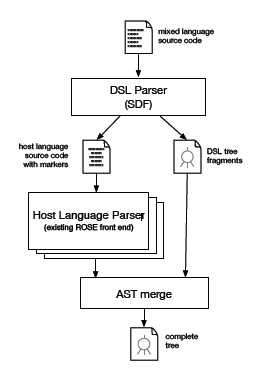

Rosebud DSL Compiler

Two‐phase parsing for DSL language support

- Host language + multiple DSLs in the same source file

- expressive custom notations

- familiar general-purpose language

- Phase 1: extract and parse DSLs

- via Stratego SDF parsing system

- Phase 2: parse host language

- via existing ROSE front ends

- Merge DSL tree fragments into host language AST

- DSL plug-ins provide custom tree nodes and semantic analysis
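
The two phases and the merge step can be sketched in a few lines. This is a toy illustration only: a regular expression and Python's `ast` module stand in for the Stratego SDF parser and the ROSE front ends, and the `<<name: body>>` embedding syntax, the placeholder names, and the `dsl_tree` attribute are hypothetical.

```python
import ast
import re

# Hypothetical embedding syntax: a DSL fragment is written as <<name: body>>.
FRAGMENT = re.compile(r"<<(\w+):(.*?)>>")

def parse_dsl(name, body):
    """Stand-in for a plug-in parser: returns a small custom tree node."""
    return {"dsl": name, "tokens": body.split()}

def phase1_extract(source):
    """Phase 1: pull out and parse DSL fragments, leaving placeholders behind."""
    fragments = {}

    def replace(match):
        key = f"__dsl_{len(fragments)}__"
        fragments[key] = parse_dsl(match.group(1), match.group(2))
        return key

    host_only = FRAGMENT.sub(replace, source)
    return host_only, fragments

def phase2_parse_host(host_only):
    """Phase 2: parse what remains with the unmodified host-language parser."""
    return ast.parse(host_only)

def merge(host_tree, fragments):
    """Attach each DSL subtree to its placeholder node in the host AST."""
    merged = []
    for node in ast.walk(host_tree):
        if isinstance(node, ast.Name) and node.id in fragments:
            node.dsl_tree = fragments[node.id]  # custom attribute on the host node
            merged.append(node)
    return merged
```

For example, `phase1_extract("y = <<stencil: u[i-1] + u[i+1]>> * 0.5\n")` leaves a host line that the ordinary parser accepts, and `merge` then hangs the stencil fragment on the placeholder identifier.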

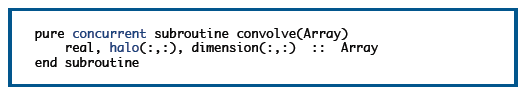

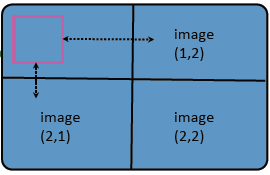

LOPe Programming Model

LOPe programming model is easily expressed in Fortran because of syntax for arrays

- Halo attribute added to arrays

- HALO(1:*:1, 1:*:1)

- specifies one border cell on each side of a two‐dimensional array

- * denotes the array interior between the border cells

- Halos are logical cells not necessarily physically part of the array

- Halos can be communicated with coarrays

- DIMENSION(:,:)[:,:]

- halo region in pink

- logically extends to neighbor processors

- exchange_halo(Array)

LOPe programming model is easily expressed in Fortran because of syntax for concurrency

- Concurrent attribute added to procedures

- restricted semantics for array element access to avoid race conditions

- copy halo in, write single element out (visible after all threads exit)

- Called from within a DO CONCURRENT loop

Transformation (via ROSE) of a LOPe program to OpenCL allows execution on a GPU
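
The access rule for concurrent procedures (copy the halo in, write a single element out) can be illustrated outside Fortran. The sketch below is a Python analogue, not LOPe syntax: the function names are hypothetical, the halo is filled with a constant instead of being exchanged with neighbor processors, and a list comprehension stands in for the DO CONCURRENT loop.

```python
def with_halo(interior, fill=0.0):
    """Surround a 2-D list with a one-cell border, like HALO(1:*:1, 1:*:1)."""
    width = len(interior[0]) + 2
    rows = [[fill] * width]
    rows += [[fill] + list(row) + [fill] for row in interior]
    rows.append([fill] * width)
    return rows

def average_kernel(padded, i, j):
    """Reads the 5-point neighborhood (halo included) and returns one value."""
    return (padded[i][j] + padded[i - 1][j] + padded[i + 1][j]
            + padded[i][j - 1] + padded[i][j + 1]) / 5.0

def apply_concurrent(interior, kernel):
    """Apply `kernel` to every interior element."""
    padded = with_halo(interior)
    n_rows, n_cols = len(interior), len(interior[0])
    # All reads come from `padded` and all writes go to a fresh array, so the
    # order in which elements are processed cannot change the result.
    return [[kernel(padded, i + 1, j + 1) for j in range(n_cols)]
            for i in range(n_rows)]
```

Because each call writes only its own output element and the results become visible only after the whole sweep, the iterations are independent, which is what makes a mapping to GPU threads plausible.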

Compiler Research is essential for DSLs (C3)

The DSL compiler captures expert knowledge about how to optimize high‐level abstractions for different architectures and is complemented by general compiler analyses and transformations such as those shared by general purpose language compilers. Architecture‐specific features are reasoned about through machine learning and/or a parameterized abstract machine model that can be tailored to different machines.

- We will leverage existing technologies:

- Source‐to‐source technology in ROSE (LLNL and Rice)

- X10 front‐end for connection to ROSE (IBM)

- LLVM as low level IR in ROSE (LLNL and Rice)

- Polyhedral analysis to support optimizations (OSU)

- Machine learning to drive optimizations (MIT)

- Correctness checking (MIT and UCB)

- We will develop new technologies:

- Rosebud DSL specification

- DSL specific analysis and optimizations

- Automated DSL compiler generation

- X10 support in ROSE

- Define mappings between DSL layers to compiler analysis

- Refinement using equivalence checking

- Verification for transformations

- We will advance the state‐of‐the‐art:

- Formal methods use for HPC

- Generation of DSLs for productivity and performance portability

- Extending/Using polyhedral analysis to drive code generation for heterogeneous architectures

- Exascale challenges:

- Scalability: code generation for X10/SEEC and Scalable Data Structures, program synthesis

- Programmability: two approaches to DSL construction, automated equivalence checking

- Performance Portability: Using parameterized abstract machines, machine learning, auto‐tuning of refinement search spaces

- Resilience: Compiler‐based software TMR

- Energy Efficiency: using machine learning

- Interoperability and Migration Plans:

- Interoperability: A single compiler IR supports reusing analysis and transformations

- Migration Plan: Using source‐to‐source technology permits leveraging the vendor’s compiler
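
TMR in the resilience item above refers to triple modular redundancy: a computation is replicated three times and the majority result is kept. The sketch below shows the idea at function granularity; it is an illustration under assumptions, not the project's compiler transformation, and the `tmr` helper is hypothetical.

```python
from collections import Counter

def tmr(compute, *args):
    """Run `compute` three times and return the result at least two runs agree on."""
    results = [compute(*args) for _ in range(3)]
    value, votes = Counter(results).most_common(1)[0]
    if votes < 2:
        raise RuntimeError("no two replicas agree")
    return value

def make_flaky_square(fail_on_call):
    """Test helper: a squaring function that returns garbage on one chosen call."""
    calls = {"n": 0}

    def square(x):
        calls["n"] += 1
        return -1 if calls["n"] == fail_on_call else x * x

    return square
```

A compiler‐based variant would insert the replication and the vote automatically; here `tmr(make_flaky_square(2), 7)` masks the single corrupted replica. Results must be hashable for the vote.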

Preliminary Experimental Results (Habanero Hierarchical Place Tree)

- Actual hardware: four quad-core Xeon sockets; each socket contains two core-pairs; each core-pair shares an L2 cache

- Possible abstract machine models:

- Use Habanero Hierarchical Place Tree (HPT) abstraction for these results

- Experiment with three HPT abstractions of same hardware:

- 1x16 --- one root place with 16 leaf places (this model focuses on L1 cache locality)

- 8x2 --- 8 non-leaf places, each of which has 2 leaf places (this model focuses on the L2 cache shared by a core-pair)

- 16x1 --- like 1x16, except that it ignores the root place

- Preliminary execution times for SOR2D (size C) on above hardware underscore the importance of selecting the right abstraction for a given application-platform combination

- 1x16 --- 1.14 seconds

- 8x2 --- 0.61 seconds

- 16x1 --- 1.90 seconds
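
The relative cost of choosing the wrong abstraction follows directly from the three timings above. The short calculation below uses only those numbers; the variable names are illustrative.

```python
# Execution times in seconds for SOR2D (size C), taken from the list above.
times = {"1x16": 1.14, "8x2": 0.61, "16x1": 1.90}

best = min(times, key=times.get)

# How many times slower each abstraction is than the best one.
slowdown = {name: t / times[best] for name, t in times.items()}

for name, factor in sorted(slowdown.items(), key=lambda item: item[1]):
    print(f"{name}: {factor:.2f}x the time of {best}")
```

On this hardware the 8x2 model, which matches the shared L2 cache, is roughly 1.9 times faster than 1x16 and roughly 3.1 times faster than 16x1.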

X10

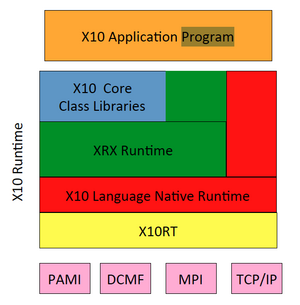

Current X10 Runtime Software Stack

- Core Class Libraries

- Fundamental classes & primitives, Arrays, core I/O, collections, etc

- Written in X10; compiled to C++ or Java

- XRX (X10 Runtime in X10)

- APGAS functionality

- Concurrency: async/finish

- Distribution: Places/at

- Written in X10; compiled to C++ or Java


- X10 Language Native Runtime

- Runtime support for core sequential X10 language features

- Two versions: C++ and Java

- X10RT

- Active messages, collectives, bulk data transfer

- Implemented in C

- Abstracts/unifies network layers (PAMI, DCMF, MPI, etc) to enable X10 on a range of transports

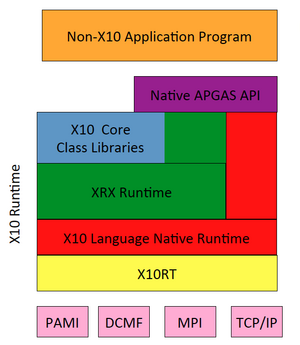

Leveraging X10 Runtime for Native Applications

Native APGAS API

- Provides C++/C APIs to APGAS functionality of X10 Runtime

- Concurrency: async/finish

- Distribution: Places/at

- Additionally exposes subset of X10RT APIs for use by native applications

- Collective operations

- One‐sided active messages

- Allows non‐X10 applications to leverage X10 runtime facilities via a library interface
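
The async/finish pattern exposed by the runtime can be illustrated with a small Python analogue. This is not the Native APGAS API: `FinishScope`, `finish`, and `async_` are hypothetical names, a thread pool stands in for the X10 scheduler, places are not modeled, and tasks spawned from inside other tasks are not tracked as a real finish would track them.

```python
from concurrent.futures import ThreadPoolExecutor
from contextlib import contextmanager

class FinishScope:
    """Collects the tasks spawned inside one finish block."""

    def __init__(self, pool):
        self._pool = pool
        self._futures = []

    def async_(self, fn, *args):
        """Spawn a task; the call returns immediately."""
        self._futures.append(self._pool.submit(fn, *args))

    def wait(self):
        for future in self._futures:
            future.result()  # re-raises any exception raised by the task

@contextmanager
def finish(pool):
    """Block at the end of the `with` body until all spawned tasks are done."""
    scope = FinishScope(pool)
    yield scope
    scope.wait()

def parallel_squares(values):
    out = [None] * len(values)

    def work(i):
        out[i] = values[i] ** 2

    with ThreadPoolExecutor(max_workers=4) as pool:
        with finish(pool) as scope:
            for i in range(len(values)):
                scope.async_(work, i)
        # Every task spawned inside the finish block has completed here.
    return out
```

The point of the pairing is that spawning is cheap and unordered while termination is detected at a single, lexically visible place.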

Scalability of X10 Runtime

- Scalability

- X10 programs have achieved good scaling at > 32k cores on P7IH (PERCS) and up to 8k cores on BlueGene/P

- Support for Intra‐node scalability

- async/finish enable high‐level programming of fine‐grained concurrency

- Advanced features (clocks, collecting finish) support determinate programming of common concurrency idioms

- Workstealing implementation: both Fork/Join & Cilk‐style

- APGAS programming model extended to GPUs

- X10 kernels can be compiled to CUDA

- compiler‐mediated data/control transfer between CPU/GPU

- Support for Inter‐node scalability

- Places/at; collectives; one‐sided active messages; asynchronous bulk data transfer APIs

- Utilizes available transports (PAMI, DCMF, MPI)

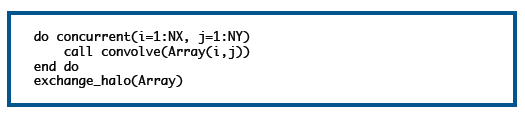

SEEC Runtime

- Understands high‐level goals

- E.g., performance, accuracy, power

- Makes observations

- Is app on current machine meeting goals?

- Understands actions

- Provided by opt. management, machine, uncertainty quantification

- Makes decisions about how to take action given goals and current observations

- Uses control theory, machine learning, and possibly game theory
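
The observe, decide, act cycle described above can be sketched as a simple feedback loop. The code is a deliberately naive threshold rule under stated assumptions, not SEEC's control-theoretic or learning-based decision engine; `run_controller`, the `settings` list, and the 1.5x slack threshold are hypothetical.

```python
def run_controller(goal, observe, settings, steps=10):
    """Step through resource settings until the observed rate meets the goal.

    goal:     target performance (for example, iterations per second)
    observe:  function mapping a setting to the measured performance
    settings: available actions, ordered from cheapest to most expensive
    """
    level = 0
    trace = []
    for _ in range(steps):
        measured = observe(settings[level])           # make an observation
        trace.append((settings[level], measured))
        if measured < goal and level + 1 < len(settings):
            level += 1                                # decide: spend more resources
        elif measured > 1.5 * goal and level > 0:     # hypothetical slack threshold
            level -= 1                                # decide: save power
    return settings[level], trace
```

With a core count as the action and a linear performance model, `run_controller(35, lambda cores: 10.0 * cores, [1, 2, 4, 8])` settles on 4 cores, the cheapest setting that meets the goal.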

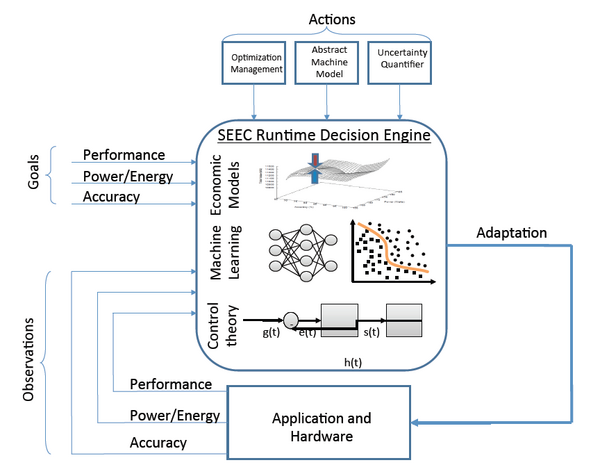

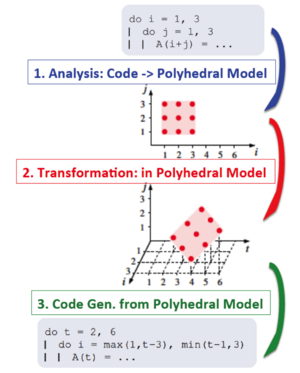

Polyhedral Compiler Transformations

- Advantages over standard AST‐based compiler frameworks

- Seamless support of imperfectly nested loops

- Handle symbolic loop bounds

- Powerful uniform model for composition of transformations

- Model‐driven optimization using the power of integer linear programming

- Work planned on D‐TEC project

- Leverage/integrate DSL properties in the optimization process

- Expose API for analysis and semantics‐preserving transformations of programs

- Multi‐target code generation using domain semantics and architecture characteristics

- Communication optimization using high‐level semantic information

- Address challenges in applying polyhedral transformations to complex DOE application codes
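
One ingredient of composing transformations in a uniform model is a mechanical legality test. The sketch below covers only the simplest case, constant dependence distance vectors and integer schedule matrices, where a transformation is legal if every transformed distance vector stays lexicographically positive. The full polyhedral model handles affine dependences and uses integer linear programming, which this sketch does not attempt; all function names are illustrative.

```python
def apply(schedule, vec):
    """Multiply an integer schedule matrix by a dependence distance vector."""
    return tuple(sum(r * v for r, v in zip(row, vec)) for row in schedule)

def lex_positive(vec):
    """True if the first non-zero component is positive."""
    for v in vec:
        if v != 0:
            return v > 0
    return False

def is_legal(schedule, dependences):
    """A schedule is legal if it keeps every dependence pointing forward in time."""
    return all(lex_positive(apply(schedule, d)) for d in dependences)

# Loop nest: A[i][j] = A[i-1][j] + A[i][j-1]  ->  distances (1, 0) and (0, 1).
STENCIL_DEPS = [(1, 0), (0, 1)]

INTERCHANGE = [[0, 1], [1, 0]]      # swap the i and j loops
REVERSE_INNER = [[1, 0], [0, -1]]   # run the j loop backwards
SKEW = [[1, 0], [1, 1]]             # new j = i + j
```

For the stencil dependences, interchange and skewing pass the test while reversing the inner loop fails. For a single dependence `(1, -1)`, interchange fails but skewing passes, and composing transformations amounts to multiplying their matrices before testing.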

Multi‐target Domain‐specialized Code Generation

MIT Sketch

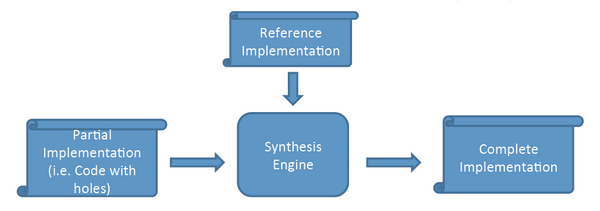

MIT Sketch: how does it work

- Synthesis engine works by elimination

- Partial implementation defines space of possible solutions

- Classes of incorrect solutions are eliminated by analyzing why particular incorrect solutions failed
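
Synthesis by elimination can be shown on a toy problem: a partial implementation with one hole, a reference specification, and a loop that uses each failing input to rule out further candidates. The sketch is an illustration of the counterexample-guided idea only; plain enumeration stands in for the constraint solver a real synthesis engine would use, and `spec`, `sketch`, and `synthesize` are hypothetical names.

```python
def spec(x):
    """Reference behavior the synthesized code must match."""
    return x * 8

def sketch(x, hole):
    """Partial implementation: the shift amount is left as a hole."""
    return x << hole

def synthesize(candidates, inputs):
    """Return (hole value, counterexamples collected) or (None, counterexamples)."""
    counterexamples = []
    for hole in candidates:
        # Elimination: skip candidates already refuted by a known counterexample.
        if any(sketch(x, hole) != spec(x) for x in counterexamples):
            continue
        # Verification: look for an input on which this candidate fails.
        failing = next((x for x in inputs if sketch(x, hole) != spec(x)), None)
        if failing is None:
            return hole, counterexamples
        counterexamples.append(failing)
    return None, counterexamples
```

Here `synthesize(range(8), range(16))` tries hole 0, learns that input 1 exposes the error, and that single counterexample is enough to discard holes 1 and 2 without running verification on them.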

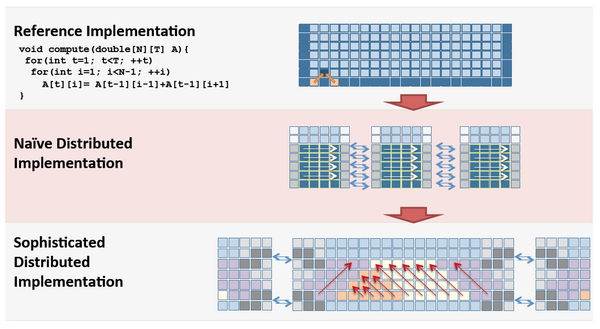

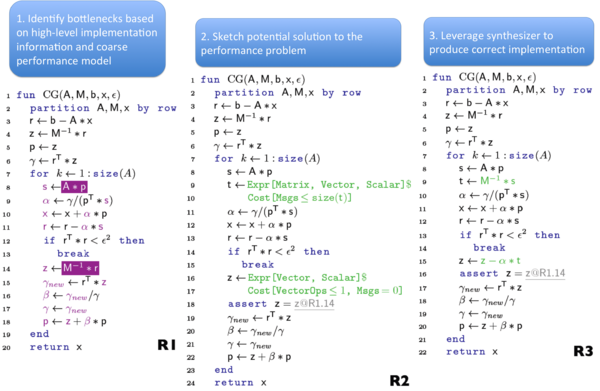

Sketching Enhanced Refinement: Low‐Level

- Synthesis simplifies manual refinement

- Sophisticated implementation is simple if we can elide low‐level details

- Automated equivalence checking helps avoid bugs in the refinement process

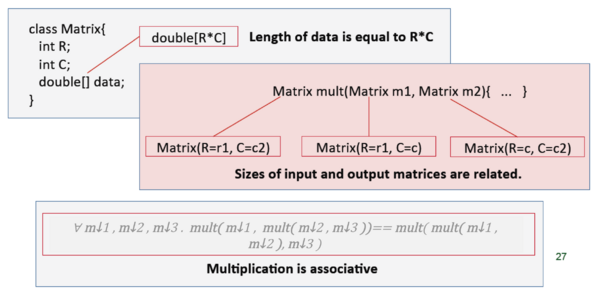

Sketching Enhanced Refinement: High‐Level

The role of constraints on types

- Constraints can appear on classes or functions

- Constraints allow locality of reasoning and simplify synthesis

- Examples:

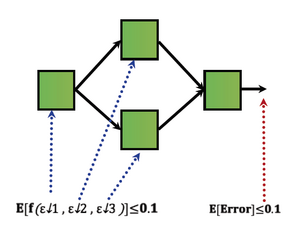

Fault Tolerance

- User defines bound on expected error

- UQ to determine how faults contribute to total error

- Represent total error as a function of errors caused by transient faults (in individual tasks)

- Total error is a function of errors introduced in each faulty task

- Errors due to faults modeled as random noise

- Each random quantity ε_i captures the influence of transient faults on task i
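
A Monte Carlo estimate shows how a user-defined error bound could be checked against such a noise model. The sketch rests on assumptions the text does not state: the total error is taken to be the plain sum of the per-task ε_i, faults are independent with a fixed probability, and the noise is Gaussian. All names and parameters are hypothetical.

```python
import random

def total_error(n_tasks, fault_prob, sigma, rng):
    """Assumed additive model: sum Gaussian noise over the tasks that fault."""
    return sum(rng.gauss(0.0, sigma) for _ in range(n_tasks)
               if rng.random() < fault_prob)

def violation_rate(bound, n_tasks, fault_prob, sigma, trials=2000, seed=0):
    """Estimate how often the absolute total error exceeds the user's bound."""
    rng = random.Random(seed)  # fixed seed keeps the estimate reproducible
    exceed = sum(abs(total_error(n_tasks, fault_prob, sigma, rng)) > bound
                 for _ in range(trials))
    return exceed / trials
```

If the estimated rate for a given fault probability is above what the user accepts, that is a signal that some tasks need protection, for example by replication.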

Tools for Legacy Code Modernization

- Incrementally add DSL constructs to legacy codes

- Replace performance‐critical sections by DSLs

- Our “mixed‐DSLs + host language” architecture supports this

- Manual addition of DSL constructs is low risk

- Semi‐automatic addition of DSL constructs is promising

- Recognize opportunities for DSL constructs using same pattern‐matching as in rewriting system

- Human could direct, assist, verify, or veto

- Fully automatic rewriting of fragments to DSL constructs may be possible

- Benefits

- Higher performance using aggressive DSL optimization

- Performance portability without a complete rewrite

Tools for Understanding DSL Performance

- Challenges

- Huge semantic gap between embedded DSL and generated code

- Code generation for DSLs is opaque, debugging is hard, and fine‐grain performance attribution is unavailable

- Goal: Bridge semantic gap for debugging and performance tuning

- Approach

- Record information during program compilation

- two‐way mappings between every token in source and generated code

- transformation options, domain knowledge, cost models, and choices

- Monitor and attribute execution characteristics with instrumentation and sampling

- e.g., parallelism, resource consumption, contention, failure, scalability

- Map performance back to source, transformations, and domain knowledge

- Compensate for approximate cost models with empirical autotuning


- Technologies to be developed

- Strategies for maintaining mappings without overly burdening DSL implementers

- Strategies for tracking transformations, knowledge, and costs through compilation

- Techniques for exploring and explaining the roles of transformations and knowledge

- Algorithms for refining cost estimates with observed costs to support autotuning
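
The two-way mapping and the attribution step can be sketched with a pair of dictionaries. This is a minimal illustration, not the planned tooling: it maps lines instead of individual tokens, and `SourceMap`, `record`, and `attribute` are hypothetical names.

```python
from collections import defaultdict

class SourceMap:
    """Two-way mapping between DSL source lines and generated-code lines."""

    def __init__(self):
        self.gen_to_src = {}
        self.src_to_gen = defaultdict(list)

    def record(self, src_line, gen_line):
        """Called by the compiler whenever it emits a generated line."""
        self.gen_to_src[gen_line] = src_line
        self.src_to_gen[src_line].append(gen_line)

def attribute(source_map, samples):
    """Aggregate per-generated-line sample counts back onto DSL source lines."""
    totals = defaultdict(int)
    for gen_line, n in samples.items():
        src = source_map.gen_to_src.get(gen_line, "<unmapped>")
        totals[src] += n
    return dict(totals)
```

A profiler that reports samples against generated lines 10, 11, and 12 can then present them against the one or two DSL lines that produced them, with anything the compiler failed to record collected under `<unmapped>`.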

Migrating Existing Codes

Benefits of custom source‐to‐source translation

- Automatically restructure conventional code using a custom source‐to‐source translator ...

- ... that captures semantic knowledge of the application domain ... thereby improving performance

- Embedded Domain Specific Languages

- Automatically tolerate communication delays

- Squeeze out library overheads

- Library primitives → primitive language objects

Management Plan and Collaboration Paths with Advisory Board and Outside Community

D-TEC Products and Publications

Product List

- D-TEC compiler and verification research work, X10-ROSE support, Embedded DSL compilers, and ROSE connection to OpenTuner: http://www.rosecompiler.org/

- The Open Fortran Project’s (OFP) parser: https://github.com/OpenFortranProject/ofp-sdf .

- Rosebud: https://svn.rice.edu/r/rosebud .

- Halide: http://halide-lang.org

- OpenTuner: http://opentuner.org/

- The X10 toolchain, X10/APGAS runtime system, and the X10 proxy applications: http://x10-lang.org/

- Bamboo: http://cseweb.ucsd.edu/groups/hpcl/scg/BambooWebsite/

- PolyOpt is a ROSE plug-in distributed with ROSE and also available at: http://hpcrl.cse.ohio-state.edu/wiki/index.php/Polyhedral_Compilation

- Simit: http://groups.csail.mit.edu/commit/ .

- Sketch: http://people.csail.mit.edu/asolar/.

- Cloverleaf auto-converted using synthesis: http://people.csail.mit.edu/asolar/.

- Lulesh built using synthesis: http://people.csail.mit.edu/asolar/.

- MSL, the distributed synthesis language: http://people.csail.mit.edu/asolar/.

- Rely: http://people.csail.mit.edu/mcarbin/.

- Rosette, a Racket-based language for hosting solver-aided DSLs: http://homes.cs.washington.edu/~emina/rosette/.

- Chlorophyll: synthesis-aided DSL and compiler for spatial parallel architectures: http://pl.eecs.berkeley.edu/projects/chlorophyll/.

Publication List

- Markus Schordan, Pei-Hung Lin, Dan Quinlan, and Louis-Noël Pouchet. Verification of polyhedral optimizations with constant loop bounds in finite state space computations. In Tiziana Margaria and Bernhard Steffen, editors, Leveraging Applications of Formal Methods, Verification and Validation. Specialized Techniques and Applications, volume 8803 of Lecture Notes in Computer Science, pages 493–508. Springer Berlin Heidelberg, 2014.

- Chunhua Liao, Daniel J. Quinlan, Thomas Panas, and Bronis R. de Supinski. A ROSE-based OpenMP 3.0 research compiler supporting multiple runtime libraries. In Mitsuhisa Sato, Toshihiro Hanawa, Matthias S. Müller, Barbara M. Chapman, and Bronis R. de Supinski, editors, IWOMP, volume 6132 of Lecture Notes in Computer Science, pages 15–28. Springer, 2010.

- Chunhua Liao, Yonghong Yan, Bronis R de Supinski, Daniel J Quinlan, and Barbara Chapman. Early experiences with the OpenMP accelerator model. In OpenMP in the Era of Low Power Devices and Accelerators, pages 84–98. Springer, 2013.

- Dan Quinlan and Chunhua Liao. The ROSE source-to-source compiler infrastructure. In Cetus Users and Compiler Infrastructure Workshop, Galveston Island, TX, USA, October 2011.

- Yonghong Yan, Pei-Hung Lin, Chunhua Liao, Bronis R. de Supinski, and Daniel J. Quinlan. Supporting multiple accelerators in high-level programming models. In Proceedings of the Sixth International Workshop on Programming Models and Applications for Multicores and Manycores, PMAM '15, pages 170–180, New York, NY, USA, 2015. ACM.

- Pei-Hung Lin, Chunhua Liao, Daniel J. Quinlan, and Stephen Guzik. Experiences of using the OpenMP accelerator model to port DOE stencil applications, 2014. Poster presented at the Workshop on accelerator programming using directives, Nov. 17, 2014, New Orleans, LA.

- Markus Schordan, Pei-Hung Lin, Dan Quinlan, and Louis-Noël Pouchet. Verification of parallel polyhedral transformations with arbitrary constant loop bounds, 2015. In review process of EuroPar2015.

- Jonathan Ragan-Kelley. Decoupling Algorithms from the Organization of Computation for High Performance Image Processing. Ph.D. thesis, Massachusetts Institute of Technology, Cambridge, MA, June 2014.

- Jason Ansel. Autotuning Programs with Algorithmic Choice. Ph.D. thesis, Massachusetts Institute of Technology, Cambridge, MA, February 2014.

- Jeffrey Bosboom. StreamJIT: A commensal compiler for high-performance stream programming. S.M. thesis, Massachusetts Institute of Technology, Cambridge, MA, June 2014.

- Eric Wong. Optimizations in stream programming for multimedia applications. M.Eng. thesis, Massachusetts Institute of Technology, Cambridge, MA, Aug 2012.

- Phumpong Watanaprakornkul. Distributed data as a choice in PetaBricks. M.Eng. thesis, Massachusetts Institute of Technology, Cambridge, MA, Jun 2012.

- Charith Mendis, Jeffrey Bosboom, Kevin Wu, Shoaib Kamil, Jonathan Ragan-Kelley, Sylvain Paris, Qin Zhao, and Saman Amarasinghe. Helium: Lifting high-performance stencil kernels from stripped x86 binaries to halide dsl code. In ACM SIGPLAN Conference on Programming Language Design and Implementation, June 2015.

- Jason Ansel, Shoaib Kamil, Kalyan Veeramachaneni, Jonathan Ragan-Kelley, Jeffrey Bosboom, Una-May O'Reilly, and Saman Amarasinghe. Opentuner: An extensible framework for program autotuning. In International Conference on Parallel Architectures and Compilation Techniques, Edmonton, Canada, August 2014.

- Jeffrey Bosboom, Sumanaruban Rajadurai, Weng-Fai Wong, and Saman Amarasinghe. Streamjit: A commensal compiler for high-performance stream programming. In ACM SIGPLAN Conference on Object-Oriented Programming Systems and Applications, Portland, OR, October 2014.

- Jonathan Ragan-Kelley, Connelly Barnes, Andrew Adams, Sylvain Paris, Fredo Durand, and Saman Amarasinghe. Halide: A language and compiler for optimizing parallelism, locality, and recomputation in image processing pipelines. In ACM SIGPLAN Conference on Programming Language Design and Implementation, Seattle, WA, June 2013.

- Phitchaya Mangpo Phothilimthana, Jason Ansel, Jonathan Ragan-Kelley, and Saman Amarasinghe. Portable performance on heterogeneous architectures. In The International Conference on Architectural Support for Programming Languages and Operating Systems, Houston, TX, March 2013.

- Maciej Pacula, Jason Ansel, Saman Amarasinghe, and Una-May O'Reilly. Hyperparameter tuning in bandit-based adaptive operator selection. In European Conference on the Applications of Evolutionary Computation, Malaga, Spain, Apr 2012.

- Jason Ansel, Maciej Pacula, Yee Lok Wong, Cy Chan, Marek Olszewski, Una-May O'Reilly, and Saman Amarasinghe. Siblingrivalry: Online autotuning through local competitions. In International Conference on Compilers Architecture and Synthesis for Embedded Systems, Tampere, Finland, Oct 2012.

- Jonathan Ragan-Kelley, Andrew Adams, Sylvain Paris, Marc Levoy, Saman Amarasinghe, and Fredo Durand. Decoupling algorithms from schedules for easy optimization of image processing pipelines. ACM Transactions on Graphics, 31(4), July 2012.

- Dan Alistarh, Patrick Eugster, Maurice Herlihy, Alexander Matveev, and Nir Shavit. Stacktrack: An automated transactional approach to concurrent memory reclamation. In Proceedings of the Ninth European Conference on Computer Systems, EuroSys '14, pages 25:1–25:14, New York, NY, USA, 2014. ACM.

- Jason Ansel, Shoaib Kamil, Kalyan Veeramachaneni, Una-May O'Reilly, and Saman Amarasinghe. Opentuner: An extensible framework for program autotuning. Technical Report MIT/CSAIL Technical Report MIT-CSAIL-TR-2013-026, Massachusetts Institute of Technology, Cambridge, MA, Nov 2013.

- Alexander Matveev and Nir Shavit. Reduced hardware norec: A safe and scalable hybrid transactional memory. In 20th International Conference on Architectural Support for Programming Languages and Operating Systems, ASPLOS 2015, Istanbul, Turkey, 2015. ACM.

- Sasa Misailovic, Michael Carbin, Sara Achour, Zichao Qi, and Martin C. Rinard. Chisel: Reliability- and accuracy-aware optimization of approximate computational kernels. In Proceedings of the 2014 ACM International Conference on Object Oriented Programming Systems Languages & Applications, OOPSLA '14, pages 309–328, New York, NY, USA, 2014. ACM.

- Zhilei Xu, Shoaib Kamil, and Armando Solar-Lezama. MSL: A synthesis enabled language for distributed implementations. In Proceedings of the International Conference for High Performance Computing, Networking, Storage and Analysis, SC '14, pages 311–322, Piscataway, NJ, USA, 2014. IEEE Press.

- F. Augustin and Y. M. Marzouk. Uncertainty quantification in high performance computing (invited position paper). SIGPLAN Workshop on Probabilistic and Approximate Computing (APPROX), 2014.

- David Grove, Josh Milthorpe, and Olivier Tardieu. Supporting array programming in X10. In Proceedings of ACM SIGPLAN International Workshop on Libraries, Languages, and Compilers for Array Programming, ARRAY'14, pages 38:38–38:43, New York, NY, USA, 2014. ACM.

- Wei Zhang, Olivier Tardieu, David Grove, Benjamin Herta, Tomio Kamada, Vijay Saraswat, and Mikio Takeuchi. GLB: Lifeline-based global load balancing library in X10. In Proceedings of the First Workshop on Parallel Programming for Analytics Applications, PPAA '14, pages 31–40, New York, NY, USA, 2014. ACM.

- Olivier Tardieu, David Grove, Benjamin Herta, Tomio Kamada, Vijay Saraswat, Mikio Takeuchi, and Wei Zhang. X10 for Productivity and Performance at Scale: A Submission to the 2013 HPC Class II Challenge, October 2013.

- Craig Rasmussen, Matthew Sottile, Daniel Nagle, and Soren Rasmussen. Locally-oriented programming: A simple programming model for stencil-based computations on multi-level distributed memory architectures. In Proceedings of Euro-Par 2015 Parallel Processing, Lecture Notes in Computer Science. Springer International Publishing, 2015. Submitted, February 2015.

- Thomas Steel Henretty. Performance Optimization of Stencil Computations on Modern SIMD Architectures. PhD thesis, The Ohio State University, 2014.

- Justin Andrew Holewinski. Automatic Code Generation for Stencil Computations on GPU Architectures. PhD thesis, The Ohio State University, 2012.

- Mahesh Ravishankar. Automatic parallelization of loops with data dependent control flow and array access patterns. PhD thesis, The Ohio State University, 2014.

- Kevin Alan Stock. Vectorization and Register Reuse in High Performance Computing. PhD thesis, The Ohio State University, 2014.

- Tom Henretty, Richard Veras, Franz Franchetti, Louis-Noël Pouchet, J. Ramanujam, and P. Sadayappan. A stencil compiler for short-vector SIMD architectures. In Proceedings of the 27th International ACM Conference on International Conference on Supercomputing, ICS '13, pages 13–24, New York, NY, USA, 2013. ACM.

- Justin Holewinski, Louis-Noël Pouchet, and P. Sadayappan. High-performance code generation for stencil computations on GPU architectures. In Proceedings of the 26th ACM International Conference on Supercomputing, ICS '12, pages 311–320, New York, NY, USA, 2012. ACM.

- Louis-Noël Pouchet, Peng Zhang, P. Sadayappan, and Jason Cong. Polyhedral-based data reuse optimization for configurable computing. In Proceedings of the ACM/SIGDA International Symposium on Field Programmable Gate Arrays, FPGA '13, pages 29–38, New York, NY, USA, 2013. ACM.

- S. Rajbhandari, A. Nikam, Pai-Wei Lai, K. Stock, S. Krishnamoorthy, and P. Sadayappan. CAST: Contraction algorithm for symmetric tensors. In Parallel Processing (ICPP), 2014 43rd International Conference on, pages 261–272, Sept 2014.

- Samyam Rajbhandari, Akshay Nikam, Pai-Wei Lai, Kevin Stock, Sriram Krishnamoorthy, and P. Sadayappan. A communication-optimal framework for contracting distributed tensors. In Proceedings of the International Conference for High Performance Computing, Networking, Storage and Analysis, SC '14, pages 375–386, Piscataway, NJ, USA, 2014. IEEE Press.

- Mahesh Ravishankar, John Eisenlohr, Louis-Noël Pouchet, J. Ramanujam, Atanas Rountev, and P. Sadayappan. Code generation for parallel execution of a class of irregular loops on distributed memory systems. In Proceedings of the International Conference on High Performance Computing, Networking, Storage and Analysis, SC '12, pages 72:1–72:11, Los Alamitos, CA, USA, 2012. IEEE Computer Society Press.

- Mahesh Ravishankar, John Eisenlohr, Louis-Noël Pouchet, J. Ramanujam, Atanas Rountev, and P. Sadayappan. Automatic parallelization of a class of irregular loops for distributed memory systems. ACM Transactions on Parallel Computing, 1(1):7:1–7:37, September 2014.

- Mahesh Ravishankar, Roshan Dathathri, Venmugil Elango, Louis-Noël Pouchet, J Ramanujam, Atanas Rountev, and P Sadayappan. Distributed memory code generation for mixed irregular/regular computations. In Proceedings of the 20th ACM SIGPLAN Symposium on Principles and Practice of Parallel Programming, pages 65–75. ACM, 2015.

- Kevin Stock, Martin Kong, Tobias Grosser, Louis-Noël Pouchet, Fabrice Rastello, J. Ramanujam, and P. Sadayappan. A framework for enhancing data reuse via associative reordering. In Proceedings of the 35th ACM SIGPLAN Conference on Programming Language Design and Implementation, PLDI '14, pages 65–76, New York, NY, USA, 2014. ACM.

- Venmugil Elango, Fabrice Rastello, Louis-Noël Pouchet, J. Ramanujam, and P. Sadayappan. On characterizing the data movement complexity of computational DAGs for parallel execution. In Proceedings of the 26th ACM Symposium on Parallelism in Algorithms and Architectures, SPAA '14, pages 296–306, New York, NY, USA, 2014. ACM.

- Naznin Fauzia, Venmugil Elango, Mahesh Ravishankar, J. Ramanujam, Fabrice Rastello, Atanas Rountev, Louis-Noël Pouchet, and P. Sadayappan. Beyond reuse distance analysis: Dynamic analysis for characterization of data locality potential. ACM Trans. Archit. Code Optim., 10(4):53:1–53:29, Dec. 2013.

- Martin Kong, Richard Veras, Kevin Stock, Franz Franchetti, Louis-Noël Pouchet, and P. Sadayappan. When polyhedral transformations meet SIMD code generation. In Proceedings of the 34th ACM SIGPLAN Conference on Programming Language Design and Implementation, PLDI '13, pages 127–138, New York, NY, USA, 2013. ACM.

- Lai Wei and John Mellor-Crummey. Autotuning tensor transposition. In Proceedings of the 19th International Workshop on High-level Parallel Programming Models and Supportive Environments, May 2014.

- Vivek Sarkar. Jun Shirako, Louis-Noel Pouchet. Oil and water can mix: An integration of polyhedral and ast-based transformations. In IEEE Conference on High Performance Computing, Networking, Storage and Analysis (SC'14). IEEE, 2014.

- Prasanth Chatarasi, Jun Shirako, and Vivek Sarkar. Polyhedral transformations of explicitly parallel programs. In 5th International Workshop on Polyhedral Compilation Techniques (IMPACT 2015). IEEE, 2015.

- Kamal Sharma. Locality Transformations of Computation and Data for Portable Performance. PhD thesis, Rice University, August 2014.

- Jun Shirako and Vivek Sarkar. Oil and water can mix! Experiences with integrating polyhedral and AST-based Transformations. In 17th Workshop on Compilers for Parallel Programming, July 2013.

- Jisheng Zhao, Michael Burke, and Vivek Sarkar. Rice ROSE Compositional Analysis and Transformation Framework (R2CAT). Technical report, LLNL Technical Report 590233, October 2012.

- Phitchaya Mangpo Phothilimthana, Tikhon Jelvis, Rohin Shah, Nishant Totla, Sarah Chasins, and Rastislav Bodik. Chlorophyll: synthesis-aided compiler for low-power spatial architectures. In O'Boyle and Pingali, editors, ACM SIGPLAN Conference on Programming Language Design and Implementation, PLDI '14, page 42.

- Emina Torlak and Rastislav Bodik. A lightweight symbolic virtual machine for solver-aided host languages. In O'Boyle and Pingali, editors, ACM SIGPLAN Conference on Programming Language Design and Implementation, PLDI '14, page 54.

- Rajeev Alur, Rastislav Bodik, Garvit Juniwal, Milo M. K. Martin, Mukund Raghothaman, Sanjit A. Seshia, Rishabh Singh, Armando Solar-Lezama, Emina Torlak, and Abhishek Udupa. Syntax-guided synthesis. In Formal Methods in Computer-Aided Design, FMCAD 2013, Portland, OR, USA, October 20-23, 2013, pages 1–8. IEEE, 2013.

- Emina Torlak and Rastislav Bodik. Growing solver-aided languages with rosette. In Antony L. Hosking, Patrick Th. Eugster, and Robert Hirschfeld, editors, ACM Symposium on New Ideas in Programming and Reflections on Software, Onward! 2013, part of SPLASH '13, Indianapolis, IN, USA, October 26-31, 2013, pages 135–152. ACM, 2013.

- Leo A. Meyerovich, Matthew E. Torok, Eric Atkinson, and Rastislav Bodik. Parallel schedule synthesis for attribute grammars. In Alex Nicolau, Xiaowei Shen, Saman P. Amarasinghe, and Richard W. Vuduc, editors, ACM SIGPLAN Symposium on Principles and Practice of Parallel Programming, PPoPP '13, Shenzhen, China, February 23-27, 2013, pages 187–196. ACM, 2013.

- Michael F. P. O'Boyle and Keshav Pingali, editors. ACM SIGPLAN Conference on Programming Language Design and Implementation, PLDI '14, Edinburgh, United Kingdom, June 09-11, 2014. ACM, 2014.

- Tan Nguyen, Pietro Cicotti, Eric Bylaska, Dan Quinlan, and Scott B. Baden. Bamboo: translating mpi applications to a latency-tolerant, data-driven form. In Proceedings of the International Conference on High Performance Computing, Networking, Storage and Analysis, SC '12, pages 39:1–39:11, Los Alamitos, CA, USA, 2012. IEEE Computer Society Press.

- Tan Nguyen and Scott B. Baden. Bamboo-preliminary scaling results on multiple hybrid nodes of knights corner and sandy bridge processors. In Proc. WOLFHPC: Workshop on Domain-Specific Languages and High-Level Frameworks for HPC, SC13, The International Conference for High Performance Computing, Networking, Storage and Analysis, Denver CO, 2013.

DTEC Usage

| # | Project | Technology Readiness | TRL Level (1-9) | Downloads (Last 14 Days) | Visitors (Last 14 Days) | Commits (Last 12 Months) | Contributors (Last 12 Months) | OpenHub.net Analysis | Institutional Usage | University Usage | DTEC Deliverables | URL |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 1 | ROSE | Open source; used by LLNL ASC application teams and by research groups both internal and external to DOE | 7 | 21 | 434 | 1808 | 16 | 28,464 total commits by 123 developers and 5M lines of code: https://www.openhub.net/p/rose-compiler | IBM, Intel, DOE LLNL, DOE LBL, DoD | MIT, University of Utah, Texas A&M, Rice, UCSD, University of Oregon, University of Colorado, CalTech, UIUC | Source-to-source compiler framework, including Rosetta IR node support, specific DSL tools, CodeThorn verification tools, and X10 language support | http://www.roseCompiler.org |

| 2 | Halide | | 7 | 1251 | 595 | 10597 | 61 | | Google, Adobe, Intel, Qualcomm, Facebook + more | Many... | | http://halide-lang.org/ |

| 3 | OpenTuner | | 6 | 51 | 59 | 330 | 11 | | | | | http://opentuner.org/ |

| 4 | Rely Language | |||||||||||

| 5 | MSL - Synthesis Language / Sketch Synthesizer | Open source, released as part of the Sketch language. | ? | ? | 210 | 5 | Adobe | MIT, UW, Rice, Berkeley | Synthesis framework for SPMD kernels | http://people.csail.mit.edu/asolar | ||

| 6 | Simit Language | Language for physical simulations and sparse systems | 5 | 70 | 1284 | 2418 | 11 | | Adobe | MIT, Berkeley, Stanford, UT Austin, U Toronto, Texas A&M | | |

| 7 | X10 Runtime | Open source language with compiler, runtime and IDE; used by research teams within IBM and international research institutes/universities; demonstrated at petascale in HPC challenge; substantial test suite and libraries and applications (see http://x10-lang.org/x10-community/applications.html) | 7 | 243 (SourceForge) | 164 (Github repo) | 606 | 14 | 27K commits made by 100 contributors and 700K lines of code: https://www.openhub.net/p/x10 | IBM, RIKEN, INRIA | http://x10-lang.org/x10-community/universities-using-x10.html University of Alberta, Australian National University, UCLA, Carnegie Mellon University, Columbia University, TU Dresden, University of Erlangen-Nuremberg, Georgetown University, Imperial College London, IMT Institute for Advanced Studies Lucca, IIT Madras, Karlsruhe Institute of Technology, University of Kassel, Kobe University, McGill University, University of Paris-1 Sorbonne/CRI, Universidad Nacional Autónoma de México, TU Munich, Tianjin University, Tokyo Institute of Technology, University of Tokyo | APGAS libraries for C++, Java and Scala; X10 compiler support for user-defined control constructs, and improvements to X10DT IDE, X10RT transport layer over MPI User-Level Fault Mitigation (ULFM), X10RT transport layer over MPI-3; proxy applications LULESH, MCCK, CoMD | http://x10-lang.org/ |

| 8 | Rosebud | Incomplete research prototype | 3 | 0 | 0 | 200 | 1 | | | | | http://svn.rice.edu/r/rosebud. |

| 9 | PolyOpt | Open source | 4 | 5 | 27 | 103 | 3 | N/A | | OSU, INRIA, UCLA, UIUC, CSU,... | Polyhedral optimizer for ROSE, including high-order stencil optimizations for CPUs. | http://hpcrl.cse.ohio-state.edu/wiki/index.php/Polyhedral_Compilation |

| 10 | Verification Tools (CodeThorn) | Released within the ROSE distribution; currently used in multiple international verification competitions, placing 3rd, 2nd, 1st, and 1st over the last four years. | | | | | | | | | | http://www.roseCompiler.org |

| 11 | Bamboo | Open source translator and runtime for converting MPI source to a data-driven formulation that hides communication | 4 | 1 | 1 | NYU | Bamboo Translator for C++ | http://cseweb.ucsd.edu/groups/hpcl/scg/BambooWebsite | ||||

| 12 | Open Fortran Project | Open source Fortran compiler front end. Used by: 1. ROSE (Fortran parser); 2. Open MPI (tool generation); 3. TAU Performance Tools (Fortran parser); 4. OpenCoarrays (coarray to library-call transformations); 5. University of Oregon (MATLAB MEX file generator); 6. EDX (Fortran to C# conversions). | 7 | 1 | 18 | 318 | 4 | | | | | https://github.com/OpenFortranProject/ofp-sdf |

| 13 | http://people.csail.mit.edu/asolar | http://homes.cs.washington.edu/~emina/rosetta | ||||||||||

| 14 | DTEC Web Site | | | | | | | | | | | http://portal.nersc.gov/project/rosecompiler/dtec/wordpress/ |

| 15 | Formal Verification | Coq proofs of stencil algorithms and programs | 1 | 2 | ||||||||

| 16 | AMRStencil | C++11 Embedded Domain Specific Language for Stencil-based Adaptive Mesh Refinement algorithms. | 4 | 8 | 20 | 5 | LBNL, LLNL | AMRStencil API. Reference Manual. Multigrid Example. Euler's Equation example. | svn repo https://anag-repo.lbl.gov/svn/AMRStencil | |||