Traleika Glacier


| Traleika Glacier X-Stack | |
|---|---|
| Team Members | Intel, Reservoir Labs, ETI, UDEL, UC San Diego, Rice U., UIUC, PNNL |
| PI | Shekhar Borkar (Intel) |
| Co-PIs | Wilf Pinfold (Intel), Richard Lethin (Reservoir Labs), TBD (ETI), Guang Gao (UDEL), Laura Carrington (UC San Diego), Vivek Sarkar (Rice U.), David Padua (UIUC), Josep Torrellas (UIUC), John Feo (PNNL) |
| Website | https://www.xstackwiki.com/index.php/Traleika_Glacier |

Team Members

- Intel: Shekhar Borkar (PI); Hardware guidance, HW/SW co-design, resiliency, technical management

- Reservoir Labs: Richard Lethin (PI); Programming system, R-Stream, tools, optimization

- ET International (ETI): PI TBD; Simulators, execution model and runtime support

- University of Delaware (UDEL): Guang Gao (PI); Execution model research

- University of California, San Diego (UC San Diego): Laura Carrington (PI); Applications

- Rice University: Vivek Sarkar (PI); Programming system, runtime system

- University of Illinois at Urbana-Champaign (UIUC): David Padua, Josep Torrellas (PIs); Programming system, Hierarchically Tiled Arrays (HTA), architecture, system architecture evaluation

- Pacific Northwest National Laboratory (PNNL): John Feo (PI); Kernels and proxy apps for evaluation

Goals and Objectives

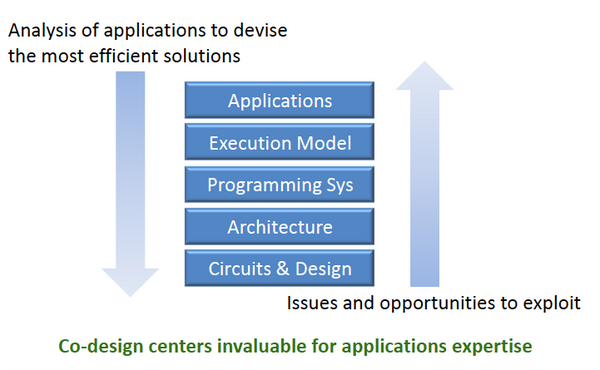

Goal: The Traleika Glacier X-Stack program will develop X-Stack software components in close collaboration with application specialists at the DOE co-design centers and with the best available knowledge of the Exascale systems we anticipate will be available in 2018/2020.

Description: Intel has built a straw-man hardware platform that embodies potential technology solutions to well-understood challenges. This straw-man is implemented as a simulator that Traleika team members will use to test the software components under investigation. Co-design will be achieved by developing representative application components that stress software components and platform technologies, then using these stress tests to refine platform and software elements iteratively toward an optimum solution. All software and simulator components will be developed as open source, facilitating open cross-team collaboration. The interface between the software components and the simulator will be built so that the back end can be replaced with current production architectures (MIC and Xeon). This provides a broadly available software development vehicle and eases the integration of new tools and compilers conceived and developed under this proposal with existing environments such as MPI, OpenMP, and OpenCL.

The Traleika Glacier X-Stack team brings together strong technical expertise from across the exascale software stack. It combines applications of high interest to the DOE from five National Labs with software systems expertise from Reservoir Labs, ET International, the University of Illinois, University of California San Diego, University of Delaware, and Rice University, on a foundation of platform excellence from Intel. This project builds collaboration among many of the partners, making this team uniquely capable of rapid progress. The research is expected not only to advance the state of the art in system software for high performance computing but also to provide invaluable feedback through the co-design loop for hardware design and application development. By breaking down research and development barriers between layers in the solution stack, this collaboration and the open tools it produces will spur innovation for the next generation of high performance computing systems.

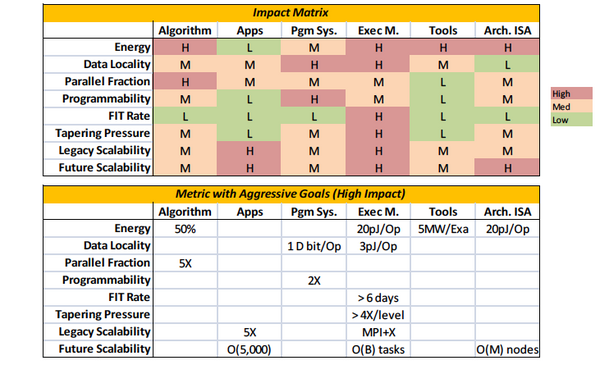

Objectives:

- Energy efficiency: SW components interoperate, harmonize, exploit HW features, and optimize the system for energy efficiency

- Data locality: Programming (PGM) system and system SW optimize to reduce data movement

- Scalability: SW components scalable and portable to O(10^9), i.e., extreme parallelism

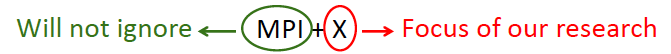

- Programmability: New (Codelet) & legacy (MPI), with gentle slope for productivity

- Execution model: Objective function based, dynamic, global system optimization

- Self-awareness: Dynamically respond to changing conditions and demands

- Resiliency: Asymptotically provide reliability of N-modular redundancy using HW/SW co-design; HW detection, SW correction

Status Reports

- Traleika Glacier X-Stack Highlights, April 1, 2014

- Traleika Glacier X-Stack Status Review, March 25, 2014

Publications

Intel

- Romain Cledat, Sagnak Tasirlar (Rice University) and Rob Knauerhase (Intel), Programmer Obliviousness is Bliss: Ideas for Runtime-Managed Granularity. To be published at HotPar ’13, June 24, 2013, San Jose, CA - https://www.usenix.org/conference/hotpar13

- Shekhar Borkar, How to stop interconnects from hindering the future of computing!, Optical Interconnects Conference, May 2013

- Shekhar Borkar, Exascale Computing—a fact or a fiction?, IPDPS, May 2013

- Functional Simulator for Exascale System Research, Romain Cledat (Intel), Josh Fryman (Intel), Ivan Ganev (Intel), Sam Kaplan (ETI), Rishi Khan (ETI), Asit Mishra (Intel), Bala Seshasayee (Intel), Ganesh Venkatesh (Intel), Dave Dunning (Intel), Shekhar Borkar (Intel), Workshop on Modeling & Simulation of Exascale Systems & Applications, September 18th-19th, 2013, University of Washington, Seattle, WA - http://hpc.pnl.gov/modsim/2013/

University of Delaware

- Joshua Suetterlein, Stephane Zuckerman, and Guang R. Gao, An Implementation of the Codelet Model. To be published in the proceedings of the 19th International European Conference on Parallel and Distributed Computing (EuroPar 2013), August 26-30, Aachen, Germany.

- Chen Chen, Yao Wu, Stephane Zuckerman, and Guang R. Gao. Towards Memory-Load Balanced Fast Fourier Transformations in Fine-Grain Execution Models. To be published in Proceedings of 2013 Workshop on Multithreaded Architectures and Applications (MTAAP 2013). 27th IEEE International Parallel & Distributed Processing Symposium, May 24, Boston, MA, USA.

- Aaron Myles Landwehr, Stephane Zuckerman, Guang R. Gao. Toward a Self-Aware System for Exascale Architectures. CAPSL Technical Memo 123, June 2013.

Rice University

- Integrating Asynchronous Task Parallelism with MPI. Sanjay Chatterjee, Sağnak Taşırlar, Zoran Budimlić, Vincent Cavé, Milind Chabbi, Max Grossman, Yonghong Yan and Vivek Sarkar. 27th IEEE International Parallel & Distributed Processing Symposium (IPDPS 2013), May 2013, Boston, MA.

- Compiler Optimization of an Application-specific Runtime, Kathleen Knobe (Intel) and Zoran Budimlić (Rice), CPC 2013: 17th Workshop on Compilers for Parallel Computing, July 3-5, 2013, Lyon, France. (to appear).

- Compiler Optimization of an Application-specific Runtime. Kathleen Knobe (Intel) and Zoran Budimlic (Rice). In Compilers for Parallel Computers (CPC), July 2013.

- Compiler Optimization of an Application-specific Runtime. Kathleen Knobe (Intel) and Zoran Budimlic (Rice). Abstract to appear in CnC'13 workshop, September 2013.

- Automatic Selection of Distribution Functions for Distributed CnC, Kamal Sharma (Rice), Kathleen Knobe (Intel), Frank Schlimbach (Intel), Vivek Sarkar (Rice). Abstract to appear in CnC'13 workshop, September 2013.

- CnC on Open Community Runtime, Alina Sbirlea (Rice) and Zoran Budimlic (Rice). Abstract to appear in CnC'13 workshop, September 2013.

- Bounded Memory Scheduling of CnC Programs, Dragos Sbirlea (Rice), Zoran Budimlic (Rice) and Vivek Sarkar (Rice). Abstract to appear in CnC'13 workshop, September 2013.

- CDSC-GL: A CnC-inspired Graph Language, Zoran Budimlic (Rice), Jason Cong (UCLA), Zhou Li (UCLA), Louis-Noel Pouchet (UCLA), Vivek Sarkar (Rice), Alina Sbirlea (Rice), Mo Xu (UCLA), Pen Zhang (UCLA). Abstract to appear in CnC'13 workshop, September 2013.

- Bounded memory scheduling of dynamic task graphs, Dragos Sbirlea, Zoran Budimlić, Vivek Sarkar, submitted to IPDPS 2014.

CnC’13 Workshop, September 2013

The following were presented at the CnC’13 workshop in September 2013, the fifth annual CnC workshop. It was co-located with Languages and Compilers for Parallel Computing (LCPC) in Santa Clara, CA.

- Compiler Optimization of an Application-Specific Runtime. Kathleen Knobe (Intel) and Zoran Budimlic (Rice). *

- The CnC tuning capability, Sanjay Chatterjee (Rice), Zoran Budimlic (Rice), Vivek Sarkar (Rice), Kathleen Knobe (Intel).

- Automatic Selection of Distribution Functions for Distributed CnC, Kamal Sharma (Rice), Kathleen Knobe (Intel), Frank Schlimbach (Intel), Vivek Sarkar (Rice)*.

- CnC on Open Community Runtime, Alina Sbirlea (Rice) and Zoran Budimlic (Rice).

- Bounded Memory Scheduling of CnC Programs, Dragos Sbirlea (Rice), Zoran Budimlic (Rice) and Vivek Sarkar (Rice). *

- CDSC-GL: A CnC-inspired Graph Language, Zoran Budimlic (Rice), Jason Cong (UCLA), Zhou Li (UCLA), Louis-Noel Pouchet (UCLA), Vivek Sarkar (Rice), Alina Sbirlea (Rice), Mo Xu (UCLA), Pen Zhang (UCLA).*

- Implementing Asynchronous Checkpoint/Restart for CnC, Nick Vrvilo and Vivek Sarkar (Rice University), Kath Knobe and Frank Schlimbach (Intel)

- Automatic CnC generation from a sequential specification, Nicolas Vasilache (Reservoir Labs, Inc.)

Note: Asterisked (*) presentations are supportive of the Traleika Glacier X-Stack strategic aims and objectives but not directly under the statement of work.

Joint Publications

- Compiler Support for Software Cache Coherence, Sanket Tavarageri, Wooil Kim, Josep Torrellas, and P. Sadayappan, with Pacific Northwest National Laboratory (John Feo, Andres Marquez), submitted for publication.

- ASAFESSS: A Scheduler-Driven Adaptive Framework for Extreme Scale Software Stacks, St. John, T. et al., 4th International Workshop on Adaptive Self-tuning Computing Systems 2014, Vienna, Austria. (Best paper award).

Presentations and Other Collateral

- Birds-of-a-Feather session at SuperComputing12, November 14, 2012. See the OCR homepage at https://01.org/projects/open-community-runtime.

- Traleika Glacier X-Stack Overview, presented by Laura Carrington (UCSD) at the Fourth ExaCT All Hands Meeting, Sandia National Laboratories, May 14, 2013

- The Open Community Runtime Framework for Exascale Systems, Birds of a Feather Session, SC13, Denver, November 19, 2013, Vivek Sarkar (Rice), Rob Knauerhase (Intel), Rich Lethin (Reservoir Labs)

- Experience developing CnC versions of DOE Applications - Ellen Porter (PNNL), Kath Knobe (Intel), John Feo (PNNL) - 4/15/14

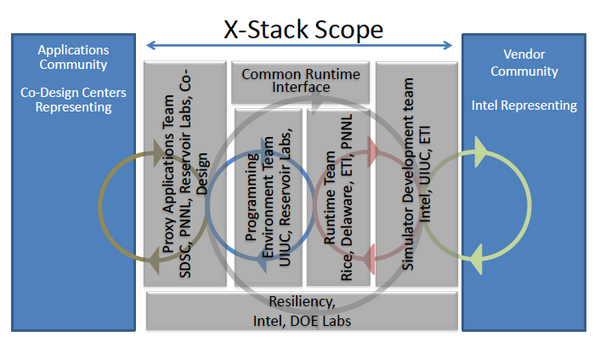

Scope of the Project

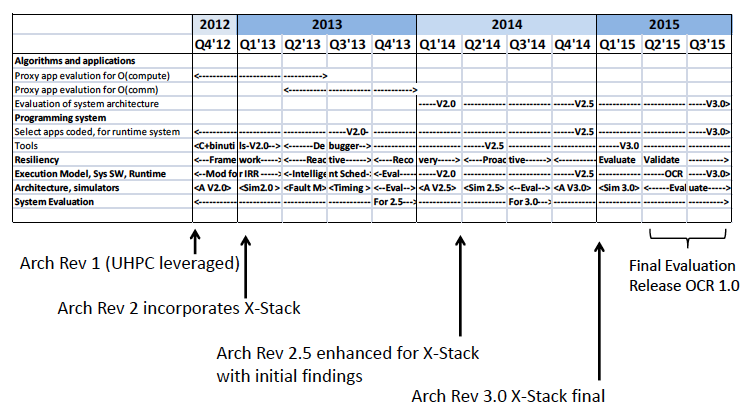

Roadmap

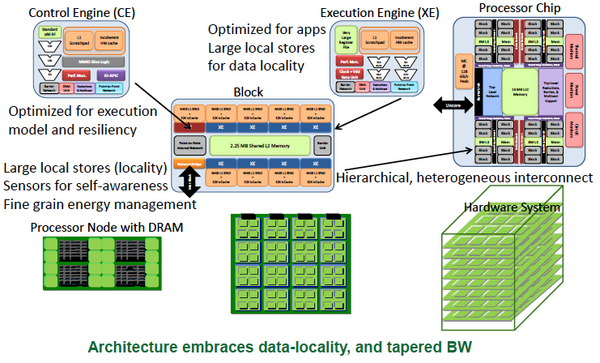

Architecture

Straw-man System Architecture and Evaluation

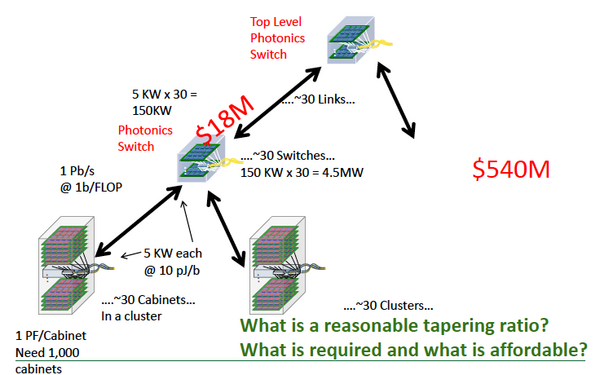

Data-locality and BW Tapering, Why So Important?

Programming and Execution Models

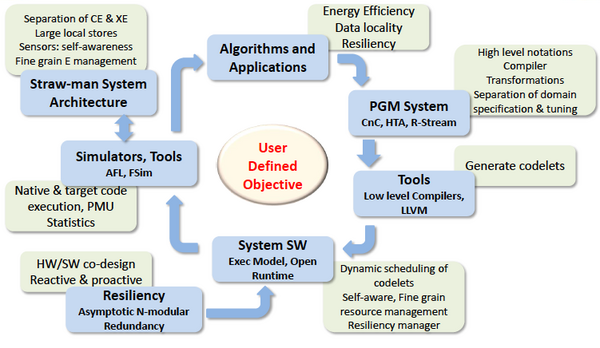

Programming model

- Separation of concerns: Domain specification & HW mapping

- Express data locality with hierarchical tiling

- Global, shared, non-coherent address space

- Optimization and auto generation of codelets (HW specific)
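The idea of expressing data locality with hierarchical tiling can be sketched roughly as follows. This is only an illustration in plain Python, not the HTA library or any Traleika component: the function names `tile_ranges`, `hierarchical_tiles`, and `tiled_sum`, and the choice of exactly two tiling levels, are assumptions made for the example. The outer tiles could stand for blocks mapped to a node and the inner tiles for blocks mapped to a core.

```python
def tile_ranges(n, tile):
    """Split range(n) into consecutive (start, stop) tiles of at most `tile` elements."""
    return [(s, min(s + tile, n)) for s in range(0, n, tile)]


def hierarchical_tiles(rows, cols, outer, inner):
    """Yield (outer_id, inner_id, row_range, col_range) for a two-level tiling."""
    for oi, (r0, r1) in enumerate(tile_ranges(rows, outer)):
        for oj, (c0, c1) in enumerate(tile_ranges(cols, outer)):
            # Each outer tile is tiled again with the smaller inner tile size.
            for ii, (a0, a1) in enumerate(tile_ranges(r1 - r0, inner)):
                for ij, (b0, b1) in enumerate(tile_ranges(c1 - c0, inner)):
                    yield ((oi, oj), (ii, ij),
                           (r0 + a0, r0 + a1), (c0 + b0, c0 + b1))


def tiled_sum(matrix, outer, inner):
    """Sum a 2D list tile by tile, so each inner tile is visited as one unit."""
    total = 0
    rows, cols = len(matrix), len(matrix[0])
    for _, _, (r0, r1), (c0, c1) in hierarchical_tiles(rows, cols, outer, inner):
        for r in range(r0, r1):
            total += sum(matrix[r][c0:c1])
    return total


# Hypothetical 8x8 example: 4x4 outer tiles, each split into 2x2 inner tiles.
matrix = [[r * 8 + c for c in range(8)] for r in range(8)]
tiles = list(hierarchical_tiles(8, 8, outer=4, inner=2))
```

Because the domain computation (`tiled_sum`) only consumes tile ranges, the tile sizes can be changed to match a different hardware mapping without touching the computation itself, which is the separation of concerns the list describes.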

Execution model

- Dataflow-inspired, tiny codelets (self-contained)

- Dynamic, event-driven scheduling, non-blocking

- Dynamic decision to move computation to data

- Observation-based adaptation (self-awareness)

- Implemented in the runtime environment
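The execution-model points above can be illustrated with a minimal sketch. It assumes a hypothetical `Codelet` class and `run` function that are not part of OCR, SWARM, or any other Traleika runtime, and it runs on a single thread for clarity. Each codelet is a small self-contained function that becomes ready only when all of its inputs have been produced, and the completion of a codelet is the event that may enable its consumers.

```python
from collections import deque


class Codelet:
    """Hypothetical codelet: a small function plus the names of its inputs."""

    def __init__(self, name, func, inputs=()):
        self.name = name
        self.func = func
        self.inputs = tuple(inputs)


def run(codelets):
    """Fire each codelet once all of its inputs are available (dataflow order)."""
    results = {}
    by_name = {c.name: c for c in codelets}
    # Count unsatisfied inputs and record which codelets consume each output.
    pending = {c.name: len(c.inputs) for c in codelets}
    consumers = {c.name: [] for c in codelets}
    for c in codelets:
        for dep in c.inputs:
            consumers[dep].append(c.name)

    ready = deque(name for name, n in pending.items() if n == 0)
    order = []
    while ready:
        name = ready.popleft()
        c = by_name[name]
        # A ready codelet already has all inputs, so it never blocks.
        results[name] = c.func(*(results[d] for d in c.inputs))
        order.append(name)
        # Completion is the event that may enable downstream codelets.
        for nxt in consumers[name]:
            pending[nxt] -= 1
            if pending[nxt] == 0:
                ready.append(nxt)
    return results, order


# Hypothetical four-codelet graph: sq depends on sum, which depends on a and b.
graph = [
    Codelet("a", lambda: 2),
    Codelet("b", lambda: 3),
    Codelet("sum", lambda x, y: x + y, inputs=("a", "b")),
    Codelet("sq", lambda s: s * s, inputs=("sum",)),
]
results, order = run(graph)
```

A real runtime would dispatch ready codelets to many cores and could use observed behavior to decide where each one runs; the sketch only shows the dependency-driven firing rule.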

Separation of concerns

- User application, control, and resource management

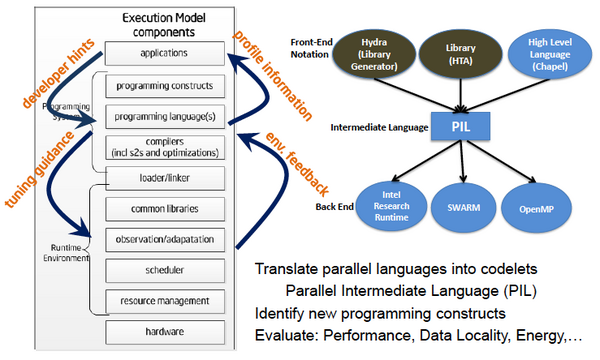

Programming System Components

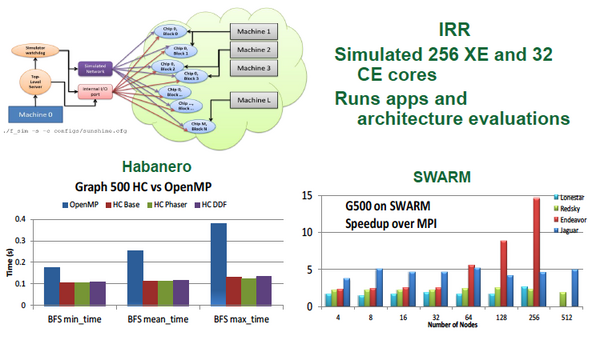

Runtime

- Different runtimes target different aspects

- IRR: targeted for Intel Straw-man architecture

- SWARM: runtime for a wide range of parallel machines

- DAR3TS: explore codelet PXM using portable C++

- Habanero-C: interfaces IRR, tie-in to CnC

- All explore related aspects of the codelet Program Execution Model (PXM)

- Goal: Converge towards Open Community Runtime (OCR)

- Enabling technology development for codelet execution

- Model systems, foster novel runtime systems research

- Greater visibility through SW stack -> efficient computing

- Break OS/Runtime information firewall

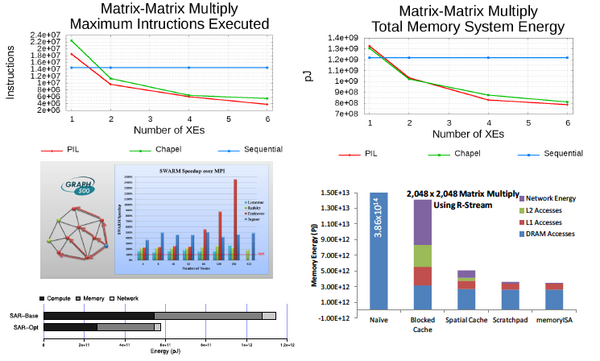

Some Promising Results:

Runtime Research Agenda

- Locality-aware scheduling: heuristics for locality and energy efficiency

- Extensions to standard Habanero-C runtime

- Adaptive boosting and idling of hardware

- Avoid energy-expensive unsuccessful steals that perform no work

- Turbo mode for a core executing serial code

- Fine grain resource (including energy) management

- Dynamic data-block movement

- Co-locate codelets and data

- Move codelets to data

- Introspection and dynamic optimization

- Performance counters, sensors provide real time information

- Optimization of the system for user defined objective

- (Go beyond energy proportional computing)
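As a rough illustration of the "move codelets to data" item in the agenda above, the sketch below picks the node on which a codelet would need the fewest bytes brought in from elsewhere. The function names `bytes_moved` and `place_codelet`, the node numbering, and the block sizes are all hypothetical; the source does not describe the actual heuristics used by the runtimes, and a real scheduler would also weigh load balance and energy.

```python
def bytes_moved(node, blocks, location, size):
    """Bytes that must travel to `node` if the codelet runs there."""
    return sum(size[b] for b in blocks if location[b] != node)


def place_codelet(blocks, location, size, nodes):
    """Pick the node that minimizes data movement for one codelet."""
    return min(nodes, key=lambda n: bytes_moved(n, blocks, location, size))


# Hypothetical example: three data blocks spread over nodes 0 and 1.
location = {"A": 0, "B": 1, "C": 1}
size = {"A": 4096, "B": 65536, "C": 1024}
best = place_codelet(["A", "B", "C"], location, size, nodes=[0, 1, 2])
```

Here running on node 1 requires moving only block A, so the codelet goes to where most of its data already lives rather than pulling the large block B across the interconnect.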

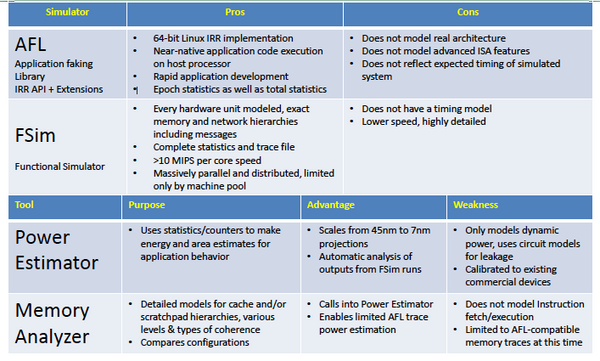

Simulators and Tools

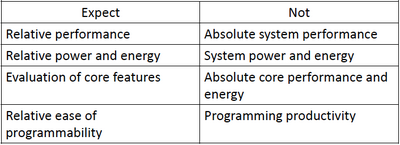

Simulators: what to expect and what not to expect

- Evaluation of architecture features for programming (PGM) and execution (EXE) models

- Relative comparison of performance, energy

- Data movement patterns to memory and interconnect

- Relative evaluation of resource management techniques

Results Using Simulators

Applications and HW-SW Codesign

X-Stack Components