DynAX

From Modelado Foundation


Revision as of 20:00, May 29, 2014

| DynAX | |
|---|---|
| Team Members | ETI, Reservoir Labs, UIUC, PNNL |
| PI | Guang Gao (ETI) |
| Co-PIs | Benoit Meister (Reservoir Labs), David Padua (UIUC), John Feo (PNNL) |
| Website | www.etinternational.com/xstack |

Dynamically Adaptive X-Stack (DynAX) is a team led by ET International that conducts research on runtime software for exascale computing.

Moving forward, exascale software will be unable to rely on minimally invasive system interfaces to provide an execution environment. Instead, a software runtime layer is necessary to mediate between an application and the underlying hardware and software. This proposal describes a model of execution based on codelets: small pieces of work that are sequenced by expressing their interdependencies to the runtime software, rather than by relying on the implicit sequencing of a software thread. It also describes the interactions between the runtime layer, the compiler, and the programming language.
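To illustrate the difference from thread sequencing, here is a minimal single-threaded sketch, assuming a toy scheduler: each codelet keeps a count of unsatisfied dependencies, and it becomes ready only when that count reaches zero. The `Codelet` and `Runtime` classes and their methods are hypothetical and are not the SWARM API.

```python
from collections import deque


class Codelet:
    """A small, nonblocking unit of work plus its dependency bookkeeping."""

    def __init__(self, name, fn):
        self.name = name
        self.fn = fn
        self.pending = 0      # number of unsatisfied dependencies
        self.successors = []  # codelets waiting on this one


class Runtime:
    """Toy scheduler: a codelet becomes ready when its pending count hits 0."""

    def __init__(self):
        self.codelets = []

    def add(self, name, fn):
        codelet = Codelet(name, fn)
        self.codelets.append(codelet)
        return codelet

    def depends(self, before, after):
        """Declare that `after` may not start until `before` has finished."""
        before.successors.append(after)
        after.pending += 1

    def run(self):
        ready = deque(c for c in self.codelets if c.pending == 0)
        trace = []
        while ready:
            codelet = ready.popleft()
            codelet.fn()  # runs to completion; codelets never block
            trace.append(codelet.name)
            for succ in codelet.successors:
                succ.pending -= 1
                if succ.pending == 0:
                    ready.append(succ)
        return trace


# Usage: load -> (sum_left, sum_right) -> combine
data = {}
rt = Runtime()
load = rt.add("load", lambda: data.update(x=[1, 2, 3, 4]))
left = rt.add("sum_left", lambda: data.update(left=sum(data["x"][:2])))
right = rt.add("sum_right", lambda: data.update(right=sum(data["x"][2:])))
combine = rt.add("combine", lambda: data.update(total=data["left"] + data["right"]))
rt.depends(load, left)
rt.depends(load, right)
rt.depends(left, combine)
rt.depends(right, combine)
order = rt.run()
print(order)          # ['load', 'sum_left', 'sum_right', 'combine']
print(data["total"])  # 10
```

The only ordering the runtime sees is the one stated through `depends`. `sum_left` and `sum_right` have no edge between them, so a parallel runtime would be free to run them concurrently.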

The runtime software for exascale computing must handle a very large amount of outstanding work at any given time and manage enormous amounts of data, some of which may be highly volatile. The relationship between work and the data it consumes or produces is crucial to maintaining high performance and low power usage. A poor understanding of data locality can lead to far more communication, which is extremely undesirable in an exascale environment. To associate work with data and to facilitate migrating work to data and vice versa, such a runtime may impose a hierarchy on regions of the system. This hierarchy divides the system along address-space and privacy boundaries, which lets the runtime estimate the cost of communicating between regions. Furthermore, tying data and work to locations in the hierarchy provides a construct to which transparent work stealing and sharing can be applied. This helps keep stolen work near its data and allows shared work to be issued to specific regions.
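The hierarchy idea can be sketched under a few simplifying assumptions. Locales form a tree (system, then node, then core). The estimated cost of communicating between two locales is their distance in that tree. A thief steals from the non-empty queue with the lowest estimated cost. The `Locale` class, the level names, and this cost function are all hypothetical illustrations rather than SWARM's locale model.

```python
class Locale:
    """A node in a hypothetical locale hierarchy (system > node > core)."""

    def __init__(self, name, parent=None):
        self.name = name
        self.parent = parent
        self.depth = 0 if parent is None else parent.depth + 1
        self.queue = []  # work items tied to this locale

    def ancestors(self):
        """This locale followed by each enclosing locale up to the root."""
        chain, node = [], self
        while node is not None:
            chain.append(node)
            node = node.parent
        return chain


def lowest_common_ancestor(a, b):
    b_ancestors = set(b.ancestors())
    for node in a.ancestors():
        if node in b_ancestors:
            return node
    raise ValueError("locales belong to different trees")


def comm_cost(a, b):
    """Estimated cost: tree distance, a proxy for how many region boundaries are crossed."""
    lca = lowest_common_ancestor(a, b)
    return (a.depth - lca.depth) + (b.depth - lca.depth)


def steal(thief, victims):
    """Locality-aware stealing: take the oldest item from the cheapest non-empty victim."""
    candidates = [v for v in victims if v is not thief and v.queue]
    if not candidates:
        return None
    victim = min(candidates, key=lambda v: comm_cost(thief, v))
    return victim.queue.pop(0)


# Usage: two nodes, with cores under each.
system = Locale("system")
node0, node1 = Locale("node0", system), Locale("node1", system)
core00, core01 = Locale("core00", node0), Locale("core01", node0)
core10 = Locale("core10", node1)
core01.queue.append("task-A")
core10.queue.append("task-B")

print(comm_cost(core00, core01))  # 2 (same node)
print(comm_cost(core00, core10))  # 4 (crosses the node boundary)
stolen = steal(core00, [core01, core10])
print(stolen)                     # task-A, taken from the nearby core
```

A real runtime would weight each level differently, for example by measured latency or bandwidth. The tree distance here only stands in for that estimate.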

Compilers also need to reflect the requirements of exascale computing systems. A compiler that supports a codelet execution model must be able to determine appropriate codelet boundaries in software. It must also generate the codelets, along with code that interfaces with both the runtime and a transformed version of the input program. We propose a three-step compilation process. First, program code is compiled to an internal representation that is independent of the high-level language. Second, this representation is compiled to C code that makes API calls into the runtime software. Third, existing C compilers compile the generated sequential code into a platform-specific binary for the target system. Higher-level analysis of the relationship between codelets and data can be performed in the earlier steps, which enables the compiler to emit static hints that help the runtime make scheduling and placement decisions. Compilers can also help provide fault tolerance by supporting containment domains, which the runtime software can use to assist in program checkpointing.
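The following minimal sketch makes the second step concrete. It assumes a toy language-independent IR in which each codelet has a name, a body of sequential C, a list of predecessors, and an optional placement hint. From that IR it emits C source that creates the codelets through a runtime API. `rt_codelet_create`, `rt_depends`, `rt_hint_locale`, `rt_run`, and `rt.h` are hypothetical placeholder names, not SWARM's or SCALE's actual interface. The emitted file would then be handed to an ordinary C compiler, which is the third step.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class CodeletIR:
    """One codelet in a toy, language-independent IR (hypothetical format)."""
    name: str
    body: str                                       # sequential C statement(s)
    preds: List[str] = field(default_factory=list)  # codelets that must finish first
    locale_hint: Optional[str] = None               # optional static placement hint


def emit_c(codelets):
    """Lower the IR to C source that calls a hypothetical runtime API."""
    out = ['#include "rt.h" /* hypothetical runtime header */', ""]
    # Each codelet body becomes an ordinary sequential C function.
    for c in codelets:
        out += [f"static void {c.name}_fn(void *ctx) {{", f"    {c.body}", "}", ""]
    # Setup code creates the codelets, states dependencies, and passes hints.
    out.append("int main(void) {")
    for c in codelets:
        out.append(f"    rt_codelet *{c.name} = rt_codelet_create({c.name}_fn);")
    for c in codelets:
        for p in c.preds:
            out.append(f"    rt_depends({p}, {c.name});")
        if c.locale_hint is not None:
            out.append(f'    rt_hint_locale({c.name}, "{c.locale_hint}");')
    out.append("    return rt_run();")
    out.append("}")
    return "\n".join(out)


ir = [
    CodeletIR("load", "load_tile(ctx);"),
    CodeletIR("compute", "compute_tile(ctx);", preds=["load"], locale_hint="same-as:load"),
]
generated = emit_c(ir)
print(generated)
```

Keeping the codelet bodies as plain sequential C lets the final step reuse existing C compilers. The runtime-specific parts are confined to the small setup function.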

This work will be done in the context of DOE co-design applications. We will use kernels of these applications as well as other benchmarks and synthetic kernels in the course of our research. The needs of the co-design applications will provide valuable feedback to the research process.

Team Members

- ET International (ETI): Execution Model, Runtime Systems, Parallel Intermediate Language, Resilience

- Reservoir Labs: Programming Models, Loop Optimizations

- University of Illinois at Urbana-Champaign (UIUC): High-level data structures and algorithms for parallelism and locality

- Pacific Northwest National Laboratory (PNNL): Co-design and NWChem kernels for evaluation, energy efficiency

Objectives

Scalability: Expose, express, and exploit O(10^10) concurrency

Locality: Locality-aware data types, algorithms, and optimizations

Programmability: Easy expression of asynchrony, concurrency, locality

Portability: Stack portability across heterogeneous architectures

Energy Efficiency: Maximize static and dynamic energy savings while managing the tradeoff between energy efficiency, resilience, and performance

Resilience: Gradual degradation in the face of many faults

Interoperability: Leverage legacy code through a gradual transformation towards exascale performance

Applications: Support NWChem

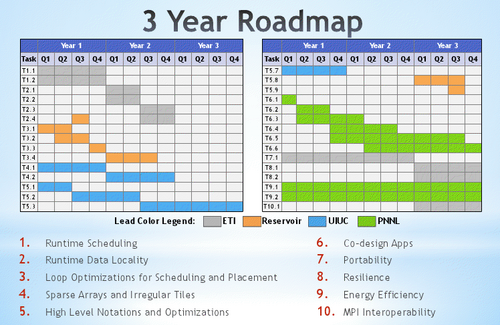

Roadmap

Impact

Scalability:

- Expose, express and exploit new forms of parallelism

- Provide mechanisms for scheduling tasks across the system as if it were a single system rather than many disparate pieces

- Symmetric access semantics across heterogeneous devices

Locality:

- Provide mechanisms to express locality as a first-class citizen

- Expose the memory hierarchies to the compiler (and programmer)

- Provide data types and memory models so that the programmer can view the system as one system instead of many disparate memories

Programmability:

- Create easier ways of expressing asynchrony, thereby enabling programmers to write more scalable programs

- R-Stream will automatically extract parallelism and locality from common idioms

- Provide data types and algorithms that offer high-level representations of arrays, mapped to the memory and algorithm hierarchy, for automatic parallelization and data placement

Portability:

- Demonstrate a software stack that is portable to multiple architectures, given a C compiler for each

- Support a platform abstraction layer in SWARM, which will allow it to operate on multiple heterogeneous architectures

- Work with Xpress on the XPI interface to show application portability between runtime systems

Energy Efficiency:

- Collocate execution and data

- Dynamically load balance execution based on resource availability

- Dynamically scale resources based on load

- Provide new programming constructs (Rescinded Primitive Data Types) that allow compressed data formats at higher memory levels to minimize data transfer costs

Resilience:

- Integrate containment domains and their extensions into the SWARM runtime system and SCALE compiler

- Allow graceful degradation in the face of exascale-level faults, and provide a framework for software validation of soft faults
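A containment domain generally follows a preserve, execute, detect, recover cycle. On a detected error it restores its preserved inputs and re-executes locally, and it escalates to the enclosing domain if local recovery fails. The minimal sketch below follows that pattern under some assumptions: state is a Python dict, and a user-supplied `check` function stands in for software validation of soft faults. `ContainmentDomain`, `ContainmentError`, and their parameters are hypothetical and do not represent the SWARM or SCALE interface.

```python
import copy


class ContainmentError(Exception):
    """Raised when a domain cannot recover locally and must escalate."""


class ContainmentDomain:
    """Preserve, execute, detect, recover, for state held in a dict."""

    def __init__(self, name, max_retries=2):
        self.name = name
        self.max_retries = max_retries

    def run(self, state, body, check):
        preserved = copy.deepcopy(state)          # preserve the domain's inputs
        for _ in range(self.max_retries + 1):
            try:
                body(state)                        # execute
                if check(state):                   # detect (software validation)
                    return state
            except Exception:
                pass  # any exception, including a child's ContainmentError, counts as a fault
            state.clear()                          # recover: restore preserved inputs
            state.update(copy.deepcopy(preserved))
        raise ContainmentError(f"{self.name}: retries exhausted, escalating to parent")


# Usage: one injected soft fault silently corrupts the result on the first attempt.
faults = {"remaining": 1}


def flaky_increment(s):
    s["x"] += 1
    if faults["remaining"] > 0:
        faults["remaining"] -= 1
        s["x"] = -999  # simulated silent data corruption


state = {"x": 41}
ContainmentDomain("increment").run(state, flaky_increment, check=lambda s: s["x"] >= 0)
print(state["x"])  # 42
```

Nesting happens when a parent domain's `body` calls a child domain's `run`. If the child exhausts its retries, its `ContainmentError` is treated as a fault by the parent, which then re-executes its larger region from its own preserved state.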

Interoperability:

- Work with Xpress on XPI interoperability with legacy codes so that all X-Stack runtime systems and applications can benefit from evolutionary/revolutionary runtime system interoperability

Applications:

- Provide NWChem kernels and expertise to all X-Stack projects

- Use Co-Design and NWChem applications to evaluate the Brandywine Team Software Stack

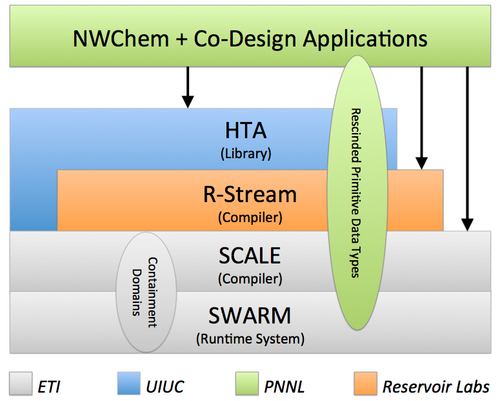

Software Stack

The X-Stack software stack consists of high-level data objects and algorithms (HTA: Hierarchical Tiled Arrays), the R-Stream loop-optimizing compiler, the SCALE parallel language compiler, and the SWARM distributed heterogeneous runtime system. The project will extend these existing software tools to improve parallelism, locality, programmability, portability, energy efficiency, resilience, and interoperability (see Impact above). In addition, it will add new infrastructure for energy efficiency (Rescinded Primitive Data Types) and resilience (Containment Domains).

SWARM (SWift Adaptive Runtime Machine)

- Codelets
  - Basic unit of parallelism
  - Nonblocking tasks
  - Scheduled upon satisfaction of precedence constraints

- Hierarchical Locale Tree: spatial position, data locality

- Lightweight Synchronization

- Asynchronous Split-phase Transactions: latency hiding

- Message Driven Computation

- Control-flow and Dataflow Futures

- Error Handling

- Active Global Address Space (planned)

- Fault tolerance (planned)
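Dataflow futures and split-phase transactions both let a codelet issue a long-latency operation and register a continuation instead of waiting. The following single-threaded sketch simulates that pattern. `DataflowFuture` is a hypothetical single-assignment value whose continuations fire when it is set. `Network` is also hypothetical: it fakes remote memory and completes requests only when `poll()` is called. Neither reflects SWARM's actual interface.

```python
from collections import deque


class DataflowFuture:
    """Single-assignment value; continuations fire when it is set (nothing blocks)."""

    def __init__(self):
        self._is_set = False
        self._value = None
        self._waiters = []

    def then(self, continuation):
        if self._is_set:
            continuation(self._value)
        else:
            self._waiters.append(continuation)

    def set(self, value):
        if self._is_set:
            raise RuntimeError("future already set")
        self._is_set, self._value = True, value
        waiters, self._waiters = self._waiters, []
        for w in waiters:
            w(value)


class Network:
    """Simulated interconnect: requests complete later, when poll() is called."""

    def __init__(self, remote_memory):
        self.remote = remote_memory
        self.in_flight = deque()

    def get(self, key):
        """Phase 1 of a split-phase transaction: issue the request and return at once."""
        fut = DataflowFuture()
        self.in_flight.append((key, fut))
        return fut

    def poll(self):
        """Phase 2: deliver completed responses, firing their continuations."""
        while self.in_flight:
            key, fut = self.in_flight.popleft()
            fut.set(self.remote[key])


log = []
net = Network({"a": 3, "b": 4})
fa, fb = net.get("a"), net.get("b")
fa.then(lambda v: log.append(("a", v)))
fb.then(lambda v: log.append(("b", v)))
log.append(("local work", None))  # overlaps with the outstanding requests
net.poll()
print(log)  # [('local work', None), ('a', 3), ('b', 4)]
```

The local work is recorded before either remote value arrives, which is the latency-hiding effect the split-phase style is meant to provide.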

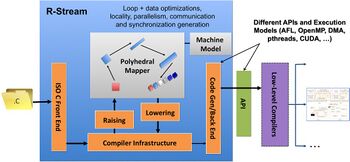

R-Stream

- Current capabilities:
  - Automatic parallelization and mapping
  - Heterogeneous, hierarchical targets
  - Automatic DMA/communication generation and optimization
  - Auto-tuning of tile sizes, mapping strategies, etc.
  - Scheduling with parallelism/locality layout tradeoffs
  - Corrective array expansion
- Planned capabilities:
  - Extend explicit data placement
  - Generation of parallel codelet codes from serial codes
  - Generation of SCALE IR and tuning hints on scheduling and data placement
  - Automatic mapping of irregular mesh codes
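R-Stream applies these transformations automatically to C code. The sketch below is not R-Stream output. It only shows, by hand, the kind of loop tiling such a compiler produces, plus a naive empirical search over candidate tile sizes in the spirit of auto-tuning. The function names and candidate sizes are arbitrary choices. In Python the timings mostly reflect interpreter overhead rather than cache behavior, so the sketch illustrates the loop structure and the search, not real speedups.

```python
import time


def matmul_naive(A, B, n):
    C = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            C[i][j] = sum(A[i][k] * B[k][j] for k in range(n))
    return C


def matmul_tiled(A, B, n, t):
    """Same product; the i, k, j loops are strip-mined into t-sized tiles."""
    C = [[0] * n for _ in range(n)]
    for ii in range(0, n, t):
        for kk in range(0, n, t):
            for jj in range(0, n, t):
                # Work on one t x t x t tile so its operands can stay in cache.
                for i in range(ii, min(ii + t, n)):
                    row_c, row_a = C[i], A[i]
                    for k in range(kk, min(kk + t, n)):
                        a, row_b = row_a[k], B[k]
                        for j in range(jj, min(jj + t, n)):
                            row_c[j] += a * row_b[j]
    return C


def autotune(A, B, n, candidates):
    """Empirical search: time each candidate tile size once and keep the fastest."""
    timings = {}
    for t in candidates:
        start = time.perf_counter()
        matmul_tiled(A, B, n, t)
        timings[t] = time.perf_counter() - start
    return min(timings, key=timings.get), timings


n = 24
A = [[(i + j) % 5 for j in range(n)] for i in range(n)]
B = [[(i * j) % 7 for j in range(n)] for i in range(n)]
best, timings = autotune(A, B, n, candidates=[4, 8, 16])
assert matmul_tiled(A, B, n, best) == matmul_naive(A, B, n)  # tiling preserves results
print("fastest tile size:", best)
```

Integer matrices are used so the tiled and untiled results match exactly despite the reordered summation.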

Hierarchical Tiled Arrays

- HTAs are recursive data structures

- Tree-structured representation of memory

- Includes a library of operations to enable the programming of codelets in the familiar notation of C/C++

- Represent parallelism using operations on arrays and sets

- Represent parallelism using parallel constructs such as parallel loops

- Compiler optimizations on sequences of HTA operations will be evaluated
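To show what "recursive" and "tree-structured" mean for an HTA, here is a minimal one-dimensional sketch. It assumes each tile is either a leaf list or another HTA, that indexing follows a path of tile indices, and that whole-array operations act tile by tile. The HTA work described above targets C/C++ with multi-dimensional tiles; the `HTA` class and its `build`, `hmap`, and `reduce` methods here are hypothetical and are not that library's API.

```python
import functools
import operator


class HTA:
    """Minimal 1-D hierarchical tiled array: each tile is a leaf list or another HTA."""

    def __init__(self, tiles):
        self.tiles = list(tiles)

    @classmethod
    def build(cls, data, *tile_sizes):
        """Tile `data` recursively; tile_sizes[0] is the outermost tile size."""
        size, rest = tile_sizes[0], tile_sizes[1:]
        chunks = [data[i:i + size] for i in range(0, len(data), size)]
        return cls(cls.build(c, *rest) if rest else list(c) for c in chunks)

    def __getitem__(self, path):
        """Hierarchical indexing: hta[tile, subtile, ..., element]."""
        node = self
        for i in path:
            node = node.tiles[i] if isinstance(node, HTA) else node[i]
        return node

    def hmap(self, fn):
        """Apply fn to every leaf tile; tiles are independent, so this is parallelizable."""
        return HTA(t.hmap(fn) if isinstance(t, HTA) else fn(t) for t in self.tiles)

    def reduce(self, leaf_fn, combine):
        """Reduce each leaf tile, then combine partial results up the hierarchy."""
        partials = [t.reduce(leaf_fn, combine) if isinstance(t, HTA) else leaf_fn(t)
                    for t in self.tiles]
        return functools.reduce(combine, partials)

    def flatten(self):
        out = []
        for t in self.tiles:
            out.extend(t.flatten() if isinstance(t, HTA) else t)
        return out


hta = HTA.build(list(range(16)), 8, 4)  # 2 outer tiles, each holding 2 leaf tiles of 4
print(hta[1, 0, 2])                     # 10
doubled = hta.hmap(lambda tile: [2 * x for x in tile])
print(doubled.flatten()[:4])            # [0, 2, 4, 6]
print(hta.reduce(sum, operator.add))    # 120
```

The outer level could map to locales and the inner level to cores or cache-sized blocks. In that case `hmap` and the per-tile phase of `reduce` are the natural units to hand to the runtime as parallel work.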

Rescinded Primitive Data Type Access

- Redundancy removal to improve performance/energy
  - Communication
  - Storage
- Redundancy addition to improve fault tolerance
  - High-level fault-tolerant error correction codes and their distributed placement
- Placeholder representation for aggregated data elements
- Memory allocation/deallocation/copying
- Memory consistency models
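The specific encodings used by Rescinded Primitive Data Types are not described here, so the sketch below uses simple stand-ins for illustration only. Lossless run-length encoding represents redundancy removal before a transfer. XOR parity across equal-size blocks represents redundancy addition: as in RAID-5, it can rebuild any single lost block. All function names are hypothetical.

```python
from functools import reduce


def rle_encode(values):
    """Redundancy removal: collapse runs of equal values into (value, count) pairs."""
    out = []
    for v in values:
        if out and out[-1][0] == v:
            out[-1][1] += 1
        else:
            out.append([v, 1])
    return [tuple(p) for p in out]


def rle_decode(pairs):
    return [v for v, n in pairs for _ in range(n)]


def xor_parity(blocks):
    """Redundancy addition: element-wise XOR of equal-length integer blocks."""
    return [reduce(lambda a, b: a ^ b, column) for column in zip(*blocks)]


def rebuild(surviving_blocks, parity):
    """Recover the single missing block as the XOR of the parity and all survivors."""
    return xor_parity(list(surviving_blocks) + [parity])


field_values = [0] * 6 + [7] * 3 + [0] * 5
packed = rle_encode(field_values)  # fewer items to transfer than the raw field
print(packed)                      # [(0, 6), (7, 3), (0, 5)]

blocks = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]  # e.g. held by three different locales
parity = xor_parity(blocks)                 # stored on a fourth locale
lost = 1
recovered = rebuild([b for i, b in enumerate(blocks) if i != lost], parity)
print(recovered)                            # [4, 5, 6]
```

Placing the parity block on a different locale from the data blocks is what makes the added redundancy useful. Losing any one locale still leaves enough information to reconstruct its block.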

NWChem

- DOE’s premier computational chemistry software

- One-of-a-kind solution scalable with respect to scientific challenge and compute platforms

- From molecules and nanoparticles to solid state and biomolecular systems

- Open-source release (ECL 2.0) has greatly expanded the user and developer base

- Worldwide distribution (70% academic)

- Ab initio molecular dynamics runs at petascale

- Scalability to 100,000 processors demonstrated

- Smart data distribution and communication algorithms enable hybrid-DFT to scale to large numbers of processors

Deliverables

Q1 (12/1/2012)

Q2 (3/1/2013)

- March 2013 PI Meeting: PI Meeting presentation

- EXaCT All-hands meeting: DynAX Presentation

Q3 (6/1/2013)

- Q3 Report

- NWChem SCF code download version 2

- PIL Design v0.4

- Tensor Contraction Engine (OpenMP, CUDA, C, Fortran)

Year 1 report: Y1 Report

Q4 (9/1/2013)

Q5 (12/1/2013)

Q6 (3/1/2014)

- Q6 Report

- TCE Presentation

Q7 (6/1/2014)

- Q7 Report

- HTA Deep Dive presentation

Year 2 report: Y2 Report